Introducing Model Monitoring & Metrics Store In Cloudera Data Science Workbench

With only about 35% of machine learning models making it into production in the enterprise (IDC), it’s no wonder that production machine learning has become one of the most important focus areas for data scientists and ML engineers alike. As you may remember, we recently announced a full set of MLOps capabilities in Cloudera Machine Learning, our cloud-native machine learning tool. These capabilities are designed to tackle the most pressing challenges of production machine learning: making it easier than ever to deploy, monitor, and operate models at scale, while keeping a firm grasp on model performance, accuracy, and security no matter where models are being served.

This week we’re announcing the general availability of the same first-class model monitoring and serving infrastructure in our latest release, Cloudera Data Science Workbench (CDSW) 1.8. These capabilities enable on-premises customers running CDH or HDP in their data centers to streamline end-to-end ML workflows more effectively. In this release, we’re addressing a few key challenges:

Deployment & Serving

Once models are trained and ready for prime time, the next step is to deploy them to production. Data scientists often run into security issues and inefficient workflows when deploying and serving models. Inconsistent deployment and serving workflows can create security gaps and slow down delivery of ML use cases.

The truth is that model serving and deployment workflows have repeatable, boilerplate aspects which enterprises can automate using modern DevOps techniques. Having these workflows supported by highly secure and intuitive deployment capabilities enables ML engineers to focus on the model itself instead of the surrounding code and infrastructure.

Model Monitoring

As we’ve discussed previously, a model can be defined as a piece of software used to provide predictions. Models can take many forms, from Python-based REST APIs to R scripts to distributed frameworks like SparkML. Model monitoring software is nothing new, and tools for monitoring technical performance, such as response time and throughput, have existed for quite some time.

Models, however, are unique compared to normal applications in one important way: they predict the world around them, which is constantly changing. Understanding how models are performing functionally in production is a difficult problem that most enterprises have yet to solve. This is due to the unique and complex nature of model behavior, which requires custom tooling for things like monitoring concept drift and model accuracy. Customers need a purpose-built model monitoring solution that’s flexible enough to handle the complexity of a model’s life cycle and behavior.
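To make the idea of drift monitoring a bit more concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), which compares the distribution of a feature or score at training time against what the model sees in production. This is an illustration only, not how CDSW implements monitoring; the function name, the bin count, and the smoothing constant are all assumptions made for the example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """Illustrative PSI between a baseline sample and a production sample.

    Bins are equal-width over the range of the baseline (an assumption made
    for simplicity); production values outside that range are clamped into
    the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # smoothing so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]          # training-time values
    production = [0.5 + i / 200 for i in range(100)]  # shifted distribution
    print("PSI vs. itself: ", population_stability_index(baseline, baseline))
    print("PSI vs. shifted:", population_stability_index(baseline, production))
```

A PSI of zero means the two samples fall into the bins in identical proportions, and larger values indicate a larger shift. What threshold should trigger an alert or a retrain is a judgment call that depends on the model and the business.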

How We’re Tackling These Challenges in CDSW

CDSW 1.8 delivers purpose-built production ML capabilities to more securely and predictably deploy and serve models, then monitor those models at scale to serve the business effectively.

ML Model Monitoring Service, Metric Store, and SDK

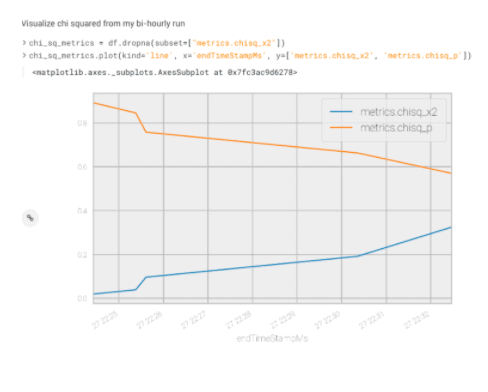

With this release, we’ve built a first-class model monitoring service targeted at addressing both the technical monitoring (latency, throughput, etc.) and functional or prediction monitoring in a repeatable, secure, and scalable way. This includes a scalable metrics store for capturing any needed metric for models during and after scoring, a unique identifier for tracking individual model predictions, a UI for visualizing these metrics, and a powerful Python SDK for tracking and analyzing custom metrics.
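As a rough mental model of how a metrics store and a per-prediction identifier fit together, the sketch below keeps metrics in memory, returns a unique ID for each prediction, and lets ground truth be attached later so accuracy can be computed. It is not the CDSW SDK: the class `InMemoryMetricsStore` and its methods are hypothetical names invented for this illustration, and a real metrics store would be persistent and shared across model replicas.

```python
import time
import uuid
from typing import Any, Dict


class InMemoryMetricsStore:
    """Hypothetical stand-in for a metrics store, for illustration only."""

    def __init__(self) -> None:
        self._records: Dict[str, Dict[str, Any]] = {}

    def track_prediction(self, model_name: str, features: Any, prediction: Any) -> str:
        """Record metrics at scoring time and return a unique prediction ID."""
        prediction_id = str(uuid.uuid4())
        self._records[prediction_id] = {
            "model": model_name,
            "timestamp": time.time(),
            "metrics": {"features": features, "prediction": prediction},
        }
        return prediction_id

    def track_delayed_metric(self, prediction_id: str, name: str, value: Any) -> None:
        """Attach a metric (for example, ground truth) after scoring."""
        self._records[prediction_id]["metrics"][name] = value

    def accuracy(self, model_name: str) -> float:
        """Share of labeled predictions whose prediction matches 'actual'."""
        labeled = [
            r["metrics"]
            for r in self._records.values()
            if r["model"] == model_name and "actual" in r["metrics"]
        ]
        if not labeled:
            raise ValueError("no predictions with ground truth recorded yet")
        correct = sum(1 for m in labeled if m["prediction"] == m["actual"])
        return correct / len(labeled)


if __name__ == "__main__":
    store = InMemoryMetricsStore()
    first = store.track_prediction("churn", {"tenure": 3}, prediction=1)
    second = store.track_prediction("churn", {"tenure": 40}, prediction=0)
    # Ground truth typically arrives later; the ID ties it back to the prediction.
    store.track_delayed_metric(first, "actual", 1)
    store.track_delayed_metric(second, "actual", 1)
    print("accuracy:", store.accuracy("churn"))
```

The unique identifier is what makes functional monitoring possible: the true outcome of a prediction is often known only days or weeks later, and the ID lets that outcome be joined back to the exact inputs and output recorded at scoring time.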

Superior Security for Model Deployments

Models are often used in mission-critical applications where secure serving is needed to maximize uptime and avoid costly breaches. In this release, we have removed several single points of failure, making deployed models more secure and trustworthy. This significantly reduces issues and helps eliminate unplanned downtime in production environments.

Building The Future of Enterprise Production ML

CDSW’s MLOps features deliver open, standardized, and flexible tooling for production machine learning workflows. Whether you’re starting your ML journey or looking to scale ML use cases to the hundreds or thousands, Cloudera offers the most comprehensive tooling and in-house expertise to help you achieve your AI goals.

These new production ML capabilities complete CDSW’s offering, enabling data scientists to securely collaborate in managed containerized workspaces, experiment with the IDEs and libraries of their choice, test and tune models with experiments, then deploy the best models into production to power business applications and data products across the enterprise.

To learn more about production ML at Cloudera, watch Enabling Production ML at Scale: Hands-on with MLOps in Cloudera Machine Learning.
