In the second blog of the Universal Data Distribution blog series, we explored how Cloudera DataFlow for the Public Cloud (CDF-PC) can help you implement use cases like data lakehouse and data warehouse ingest, cybersecurity, and log optimization, as well as IoT and streaming data collection. A key requirement for these use cases is the ability not only to actively pull data from source systems but also to receive data that is pushed from various sources to the central distribution service.

In this third installment of the Universal Data Distribution blog series, we will take a closer look at how CDF-PC’s new Inbound Connections feature enables universal application connectivity and allows you to build hybrid data pipelines that span the edge, your data center, and one or more public clouds.

What are inbound connections?

There are two ways to move data between different applications/systems: pull and push.

When you pull data, you are taking information out of an application or system. Most applications and systems provide APIs that allow you to extract information from them. Databases offer JDBC endpoints, web applications offer REST APIs, and industry-specific applications often provide proprietary interfaces. Regardless of the type of interface, NiFi’s library of processors allows you to pull data from any system and deliver it to any destination.

If an application or system does not provide an interface to extract data, or if other constraints such as network connectivity prevent you from using a pull approach, a push strategy can be a good alternative. Pushing data means your source application/system actively sends information to a target system. NiFi offers specific processors like ListenHTTP, ListenTCP, and ListenSyslog that allow other applications/systems to send data to NiFi, from where it is distributed to one or more target systems. This helps you avoid building custom, hard-to-manage 1:1 integrations between applications.

While NiFi provides the processors to implement a push pattern, there are additional questions that must be answered, like:

- How is authentication handled? Who manages certificates and configures the source system and NiFi correctly?

- How do you provide a stable hostname to your source application when running a NiFi cluster with several nodes?

- Which load balancer should you pick and how should it be configured?

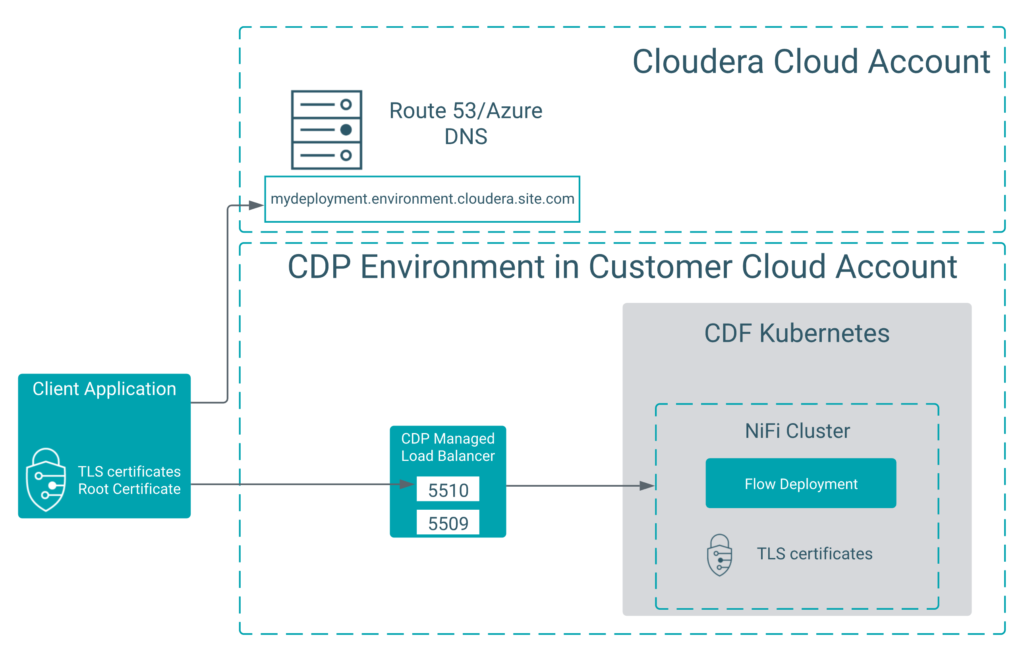

In CDF-PC, Inbound Connections allow you to support the data push approach and stream data from external source applications to a flow deployment. When you assign an inbound connection endpoint to a flow deployment, CDF-PC automatically creates a stable hostname backed by a load balancer that fronts your deployment, a server certificate that matches the hostname, and client certificates for mutual TLS authentication. It also configures NiFi accordingly.

In short, it does all the work required to set up a secure, scalable, and robust endpoint to which you can push data.

Figure 1: CDF-PC takes care of everything you need to provide stable, secure, scalable endpoints including load balancers, DNS entries, certificates and NiFi configuration
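From the point of view of a source application, pushing to such an endpoint means opening a TLS connection that verifies the server certificate and presents a client certificate. The Python sketch below shows that client side using only the standard library. It assumes you have downloaded the CA bundle and a client certificate/key pair for the endpoint; the file names, hostname, port, and path in the usage note are hypothetical placeholders, not values CDF-PC generates.

```python
import http.client
import ssl
from typing import Optional


def make_mtls_context(
    cafile: Optional[str] = None,
    certfile: Optional[str] = None,
    keyfile: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a client-side TLS context for pushing to an inbound endpoint.

    cafile:   CA bundle that signed the endpoint's server certificate
              (None falls back to the system trust store).
    certfile/keyfile: client certificate and private key presented for
              mutual TLS (skipped when certfile is None).
    """
    # create_default_context verifies the server certificate and checks
    # that it matches the hostname we connect to.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=cafile)
    if certfile is not None:
        ctx.load_cert_chain(certfile=certfile, keyfile=keyfile)
    return ctx


def push(host: str, port: int, path: str, body: bytes, ctx: ssl.SSLContext) -> int:
    """POST one payload to the endpoint and return the HTTP status code."""
    conn = http.client.HTTPSConnection(host, port, context=ctx, timeout=10)
    try:
        conn.request(
            "POST",
            path,
            body=body,
            headers={"Content-Type": "application/octet-stream"},
        )
        return conn.getresponse().status
    finally:
        conn.close()
```

A call might look like `push("my-flow.example.com", 9000, "/contentListener", b"hello", make_mtls_context("ca.pem", "client.pem", "client-key.pem"))`, where every argument is hypothetical and the path assumes a ListenHTTP processor using its default base path. The point of the sketch is that the client only needs a stable hostname and its certificates; which NiFi node actually receives the request is left to the load balancer.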

Using Inbound Connections to build hybrid data pipelines

A common use case for Inbound Connections is hybrid data pipelines. A data pipeline can be considered hybrid when it spans edge devices, data center deployments, or systems in multiple public clouds.

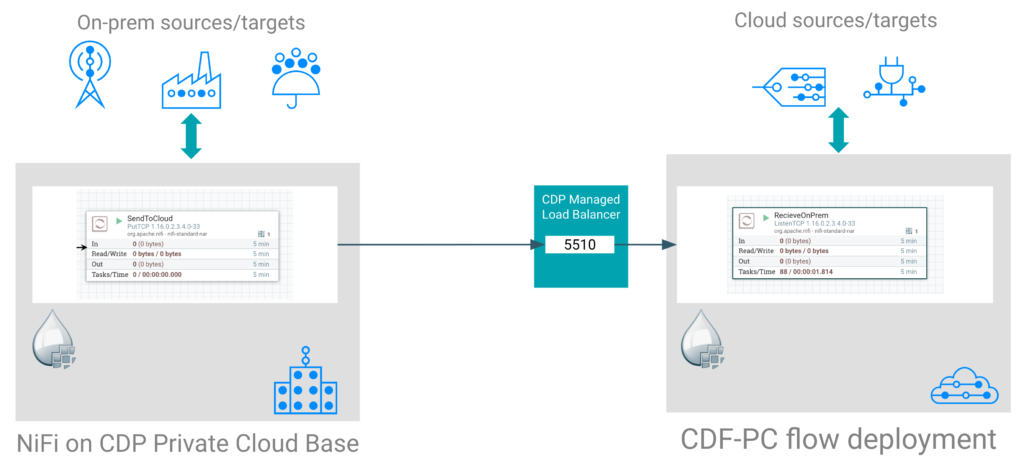

In a hybrid data pipeline that spans the public cloud and the data center, for example, NiFi deployments in the cloud are often not allowed to pull data from on-premises systems. Inbound Connections allow you to reverse the direction of the data flow and push data from on-premises systems to your NiFi cloud deployments.

Figure 2: Building hybrid data pipelines with on-premises and cloud NiFi deployments

Instead of configuring every on-premises application to push data to your cloud NiFi deployments, the most efficient approach is to establish a NiFi deployment on-premises (e.g. using Cloudera Flow Management) and use it to collect data from all your on-premises systems. If you need to send data to the cloud, you can now configure your NiFi flows to push data to cloud deployments using Inbound Connections. By doing this, you get several benefits:

- Avoid opening your on-premises firewall for incoming connection requests from the cloud

- A single and consistent approach to send data from on-premises to the cloud

- Data filtering, routing, and transformation capabilities on-premises and in the cloud

- The ability to choose the right protocol for your use case (HTTP, TCP, UDP)

Using Inbound Connections for universal application connectivity

With Inbound Connections enabling push-based data movement, you can now connect any application to your NiFi flow deployments, allowing you to use CDF-PC as the universal data distribution tool in the public cloud. While many use cases benefit from push-based data movement, two well-established patterns are worth exploring in more detail: syslog data pipelines and a Kafka REST Proxy.

Syslog data pipelines for cybersecurity use cases

Syslog is a standard for message logging and can be used by application developers to log information, failure, or debug messages. It is widely adopted by network device manufacturers to log event messages from routers, switches, firewalls, load balancers, and other networking equipment. Syslog typically follows an architecture of a syslog client that collects event data from the device and pushes it to a syslog server.
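To make the client side of this architecture concrete, the sketch below formats a message in the RFC 5424 syslog format and pushes it to a syslog server over TCP. The `<PRI>` value is `facility * 8 + severity`, as defined in RFC 5424. It assumes the receiving server accepts newline-delimited messages over TCP; the hostnames, application names, and the omission of PROCID, MSGID, and structured data are illustrative choices, not requirements of any particular device or of NiFi.

```python
import socket
from datetime import datetime, timezone

# RFC 5424 severity codes (0 = emergency ... 7 = debug).
SEVERITY = {"emerg": 0, "alert": 1, "crit": 2, "err": 3,
            "warning": 4, "notice": 5, "info": 6, "debug": 7}


def format_rfc5424(facility: int, severity: int, hostname: str,
                   app_name: str, msg: str, timestamp: datetime) -> bytes:
    """Render one RFC 5424 message with no PROCID, MSGID or structured data."""
    if not (0 <= facility <= 23 and 0 <= severity <= 7):
        raise ValueError("facility must be 0-23 and severity 0-7")
    pri = facility * 8 + severity
    ts = timestamp.astimezone(timezone.utc).isoformat(timespec="milliseconds")
    ts = ts.replace("+00:00", "Z")
    # <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID STRUCTURED-DATA MSG
    # ("-" is the RFC 5424 NILVALUE for the fields we leave empty)
    return f"<{pri}>1 {ts} {hostname} {app_name} - - - {msg}".encode("utf-8")


def send_tcp(host: str, port: int, messages: list[bytes]) -> None:
    """Push newline-delimited syslog messages to a syslog server over TCP."""
    with socket.create_connection((host, port), timeout=10) as sock:
        for m in messages:
            sock.sendall(m + b"\n")
```

For example, a firewall reporting a critical authentication event (facility 4, severity 2) produces a message starting with `<34>1`. Sending over UDP instead would only change the transport in `send_tcp`, not the message format.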

Since data from networking equipment plays an important role in cybersecurity use cases like intrusion detection and general network threat detection, organizations need scalable and robust data pipelines to move network device event data to their security information and event management (SIEM) system. With Inbound Connections and NiFi’s ListenSyslog processor, organizations can now use CDF-PC flow deployments as scalable syslog servers that receive the raw events for further processing. Using NiFi’s rich filtering, routing, and processing capabilities, users can easily filter out unnecessary data to reduce data volume, which is one of the main cost drivers of SIEM solutions. In addition to filtering, users can transform the syslog event data into whatever format the consuming applications require.

Figure 3: A scalable, robust syslog data pipeline powered by CDF-PC’s flow deployments with Inbound Connections
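In a CDF-PC flow, this kind of volume reduction would be configured with NiFi's own routing and filtering processors rather than written by hand. The Python sketch below only illustrates the underlying logic: derive each event's severity from its `<PRI>` field (severity is `PRI mod 8`) and drop low-priority events before they reach the SIEM. The default threshold and the decision to keep unparseable messages are hypothetical policy choices.

```python
import re
from typing import Iterable, Iterator, Optional

# Matches the leading <PRI> field shared by RFC 3164 and RFC 5424 messages.
PRI_RE = re.compile(r"^<(\d{1,3})>")


def severity_of(message: str) -> Optional[int]:
    """Return the syslog severity (PRI mod 8), or None if PRI is missing/invalid."""
    m = PRI_RE.match(message)
    if m is None:
        return None
    pri = int(m.group(1))
    if pri > 191:  # 23 * 8 + 7 is the largest valid PRI value
        return None
    return pri % 8


def filter_for_siem(messages: Iterable[str], max_severity: int = 4) -> Iterator[str]:
    """Yield only messages at least as severe as max_severity.

    Lower numbers are more severe (0 = emergency, 7 = debug), so the default
    of 4 forwards emergency through warning and drops notice, info and debug.
    Messages without a valid PRI are kept so nothing is silently discarded.
    """
    for msg in messages:
        sev = severity_of(msg)
        if sev is None or sev <= max_severity:
            yield msg
```

Applied to a stream where most events are informational link or interface notices, a filter like this forwards only the small fraction that security analysts actually need, which is where the SIEM cost savings come from.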

Kafka REST Proxy for streaming data

Apache Kafka is a popular open-source messaging platform that relies heavily on the push model to ingest data from producers into topics. Producers are usually written in Java using Kafka’s producer API, but some clients cannot use Java and need a generic way to post data through a REST API.

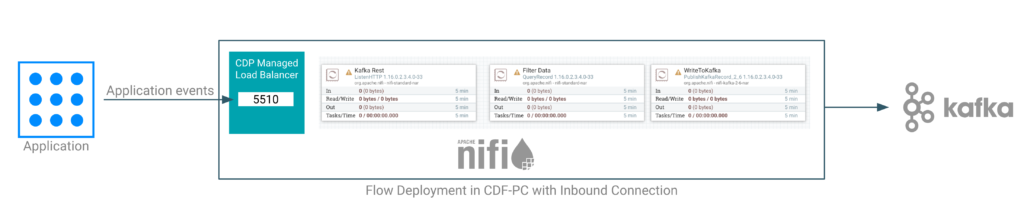

With Inbound Connections and NiFi’s ListenHTTP processor, users can now expose any NiFi flow through a stable endpoint that applications can use to send data to Kafka. The NiFi flow behind the Inbound Connection can not only receive data and forward it to a Kafka topic but can also perform schema validation, format conversion, and data transformation, as well as route, filter, and enrich the data. Just like any other flow deployment in CDF-PC, users can configure auto-scaling parameters and monitor key performance metrics to make sure the deployment can handle data bursts and growing data volumes as more applications are onboarded.

Figure 4: Exposing CDF-PC’s flow deployments as a Kafka REST Proxy allows you to use NiFi’s rich transformation capabilities before sending events to the destination Kafka topic
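For a non-Java client, producing to Kafka through such a flow reduces to an HTTP POST. The sketch below builds and sends that request with Python's standard library. It assumes the flow accepts newline-delimited JSON; the actual accepted format depends on how the flow's record reader is configured, and the endpoint URL in the usage note is a hypothetical placeholder. For mutual TLS, pass an `ssl.SSLContext` loaded with the endpoint's client certificate.

```python
import json
import ssl
import urllib.request
from typing import Any, Optional


def build_event_request(endpoint: str,
                        events: list[dict[str, Any]]) -> urllib.request.Request:
    """Serialize events as newline-delimited JSON and wrap them in a POST."""
    body = "\n".join(
        json.dumps(e, separators=(",", ":")) for e in events
    ).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        headers={"Content-Type": "application/x-ndjson"},
        method="POST",
    )


def send(req: urllib.request.Request,
         ctx: Optional[ssl.SSLContext] = None) -> int:
    """Send the request and return the HTTP status (HTTP errors raise)."""
    with urllib.request.urlopen(req, timeout=10, context=ctx) as resp:
        return resp.status
```

For example, `send(build_event_request("https://my-flow.example.com:9000/contentListener", [{"id": 1, "temp": 21.5}]))` would post one event. Batching several events per request keeps HTTP overhead low; the flow can then split, validate, and route the records before publishing them to the Kafka topic.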

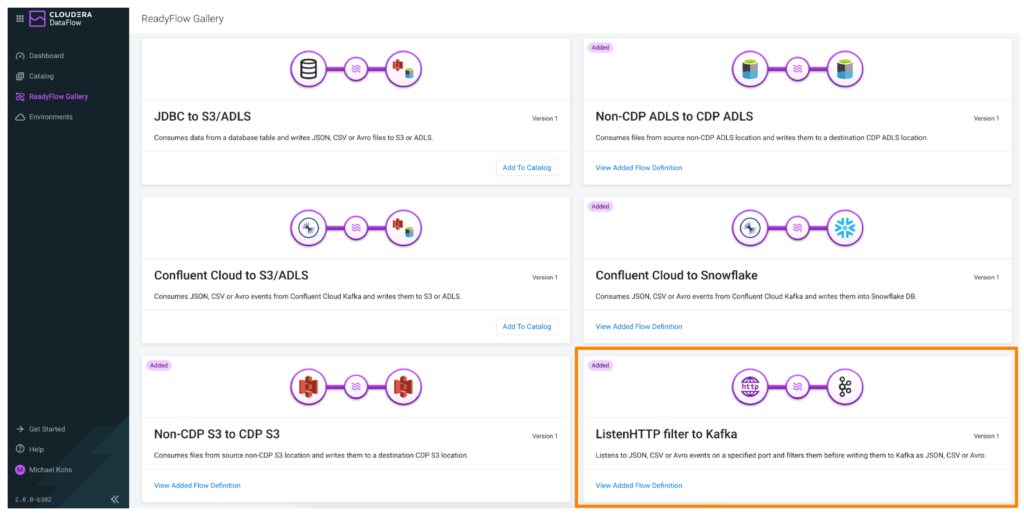

To help you get started with Kafka REST Proxy use cases in CDF-PC, you can use the prebuilt ReadyFlow available in the ReadyFlow gallery.

Figure 5: Prebuilt ReadyFlow, which is available in the ReadyFlow gallery

Summary and getting started

Inbound Connections allow organizations to implement the push pattern in a scalable, robust way, unlocking hybrid data pipelines and providing universal application connectivity to their developers. CDF-PC takes care of infrastructure management, security certificate generation, and configuration, allowing NiFi users to truly focus on developing and running their data flows.

To try out Inbound Connections on your own, take our interactive product tour or sign up for a free trial.