The multi-part blog post Untangling Apache Hadoop YARN provided an overview of how the YARN scheduler works. In this post we discuss technical details around how FairScheduler Preemption works and best practices to consider when configuring it.

We also present a recent overhaul of FairScheduler Preemption in CDH 5.11 which attempts to address a number of issues as documented in YARN-4752.

Definitions

Before we begin, it is important to define some key FairScheduler queue properties.

Steady FairShare

The FairScheduler uses hierarchical queues. Queues are sibling queues when they have the same parent. The weight associated with a queue determines the amount of resources a queue deserves in relation to its sibling queues. This amount is known as Steady FairShare. The Steady FairShare is calculated at the queue level; for the root queue it equals all of the cluster's resources. The Steady FairShare for a queue is calculated as follows:

This number only provides visibility into resources a queue can expect, and is not used in scheduling decisions, including preemption. In other words, it is the theoretical FairShare value of a queue.

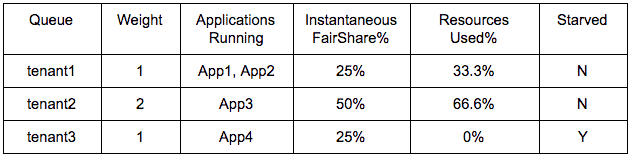

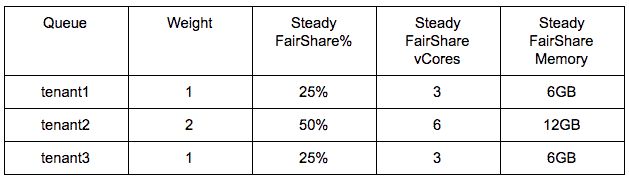

For example, let’s say YARN is managing a total of 12 vCores and 24GB memory. If three tenant queues need to be configured, then Steady FairShare is calculated based on assigned weights as follows:
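The weight-proportional split can be sketched in a few lines of Python. This is a simplified illustration rather than the scheduler's actual code: the function name is hypothetical, and the 1:2:1 weights are an assumption chosen to match the 25%, 50% and 25% shares used later in this post.

```python
def steady_fair_share(weights, cluster):
    """Split each cluster resource among sibling queues in proportion to weight."""
    total_weight = sum(weights.values())
    return {
        queue: {name: amount * weight / total_weight for name, amount in cluster.items()}
        for queue, weight in weights.items()
    }


cluster = {"vcores": 12, "memory_gb": 24}
# Assumed weights (1:2:1); substitute the weights configured on your queues.
weights = {"tenant1": 1, "tenant2": 2, "tenant3": 1}

shares = steady_fair_share(weights, cluster)
for queue, share in shares.items():
    print(queue, share)
```

Under these assumed weights, tenant2 is entitled to half of the cluster and the other two tenants to a quarter each.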

Instantaneous FairShare

In order to enable elasticity in a multi-tenant cluster, FairScheduler allows queues to use more resources than their Steady FairShare when a cluster has idle resources. An active queue is one with at least one running application, whereas an inactive queue is one with no running applications.

Instantaneous FairShare is the calculated FairShare value for each queue based on active queues only. This does not mean that this amount of resources is reserved for the queue. To enforce Instantaneous FairShare, you must enable preemption and configure FairShare Starvation as discussed in the next section.

This value differs from Steady FairShare in that inactive queues are not assigned any Instantaneous FairShare. The two values are equal when all queues are active.

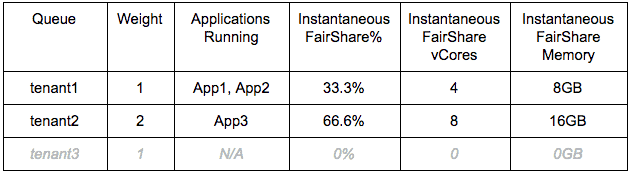

In the above example, if only tenant1 and tenant2 queues are active, then the Instantaneous FairShare will be computed as follows:
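As a rough Python sketch, the same calculation can be restricted to active queues. The function name and the 1:2:1 weights are assumptions made for illustration, and shares are expressed as a percentage of the cluster.

```python
def instantaneous_fair_share(weights, active, cluster_total=100.0):
    """Share cluster_total among active queues by weight; inactive queues get 0."""
    active_weight = sum(w for q, w in weights.items() if q in active)
    if active_weight == 0:
        return {q: 0.0 for q in weights}
    return {
        q: (cluster_total * w / active_weight if q in active else 0.0)
        for q, w in weights.items()
    }


# Assumed weights (1:2:1), matching the percentages quoted in this post.
weights = {"tenant1": 1, "tenant2": 2, "tenant3": 1}

two_active = instantaneous_fair_share(weights, active={"tenant1", "tenant2"})
all_active = instantaneous_fair_share(weights, active=set(weights))
print(two_active)
print(all_active)
```

With only tenant1 and tenant2 active, the shares come out at roughly one third and two thirds of the cluster. With all three queues active, they match the Steady FairShare.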

Note that the term “FairShare” is generally loosely used to refer to either type of FairShare. It is important to determine which FairShare is being referred to in any discussion. In this post, unless otherwise qualified, FairShare means Instantaneous FairShare.

Minimum Share

A queue can specify that it requires a minimum amount of resources. This is also referred to as the MinShare of the queue. It is important to note that this does not mean that this minimum amount of resources is reserved for the queue. MinShare is only enforced when all of the following are true:

- The queue is active.

- Preemption is enabled.

- MinShare Starvation is configured as discussed in the following section.

Starvation and Preemption

Starvation and Preemption are related concepts and are best discussed together.

Due to the elasticity feature in FairScheduler, applications running in a queue can keep other applications (running in the same queue or a different queue) in a starved state. In the previous example, let’s say only the tenant1 and tenant2 queues were active and used 33.3% and 66.7% of resources respectively. If tenant3 subsequently becomes active, the Instantaneous FairShare of the tenants will change to 25%, 50% and 25% respectively. However, applications in the tenant3 queue will have to wait for applications in tenant1 and/or tenant2 to release resources. Until then, tenant3 will have unmet demand, or “starvation”, in the form of pending containers.

Preemption allows this imbalance to be adjusted in a predictable manner. It enables reclamation of resources from queues exceeding their FairShare without waiting for queues to release resources.

Enabling Preemption

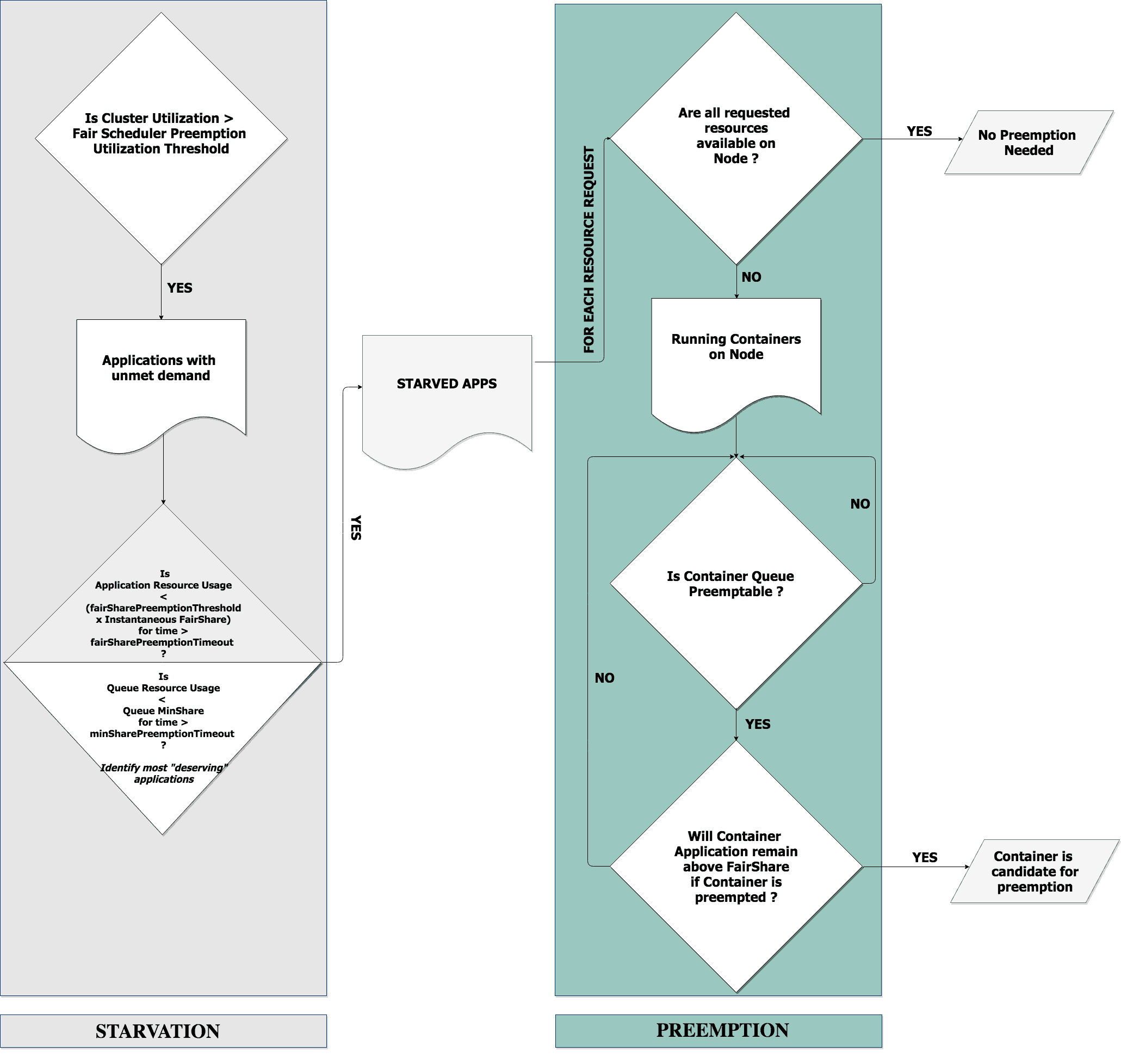

YARN FairScheduler will preempt containers running in queues that are exceeding that queue’s Instantaneous FairShare when both of the following are true:

- There are one or more starved applications.

- Preemption is enabled.

Preemption is enabled when both of the following are true:

- Preemption is enabled at the YARN service level via the Enable Fair Scheduler Preemption (yarn.scheduler.fair.preemption) configuration.

- Cluster-level resource utilization exceeds the Fair Scheduler Preemption Utilization Threshold (yarn.scheduler.fair.preemption.cluster-utilization-threshold), which defaults to 80%.

The cluster utilization threshold is an often overlooked prerequisite for preemption. Preempting containers on a cluster with low utilization causes unnecessary container churn, which hurts performance. Cluster-level resource utilization is calculated as the percentage utilization of either vCores or memory, whichever is larger.
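The utilization check described above can be sketched as follows. The function names and the sample numbers are hypothetical; only the 80% default and the "larger of vCores or memory" rule come from the text.

```python
def cluster_utilization(used_vcores, total_vcores, used_memory_gb, total_memory_gb):
    """Utilization is the larger of the vCores and memory utilization ratios."""
    return max(used_vcores / total_vcores, used_memory_gb / total_memory_gb)


def preemption_active(preemption_enabled, utilization, threshold=0.8):
    """Preemption applies only if it is enabled and utilization exceeds the threshold."""
    return preemption_enabled and utilization > threshold


# 6 of 12 vCores (50%) but 21 of 24 GB (87.5%) in use: memory dominates.
busy = cluster_utilization(6, 12, 21, 24)
# 6 of 12 vCores and 12 of 24 GB in use: 50% on both dimensions.
idle = cluster_utilization(6, 12, 12, 24)

print(busy, preemption_active(True, busy))
print(idle, preemption_active(True, idle))
```

In the second case no containers are preempted even though preemption is enabled, because the cluster still has idle capacity to hand out.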

Note that a queue can be marked non-preemptable if needed, for example because it is a high priority queue such that resources from this queue should never be preempted. See the documentation on how to make a queue non-preemptable.

There are two types of starvation:

- FairShare Starvation

- MinShare Starvation

FairShare Starvation

An application is FairShare Starved when all of the following apply:

- The application has an unmet resource demand.

- The application’s resource usage is less than its Instantaneous FairShare.

- The application’s resource usage remains below the FairShare Preemption Threshold of its Instantaneous FairShare for at least the FairShare Preemption Timeout.

FairShare Preemption Threshold defaults to 0.5. FairShare Preemption Timeout is not set by default. It needs to be explicitly set in order for the application to be considered starved. Both parameters can be set either globally or at queue level.

As an example, let’s say FairShare Preemption Timeout is 5 seconds and FairShare Preemption Threshold is 0.8 for a queue. This implies that if preemption is enabled and conditions 1 & 2 above are met, FairScheduler will consider the application starved if the application does not receive at least 80% of its Instantaneous FairShare within 5 seconds.
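The three conditions can be expressed as a small Python predicate. This is a simplified sketch with hypothetical names and a single resource dimension (GB of memory); it is not how the scheduler itself is implemented.

```python
def is_fairshare_starved(usage, demand, fair_share, threshold, timeout_s,
                         seconds_below_threshold):
    """Return True when an application meets all three FairShare starvation conditions."""
    if timeout_s is None:
        # The timeout is not set by default, so starvation is never declared.
        return False
    has_unmet_demand = demand > usage
    below_fair_share = usage < fair_share
    below_threshold_too_long = (
        usage < threshold * fair_share and seconds_below_threshold >= timeout_s
    )
    return has_unmet_demand and below_fair_share and below_threshold_too_long


# Instantaneous FairShare of 10 GB, threshold 0.8, timeout 5 seconds.
starved = is_fairshare_starved(usage=7.0, demand=12.0, fair_share=10.0,
                               threshold=0.8, timeout_s=5, seconds_below_threshold=6)
satisfied = is_fairshare_starved(usage=8.5, demand=12.0, fair_share=10.0,
                                 threshold=0.8, timeout_s=5, seconds_below_threshold=6)
print(starved, satisfied)
```

The second application is still below its full FairShare of 10 GB, but at 8.5 GB it is above the 80% threshold and is therefore not considered starved.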

It is important to note that enabling preemption does not guarantee that an application or queue will get its full Instantaneous FairShare. An application or queue is guaranteed to get enough resources to not be considered starved anymore. This means that resources only up to the FairShare Preemption Threshold of the Instantaneous FairShare are guaranteed after FairShare Preemption Timeout has elapsed. Besides requiring that preemption be enabled, this guarantee is also contingent upon Resource Manager finding containers to preempt as described later in this post.

For a high priority queue, starvation may be configured aggressively by setting FairShare Preemption Threshold value to a high ratio, the FairShare Preemption Timeout to a small duration and flagging it as non-preemptable. See this blog post for several examples on how to use preemption properties to achieve application prioritization.
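For clusters whose FairScheduler allocation file is edited directly, an aggressively configured queue might be generated along these lines. The element names follow the Apache Hadoop FairScheduler allocation file format; the queue name, weight, threshold and timeout values are illustrative assumptions. Check that your release supports allowPreemptionFrom before relying on it.

```python
import xml.etree.ElementTree as ET


def high_priority_queue(name, weight, threshold, timeout_s):
    """Build a <queue> element with aggressive FairShare starvation settings."""
    queue = ET.Element("queue", name=name)
    ET.SubElement(queue, "weight").text = str(weight)
    # High ratio: the queue is starved unless it holds most of its FairShare.
    ET.SubElement(queue, "fairSharePreemptionThreshold").text = str(threshold)
    # Short duration (seconds) before starvation is declared.
    ET.SubElement(queue, "fairSharePreemptionTimeout").text = str(timeout_s)
    # Mark the queue as non-preemptable.
    ET.SubElement(queue, "allowPreemptionFrom").text = "false"
    return queue


# "critical" and the values below are hypothetical examples.
queue = high_priority_queue("critical", weight=2.0, threshold=0.9, timeout_s=5)
print(ET.tostring(queue, encoding="unicode"))
```

The printed element can be pasted under the appropriate parent queue in the allocation file. In Cloudera Manager the same properties are normally set through the resource pool configuration instead.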

Distribution of FairShare to applications within a Queue

Applications running in a queue always evenly split their queue’s Instantaneous FairShare. While FairShare is configured at the queue level, for the purpose of FairShare starvation it is the application-level FairShare that matters. This is significant because one or more applications in a queue may be FairShare-starved even though the queue itself is not FairShare-starved. An application that exceeds its Instantaneous FairShare can have its containers preempted by FairShare-starved applications in any queue, including applications running in its own queue.
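A small Python sketch shows how a queue can be at its FairShare while one of its applications is still short. The function name and the numbers are hypothetical, and a threshold of 0.5 (the default) is assumed.

```python
def application_fair_shares(queue_fair_share, app_usage):
    """Evenly split the queue's Instantaneous FairShare among its applications."""
    per_app = queue_fair_share / len(app_usage)
    return {app: per_app for app in app_usage}


queue_fair_share = 12.0  # GB, hypothetical
usage = {"app1": 8.0, "app2": 3.0, "app3": 1.0}  # GB in use per application
threshold = 0.5  # default FairShare Preemption Threshold

shares = application_fair_shares(queue_fair_share, usage)
# Applications over their share are candidates for preemption.
over_share = [app for app in usage if usage[app] > shares[app]]
# Applications below threshold * share may become starved, provided they also
# have unmet demand and stay below for the preemption timeout.
below_threshold = [app for app in usage if usage[app] < threshold * shares[app]]

print(shares)
print(over_share, below_threshold)
```

Here the queue as a whole uses exactly its 12 GB FairShare, yet app3 sits below half of its 4 GB application-level share while app1 holds twice its share.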

MinShare Starvation

A queue is MinShare starved when all of the following apply:

- One or more applications in the queue have unmet resource demand.

- The queue’s resource usage is less than its MinShare.

- The queue’s resource usage remains below its MinShare for at least the MinShare Preemption Timeout.

The MinShare Preemption Timeout is not set by default. It needs to be explicitly set in order for the queue to be considered starved. The parameter can be set globally or at queue level.
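The queue-level check can be sketched in the same style. The names are hypothetical and a single resource dimension (GB of memory) is assumed.

```python
def is_minshare_starved(queue_usage, min_share, app_unmet_demands, timeout_s,
                        seconds_below_min_share):
    """Return True when a queue meets all three MinShare starvation conditions."""
    if timeout_s is None:
        # The MinShare Preemption Timeout is not set by default.
        return False
    has_unmet_demand = any(demand > 0 for demand in app_unmet_demands)
    below_min_share = queue_usage < min_share
    waited_long_enough = seconds_below_min_share >= timeout_s
    return has_unmet_demand and below_min_share and waited_long_enough


# Queue with a 10 GB MinShare, currently using 4 GB, timeout of 30 seconds.
starved = is_minshare_starved(4.0, 10.0, [3.0, 2.0], timeout_s=30,
                              seconds_below_min_share=45)
no_timeout = is_minshare_starved(4.0, 10.0, [3.0, 2.0], timeout_s=None,
                                 seconds_below_min_share=45)
print(starved, no_timeout)
```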

Distribution of MinShare to applications within a Queue

MinShare can only be specified at the queue level. Unlike FairShare, an application cannot be MinShare-starved.

Applications within a MinShare-starved queue are ordered by demand when distributing MinShare-starved resources. This can result in some applications in a queue continuing to starve even after all minimum queue resources have been distributed. As an example, let’s say the minimum memory of a queue is configured and the queue is 6G below its minimum. There are 4 applications App1, App2, App3 and App4 running in the queue with unmet memory demands of 3G, 2G, 1G, and 0.5G respectively. In this scenario, App1 will get 3G from MinShare-starved resources, App2 will get 2G, App3 will get 1G, but App4 will get nothing because resources are distributed in order of demand.
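The example above can be reproduced with a short Python sketch of demand-ordered distribution. The function name is hypothetical; the numbers are those from the example.

```python
def distribute_by_demand(available, unmet_demand):
    """Hand out resources to applications in descending order of unmet demand."""
    allocation = {}
    ordered = sorted(unmet_demand.items(), key=lambda item: item[1], reverse=True)
    for app, demand in ordered:
        granted = min(demand, available)
        allocation[app] = granted
        available -= granted
    return allocation


# The queue is 6G below its MinShare; unmet demands are in GB.
unmet = {"App1": 3.0, "App2": 2.0, "App3": 1.0, "App4": 0.5}
allocation = distribute_by_demand(6.0, unmet)
print(allocation)
```

App4 receives nothing because the 6G is exhausted by the three applications with larger demands.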

Containers to Preempt

An application’s resource request may be satisfied by preempting containers running on one or more nodes. When containers on multiple nodes can satisfy the request, the node that requires preempting the fewest Application Master containers is preferred. In addition, a container can only be preempted if both of the following are true:

- Container’s application queue is configured to be preemptable.

- Killing the container will not make the application’s resources fall below its FairShare. In other words, an application that would end up with fewer resources than its FairShare after preemption cannot be preempted.
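The two eligibility rules and the node preference can be sketched as follows. Both functions are hypothetical simplifications that use a single resource dimension (GB of memory) and take the number of Application Master containers per node as given.

```python
def can_preempt_container(container_size, app_usage, app_fair_share, queue_preemptable):
    """A container is eligible only if its queue is preemptable and the owning
    application stays at or above its FairShare after losing the container."""
    if not queue_preemptable:
        return False
    return app_usage - container_size >= app_fair_share


def pick_node(am_containers_to_preempt):
    """Prefer the node where the fewest Application Master containers would be
    preempted. The argument maps node name to that count."""
    return min(am_containers_to_preempt, key=am_containers_to_preempt.get)


# Application using 10 GB with a FairShare of 8 GB.
small_ok = can_preempt_container(2.0, app_usage=10.0, app_fair_share=8.0,
                                 queue_preemptable=True)
large_blocked = can_preempt_container(3.0, app_usage=10.0, app_fair_share=8.0,
                                      queue_preemptable=True)
node = pick_node({"node1": 2, "node2": 0, "node3": 1})
print(small_ok, large_blocked, node)
```

Preempting the 3 GB container would leave the application at 7 GB, below its 8 GB FairShare, so that container is not eligible.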

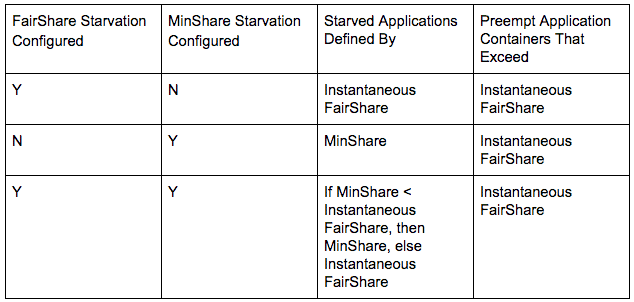

MinShare is not used when deciding what container to preempt. MinShare is only used to configure starvation. This is an important point and worth repeating. Regardless of whether FairShare or MinShare starvation is configured, only applications that exceed FairShare can be preempted, as shown in the following table:

Best Practice for Configuring Starvation

Configuring FairShare starvation is recommended. In general, configuring MinShare Starvation or minimum resources is not recommended since it adds significant complexity (and confusion) when debugging preemption issues without much added benefit:

- When MinShare starvation occurs, containers in queues exceeding MinShare but not exceeding FairShare cannot be preempted.

- MinShare-starved resources are not distributed equally to applications in a queue, but in demand order. This behavior is different from how FairShare-starved resources are distributed.

- If a minimum resource value in a queue is greater than its FairShare value, the minimum resource value becomes the queue’s FairShare. This effectively reduces the FairShare of other queues.

Queue weights configure the same percentage weight for both vCores and memory in a given queue. Some queues may need to be configured with different weights for vCores and memory. Such a scenario may be a valid reason to configure MinShare starvation. However, you should ensure that the sum of all configured minimum resources is not greater than the total resources in the cluster. Otherwise, starvation and preemption behavior will be unpredictable. For example, some queues may always be MinShare-starved but never preempt any container, since other applications may always be under their FairShare.
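A quick sanity check for the sum of configured minimum resources might look like this. The function name, queue names and values are hypothetical.

```python
def oversubscribed_resources(min_resources, cluster):
    """Return, per resource, by how much the summed minimums exceed the cluster."""
    excess = {}
    for resource, capacity in cluster.items():
        requested = sum(queue.get(resource, 0) for queue in min_resources.values())
        if requested > capacity:
            excess[resource] = requested - capacity
    return excess


cluster = {"vcores": 12, "memory_gb": 24}
# Hypothetical per-queue minimum resources.
min_resources = {
    "tenant1": {"vcores": 4, "memory_gb": 8},
    "tenant2": {"vcores": 6, "memory_gb": 12},
    "tenant3": {"vcores": 4, "memory_gb": 4},
}

excess = oversubscribed_resources(min_resources, cluster)
print(excess)
```

An empty result means the configured minimums fit within the cluster. In this example the vCores minimums exceed the cluster by 2, so the configuration should be revisited.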

Preemption Overhaul

A number of improvements and critical bug fixes were made recently as part of a preemption code overhaul. These improvements are available in CDH 5.11.0 and later releases:

- Resources are preempted only if the resulting free space matches a starving application’s request. This ensures none of the preempted containers go unused.

- Application Master containers are not preempted so long as there are other containers to preempt.

No preemption related configurations were changed in the overhaul, except for yarn.scheduler.fair.preemptionInterval, which is no longer used. The behavior around choosing a container to preempt has changed. Previously, the queues most over their FairShare were preempted first. After the overhaul, containers can be preempted from any application that is over its FairShare.

Known Issues

When an application is starved, resources can be preempted from sibling applications or from applications in different queues as described earlier. However, there is no guarantee that the starved application will receive the preempted containers. It is possible that another starved application may receive those containers instead. This issue is fixed in YARN-6432 and YARN-6895, both of which will be available in CDH 6.0 and later releases.

Tools for Preemption Tuning

Examining FairShare and Queue Metrics

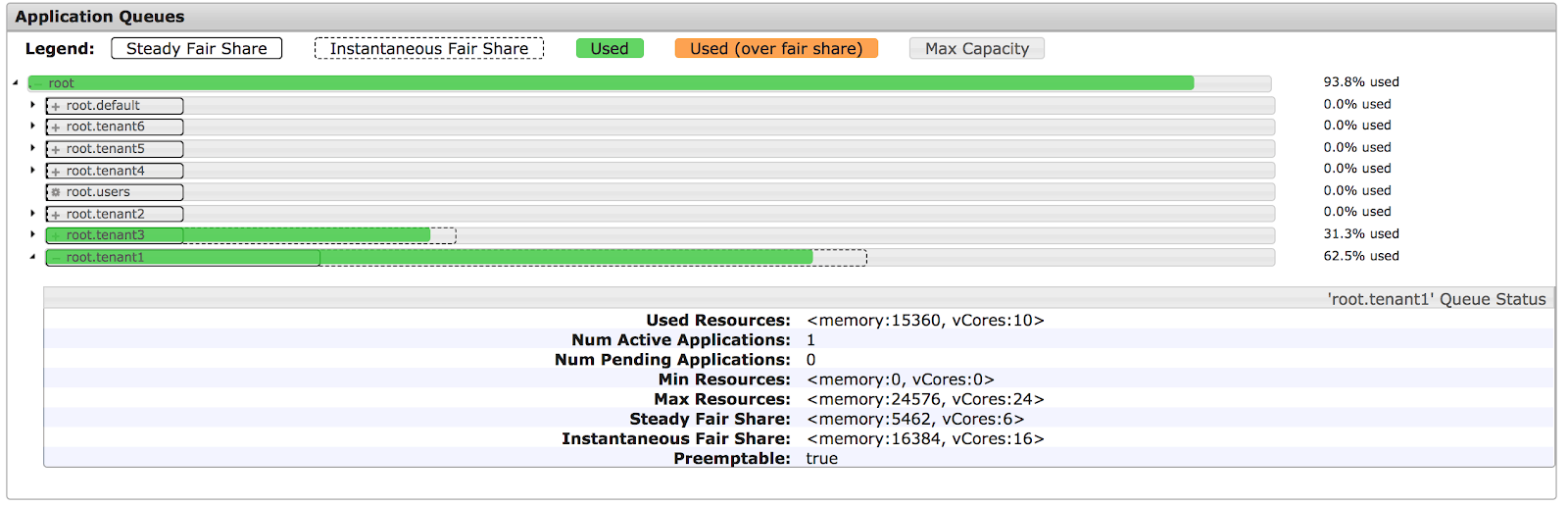

In Cloudera Manager, use the Resource Pools tab under YARN service to observe pool Steady and Instantaneous FairShare values as well as actual resource allocations.

Cloudera Manager also monitors other metrics related to queues:

- On the Cloudera Manager Reports page, click Clusters > ClusterName > Reports > Historical Applications By YARN Pool.

- Use tsquery to retrieve and plot YARN pool metrics.

Current applications, queues, and FairShares can also be examined through the Scheduler section of ResourceManager’s web interface. Please note that the usage bars as displayed in the ResourceManager web interface only show the memory usage and not the vCores usage.

Cluster Utilization Report

The Preemption Tuning tab in the Cloudera Manager Cluster Utilization Report displays plots for average Steady FairShare, Instantaneous FairShare and allocations for a selected period of time in the past whenever the YARN queue was facing resource contention. This information may be used to fine tune preemption parameters. For example, if the allocated resources are less than the FairShare during contention, consider making preemption more aggressive. See additional information on the Preemption Tuning tab here and find a video tutorial on Cluster Utilization Reports here.

We hope that this post clarifies how preemption works in YARN FairScheduler. If you have any questions, feel free to comment here, or on the community forum.

Thanks to Wilfred Spiegelenburg, Maya Wexler, Haibo Chen, Gergo Repas, Miklos Szegedi, Shriya Gupta, Jake Miller and Tristan Stevens for their reviews and comments on this post.