This blog will summarise the security architecture of a CDP Private Cloud Base cluster. The architecture reflects the four pillars of security engineering best practice: Perimeter, Data, Access and Visibility. The release of CDP Private Cloud Base has seen a number of significant enhancements to the security architecture, including:

- Apache Ranger for security policy management

- Updated Ranger Key Management service

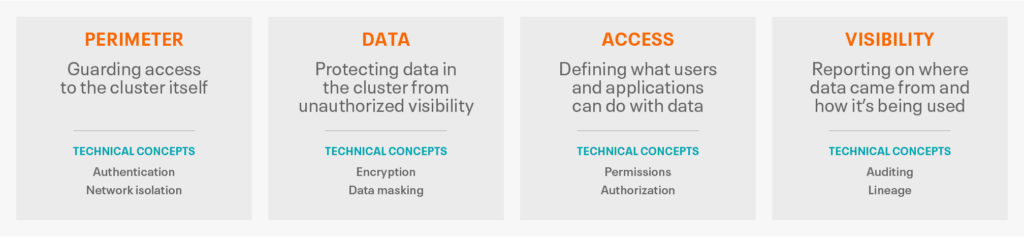

Before diving into the technologies it is worth becoming familiar with the key security principle of a layered approach that facilitates defense in depth. Each layer is defined as follows:

These multiple layers of security are applied to ensure the confidentiality, integrity and availability of data and to meet the most robust of regulatory requirements. CDP Private Cloud Base offers 3 levels of security, above a non-secure baseline, that implement these features:

| Level | Security | Characteristics |
| --- | --- | --- |
| 0 | Non-secure | No security configured. Non-secure clusters should never be used in production environments because they are vulnerable to any and all attacks and exploits. |
| 1 | Minimal | Configured for authentication, authorization, and auditing. Authentication is first configured to ensure that users and services can access the cluster only after proving their identities. Next, authorization mechanisms are applied to assign privileges to users and user groups. Auditing procedures keep track of who accesses the cluster (and how). |
| 2 | More | Sensitive data is encrypted. Key management systems handle encryption keys. Auditing has been set up for data in the metastore. System metadata is reviewed and updated regularly. Ideally, the cluster has been set up so that lineage for any data object can be traced (data governance). |
| 3 | Most | The secure cluster is one in which all data, both data-at-rest and data-in-transit, is encrypted and the key management system is fault-tolerant. Auditing mechanisms comply with industry, government, and regulatory standards (PCI, HIPAA, NIST, for example), and extend from the cluster to the other systems that integrate with it. Cluster administrators are well-trained, security procedures have been certified by an expert, and the cluster can pass technical review. |
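To make the progression between levels concrete, here is a minimal Python sketch that maps a set of cluster characteristics onto the levels in the table. The `ClusterPosture` class and its field names are hypothetical, invented for this illustration, and the check deliberately ignores the organisational criteria of level 3 (trained administrators, certified procedures), which cannot be reduced to a flag.

```python
from dataclasses import dataclass


@dataclass
class ClusterPosture:
    """Hypothetical summary of what has been configured on a cluster."""
    authentication: bool = False
    authorization: bool = False
    auditing: bool = False
    sensitive_data_encrypted: bool = False
    key_management: bool = False
    all_data_encrypted_at_rest: bool = False
    all_data_encrypted_in_transit: bool = False
    fault_tolerant_key_management: bool = False


def security_level(p: ClusterPosture) -> int:
    """Return the highest level (0-3) whose technical criteria are all met."""
    # Level 1: authentication, authorization and auditing are configured.
    if not (p.authentication and p.authorization and p.auditing):
        return 0
    # Level 2: sensitive data is encrypted and keys are managed.
    if not (p.sensitive_data_encrypted and p.key_management):
        return 1
    # Level 3: everything is encrypted and key management is fault-tolerant.
    if not (
        p.all_data_encrypted_at_rest
        and p.all_data_encrypted_in_transit
        and p.fault_tolerant_key_management
    ):
        return 2
    return 3
```

Each level builds on the previous one, so a cluster that encrypts data but has no authentication still evaluates to level 0 in this sketch.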

For the purposes of this document we will focus on level 3, the most secure.

Security Architecture Improvements

To comply with the regulatory standards of a level 3 implementation, customers will create network topologies that ensure only privileged administrators are able to access core CDP services. Applications, analysts and developers are limited to appropriate gateway services such as Hue and to appropriate management and monitoring web interfaces. The addition of Apache Knox significantly simplifies the provisioning of secure access, with users benefiting from robust single sign-on. Apache Ranger consolidates security policy management with tag-based access controls, robust auditing and integration with existing corporate directories.

Logical Architecture

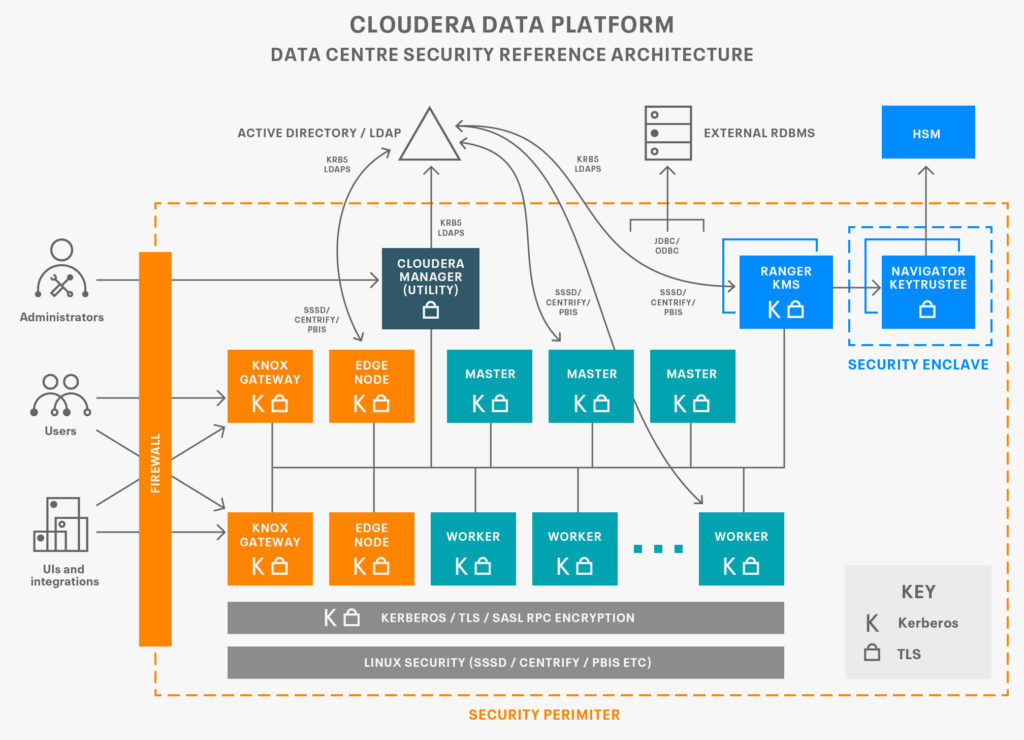

The cluster architecture can be split across a number of zones as illustrated in the following diagram:

Outside the perimeter are source data and applications. The gateway zones are where administrators and applications interact with the core cluster zones, where the work is performed. These are supported by the data tier, where configuration and key material are maintained. Services in each zone use a combination of Kerberos and Transport Layer Security (TLS) to authenticate connections and API calls between the respective host roles; this allows authorization policies to be enforced and audit events to be captured. Cloudera Manager will generate credentials either directly against a local KDC or via an intermediary in the corporate directory. Similarly, Cloudera Manager Auto TLS enables per-host certificates to be generated and signed by established certificate authorities. If necessary, authority can be delegated to Cloudera Manager to sign the certificates in order to simplify the implementation. The following sections go into more detail as to how each aspect can be implemented.

Authentication

Typically a cluster will be integrated with an existing corporate directory, simplifying credentials management and aligning with well-established HR procedures for managing and maintaining both user and service accounts. Kerberos is used to authenticate all service accounts within the cluster, with credentials generated in the corporate directory (IDM/AD) and distributed by Cloudera Manager. To ensure that these procedures are secured, it is important that all interactions between CM, the corporate directory and the cluster hosts are encrypted using TLS. Signed certificates are distributed to each cluster host, enabling service roles to mutually authenticate. This includes the Cloudera Agent process, which performs a TLS handshake with the Cloudera Manager server so that configuration changes, such as the generation and distribution of Kerberos credentials, are undertaken across an encrypted channel. In addition to the CM agent, all the cluster service roles, such as Impala Daemons, HDFS worker roles and management roles, typically use TLS.
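To illustrate what mutual authentication over TLS means in general terms, here is a minimal sketch using Python's standard `ssl` module. It is not how Cloudera Manager or the agent implement their handshake; the function name and the file path parameters are hypothetical, and the files would be the host certificate, its private key and the CA truststore distributed to that host.

```python
import ssl


def make_mutual_tls_server_context(cert_file=None, key_file=None, ca_file=None):
    """Build a server-side TLS context that also demands a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED on a server context means the peer must present a
    # certificate signed by a trusted CA: this is the "mutual" part.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file:
        # The host's own signed certificate and private key (hypothetical paths).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        # The CA certificates used to verify the connecting peer.
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

A client would build the mirror image with `ssl.PROTOCOL_TLS_CLIENT`, loading its own certificate so that both ends prove their identity before any configuration or credentials are exchanged.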

Kerberos

With Kerberos enabled, all cluster roles are able to authenticate each other provided they have a valid Kerberos ticket. The authentication tickets are issued by the KDC upon presentation of valid credentials. The KDC is typically a local Active Directory Domain Controller, FreeIPA, or an MIT Kerberos server with a trust established with the corporate Kerberos infrastructure. Cloudera Manager generates and distributes these credentials to each of the service roles using an elevated privilege that is securely maintained within its database. Typically the administration privilege will enable the creation and deletion of Kerberos principals within a specific organisational unit (OU) within the corporate directory. Good practice is to first enable TLS between Cloudera Manager and the agents in order to ensure the Kerberos keytab files are transported over an encrypted connection.
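Service principals follow the standard Kerberos naming form primary/instance@REALM, where for a cluster role the instance is normally the host name. The following sketch splits a principal name into those parts; the `Principal` type, the `parse_principal` helper and the example names are illustrative and not part of any Cloudera tooling.

```python
from typing import NamedTuple, Optional


class Principal(NamedTuple):
    primary: str             # service or user name, e.g. "hdfs"
    instance: Optional[str]  # usually the host name for service principals
    realm: str               # Kerberos realm, e.g. "EXAMPLE.COM"


def parse_principal(name: str) -> Principal:
    """Split 'primary[/instance]@REALM' into its components."""
    local, sep, realm = name.rpartition("@")
    if not sep or not local or not realm:
        raise ValueError(f"not a fully qualified principal: {name!r}")
    primary, _, instance = local.partition("/")
    if not primary:
        raise ValueError(f"missing primary in principal: {name!r}")
    return Principal(primary, instance or None, realm)
```

For example, `parse_principal("hdfs/worker1.example.com@EXAMPLE.COM")` yields the service name, the host it is bound to and the realm, which is why each role instance on each host needs its own credential.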

Encryption

Encryption in transit is enabled using TLS, with two modes of deployment: manual or Auto TLS. For manual TLS, customers use their own scripts to generate and deploy their own certificates to the cluster hosts; the locations used are then configured in Cloudera Manager so that the cluster services can use them.

Typically, security artifacts such as certificates will be stored on the local filesystem under /opt/cloudera/security. The cloudera-deploy Ansible automation uses this method.

Auto TLS enables Cloudera Manager to act as a certificate authority, either standalone or delegated by an existing corporate authority. CM can then generate, sign and deploy the signed certificates around the cluster along with any associated truststores. Once in place, the certificates are used to encrypt network traffic between the cluster services. There are three primary communication channels: HDFS Transparent Encryption, Data Transfer and Remote Procedure Calls, and communications with the various user interfaces and APIs.

Encryption At Rest

In order to store sensitive data securely it is vital to ensure that data is encrypted at rest whilst also being available for processing by appropriately privileged users and services that are granted the ability to decrypt. A secure CDP cluster will feature full transparent HDFS encryption, often with separate encryption zones for the various storage tenants and use cases. We caution against encrypting the entire HDFS; rather, encrypt only those directory hierarchies that require it.
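As a rough sketch of the per-tenant approach, the Python below builds the standard Hadoop commands (`hadoop key create` and `hdfs crypto -createZone`) for a set of encryption zones, one key per zone. The zone paths and key names are hypothetical, and the script only constructs the commands; it does not run them. Note that an encryption zone can only be created on an empty directory.

```python
import shlex


def encryption_zone_commands(zones):
    """Return the CLI commands needed to create one encryption zone per entry.

    `zones` maps an HDFS directory to the name of the key protecting it.
    """
    commands = []
    for path, key_name in zones.items():
        # Create the zone key in the KMS.
        commands.append(["hadoop", "key", "create", key_name])
        # Create the (empty) directory that will become the zone root.
        commands.append(["hdfs", "dfs", "-mkdir", "-p", path])
        # Turn the directory into an encryption zone using that key.
        commands.append(
            ["hdfs", "crypto", "-createZone", "-keyName", key_name, "-path", path]
        )
    return commands


if __name__ == "__main__":
    # Hypothetical tenants: only these hierarchies are encrypted, not all of HDFS.
    tenants = {"/data/finance": "finance_key", "/data/hr": "hr_key"}
    for cmd in encryption_zone_commands(tenants):
        print(" ".join(shlex.quote(part) for part in cmd))
```

Keeping a separate key per zone means access to one tenant's key does not expose another tenant's data.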

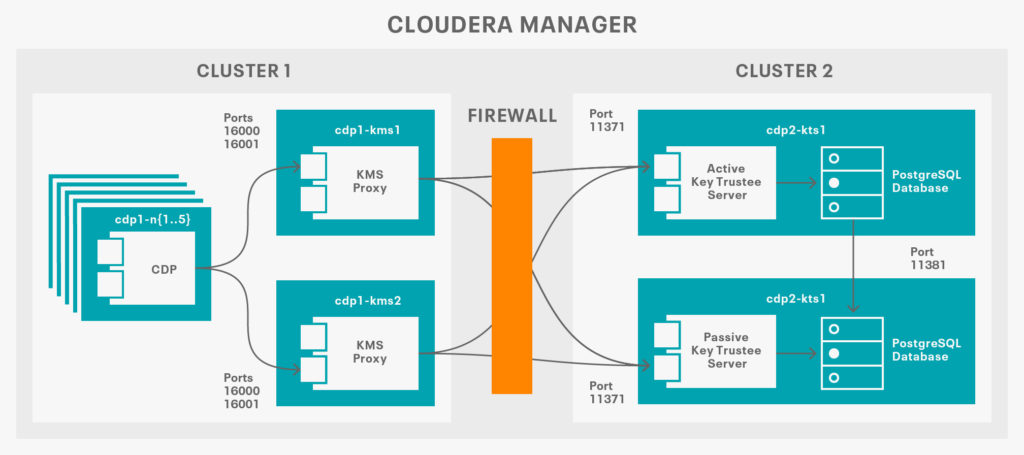

As well as HDFS, other key local storage locations such as YARN and Impala scratch directories and log files can be similarly encrypted using block encryption. Both HDFS and the local filesystem can be integrated with a secure Key Trustee Service, typically deployed into a separate cluster that assumes responsibility for key management. This ensures separation of duties between the cluster administrators and the security administrators who are responsible for the encryption keys. Furthermore, Key Trustee client-side encryption provides defence in depth for vulnerabilities such as Apache Log4j2 CVE-2021-44228.

Logical Architecture

Ranger KMS Service Overview

The Ranger KMS service consolidates the Ranger KMS service and Key Trustee KMS into a single service that can use either the Ranger KMS DB or the Key Trustee Service (KTS) as its backing key store. Both implementations support HSM integration, with KTS backing providing full separation of duties and a broader range of HSMs, albeit at the cost of additional complexity and hardware. It is preferable to support RKMS + KTS in order to simplify operations and run at scale. Ranger KMS supports:

- Key management: provides the ability to create, update or delete keys using the Web UI or REST APIs.

- Access control: provides the ability to manage access control policies within Ranger KMS. The access policies control permissions to generate or manage keys, adding another layer of security for data encrypted in HDFS.

- Audit: Ranger provides a full audit trace of all actions performed by Ranger KMS.

- All policies are maintained by the Ranger service.
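As an illustration of key management over REST, the sketch below builds (but does not send) a create-key request. It assumes the Ranger KMS endpoint follows the Apache Hadoop KMS REST API, where keys are created with a POST to /kms/v1/keys. The host name, port and key name are hypothetical, and a real call would also need Kerberos (SPNEGO) authentication, which is omitted here.

```python
import json
import urllib.request


def build_create_key_request(base_url, name, cipher="AES/CTR/NoPadding", length=256):
    """Build a POST request that asks the KMS to create a new key.

    Assumes the Hadoop KMS REST layout (<base_url>/kms/v1/keys).
    """
    body = json.dumps({"name": name, "cipher": cipher, "length": length})
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/kms/v1/keys",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical endpoint and key name, printed for inspection only.
    req = build_create_key_request("https://kms.example.com:9494", "finance_key")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

Whether such a request succeeds is decided by the Ranger KMS access policies described above, and the attempt is recorded in the audit trail either way.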

Key Security Services

The Cloudera Security and Governance services form part of the SDX Layer of the cluster that can be deployed and managed with Cloudera Manager. These are described in more detail in the following sections.

Apache Ranger

Apache Ranger is a framework to enable, monitor and manage comprehensive data security across the platform. It is used for creating and managing policies to access data and other related objects for all services in the CDP stack and features a number of improvements over earlier versions:

- Prior to CDP, Ranger only supported users and groups in policies. In CDP, Ranger has also added the ‘role’ functionality that existed in Apache Sentry previously. A role is a collection of rules for accessing a given object. Ranger gives you the option to assign these roles to specific groups. Ranger policies can then be set for roles or directly on groups or individual users, and then enforced consistently across all the CDP services.

- One of the key changes introduced in CDP for former CDH users is the replacement of Apache Sentry with Apache Ranger, which offers a richer range of plugins for YARN, Kafka, Hive, HDFS and Solr services. HDP customers will benefit from the new Apache Ranger RMS feature, which synchronises Hive table-level authorisations with the HDFS file system, a capability previously available in Apache Sentry.
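The relationship between users, groups and roles can be sketched in a few lines of Python. This is a simplified model written for this post, not Ranger's actual policy engine: the `PolicyItem` class, the `role_groups` mapping and all names are hypothetical, and real policies also support deny rules, exceptions and conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyItem:
    """One allow rule: these access types, granted to these principals."""
    accesses: frozenset
    users: frozenset = frozenset()
    groups: frozenset = frozenset()
    roles: frozenset = frozenset()


def roles_for(user_groups, role_groups):
    """Return the roles held through group membership."""
    return {
        role for role, groups in role_groups.items()
        if set(groups) & set(user_groups)
    }


def is_allowed(item, access, user, user_groups, role_groups):
    """Check one policy item against a request from `user`."""
    if access not in item.accesses:
        return False
    return (
        user in item.users                                      # granted directly
        or bool(set(user_groups) & item.groups)                 # granted via a group
        or bool(roles_for(user_groups, role_groups) & item.roles)  # via a role
    )
```

Granting to a role rather than to individual users means that membership changes in the corporate directory take effect without anyone editing the policy.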

More broadly Apache Ranger provides:

- Centralized Administration interface and API

- Standardized authentication method across all components

- Multiple authorization methods, such as role-based access control and attribute-based access control

- Centralized auditing of admin and audit actions

Apache Ranger features a number of components:

- Administration portal UI and API

- Ranger Plugins, lightweight Java plugins for each component that pull policies from the central admin service and store them locally. Each user request is evaluated against the policy; the request is captured and shipped as a separate audit event to the Ranger audit server.

- User Group Sync, which synchronizes users and group memberships from UNIX and LDAP; these are stored by the portal for policy definition

Customers will typically deploy SSSD or a similar technology in order to resolve a user’s group memberships at the OS level. The Ranger Audit server indexes the audit events using the cluster’s Solr Infrastructure service to facilitate analysis and reporting.
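The plugin flow described above (pull policies, cache them locally, evaluate each request, emit an audit event) can be sketched as follows. This is a toy model written in Python for readability; the real plugins are Java, and the `PluginSketch` class, the policy dictionary layout and the deny-by-default behaviour are assumptions made for this example.

```python
import time


class PluginSketch:
    """Toy model of a policy-enforcing plugin with a local policy cache."""

    def __init__(self, fetch_policies, audit_sink):
        self._fetch = fetch_policies  # callable standing in for the admin service
        self._audit = audit_sink      # list standing in for the audit server
        self._policies = []           # local cache used for every decision

    def refresh(self):
        """Pull the latest policies; decisions keep using the cache in between."""
        self._policies = list(self._fetch())

    def check(self, user, resource, access):
        """Evaluate a request locally and record an audit event for it."""
        allowed = any(
            p["resource"] == resource
            and access in p["accesses"]
            and user in p["users"]
            for p in self._policies
        )
        self._audit.append({
            "time": time.time(),
            "user": user,
            "resource": resource,
            "access": access,
            "allowed": allowed,
        })
        return allowed
```

Because decisions are made against the local cache, the service keeps enforcing the last known policies even if the central admin service is briefly unreachable, and both allowed and denied requests end up in the audit trail.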

Note: Take care with the Hive privilege synchronizer. This runs within Hive and will check permissions every 5 minutes for every Hive object, which leads to larger Hive memory requirements and poor Hive metastore performance where there are many thousands of Hive objects. The feature is only required where customers are using Beeline or SQL-based statements to manipulate and review grants. Good practice would be to at least slow down the rate of synchronization to check every hour, hive.privilege.synchronizer.interval=3600 (default is 720), or to disable it completely and use the Ranger UI to manage policies.

Security Zones

Security zones enable you to arrange your Ranger resource and tag-based policies into specific groups so that administration can be delegated. For example, in a specific security zone:

- Security zone “finance” includes all content in a “finance” Hive database.

- Users and groups can be designated as administrators in the security zone.

- Users are allowed to set up policies only in security zones in which they are administrators.

- Policies defined in a security zone are applicable only for resources of that zone.

- A zone can be extended to include resources from multiple services such as HDFS, Hive, HBase, Kafka, etc., allowing administrators of a zone to set up policies for resources owned by their organization across multiple services.
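The zone rules listed above can be modelled in a short Python sketch. The `SecurityZone` class, the prefix-based resource matching and all names are hypothetical simplifications; Ranger's own resource matching is considerably richer.

```python
from dataclasses import dataclass, field


@dataclass
class SecurityZone:
    name: str
    admins: set       # users allowed to manage policies in this zone
    resources: dict   # service name -> list of resource prefixes in the zone
    policies: list = field(default_factory=list)


def zone_for(zones, service, resource):
    """Return the zone that owns a resource, or None if it is unzoned."""
    for zone in zones:
        for prefix in zone.resources.get(service, []):
            if resource.startswith(prefix):
                return zone
    return None


def add_policy(zone, user, policy):
    """Only administrators of a zone may set up policies inside it."""
    if user not in zone.admins:
        raise PermissionError(f"{user} is not an administrator of zone {zone.name}")
    zone.policies.append(policy)


# A hypothetical "finance" zone spanning two services.
finance = SecurityZone(
    name="finance",
    admins={"fin_admin"},
    resources={"hive": ["finance."], "hdfs": ["/data/finance/"]},
)
```

With this model, `zone_for([finance], "hive", "finance.invoices")` resolves to the finance zone, so only policies defined in that zone, by its own administrators, apply to the table.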

Apache Atlas

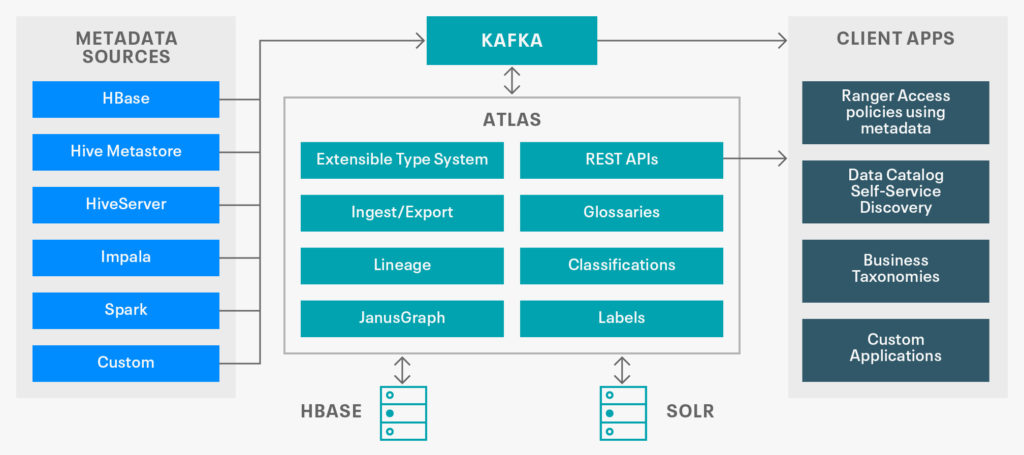

Atlas is a scalable and extensible set of core foundational governance services – enabling enterprises to effectively and efficiently meet their compliance requirements within CDP and allows integration with the whole enterprise data ecosystem.

Organizations can build a catalog of their data assets, classify and govern these assets and provide collaboration capabilities around these data assets for data scientists, analysts and the data governance team. Logically, Apache Atlas is laid out as follows:

Apache Knox

Apache Knox simplifies access to the cluster interfaces by providing single sign-on for CDP web UIs and APIs, acting as a proxy for all remote access events. Many of these APIs are useful for monitoring and issuing on-the-fly configuration changes.

As a stateless reverse proxy framework, Knox can be deployed as multiple instances that route requests to CDP’s REST APIs. It scales linearly by adding more Knox nodes as the load increases. A load balancer can route requests to multiple Knox instances.

Knox also intercepts REST/HTTP calls and provides authentication, authorization, audit, URL rewriting, web vulnerability removal and other security services through a series of extensible interceptor pipelines.

Each CDP cluster that is protected by Knox has its set of REST APIs represented by a single cluster specific application context path. This allows the Knox Gateway to both protect multiple clusters and present the REST API consumer with a single endpoint for access to all of the services required, across the multiple clusters.
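The single-endpoint idea is easiest to see in the URL itself. The helper below builds a gateway URL of the form https://host:port/gateway-path/topology/service-path. The port 8443 and the path "gateway" are the Apache Knox defaults and may differ in a given deployment; the host name and the `knox_url` function are hypothetical.

```python
def knox_url(gateway_host, topology, service_path, port=8443, gateway_path="gateway"):
    """Build the user-facing URL for a service proxied through Knox.

    The topology segment is the cluster-specific application context path,
    so one gateway can front several clusters.
    """
    return (
        f"https://{gateway_host}:{port}/{gateway_path}/"
        f"{topology}/{service_path.lstrip('/')}"
    )


if __name__ == "__main__":
    # List a directory over WebHDFS through the API topology.
    print(knox_url("knox.example.com", "cdp-proxy-api", "webhdfs/v1/tmp?op=LISTSTATUS"))
```

The API consumer only ever needs to know the gateway host and the topology name; the internal host and port of the service stay hidden behind the proxy.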

In CDP, certain providers (sso, pam, admin, manager) and topologies (cdp-proxy, cdp-proxy-api) are pre-configured and are mostly integrated into the configuration UI of Cloudera Manager. Furthermore, CDP ships with a useful pre-configured home page for users.

The cluster definition is specified within the topology deployment descriptor and provides the Knox Gateway with the layout of the cluster for purposes of routing and translation between user-facing URLs and cluster internals.

Simply by writing a topology deployment descriptor to the topologies directory of the Knox installation, a new CDP cluster definition is processed, the policy enforcement providers are configured and the application context path is made available for use by API consumers. CDP Private Cloud Base comes with a preconfigured topology for all of the various cluster services which customers can extend for their environments.
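To give a feel for what a topology deployment descriptor contains, here is a sketch that generates a minimal one in the Apache Knox XML format: a gateway section listing the enforcement providers, followed by one service entry per backend. The service URL and the choice of the ShiroProvider for authentication are assumptions for the example. In CDP the shipped topologies are managed through Cloudera Manager, so treat this as an illustration of the format rather than a deployment recipe.

```python
import xml.etree.ElementTree as ET


def build_topology(services, auth_provider="ShiroProvider"):
    """Return a minimal Knox topology descriptor as an XML string.

    `services` maps a Knox service role (e.g. "WEBHDFS") to its internal URL.
    """
    topology = ET.Element("topology")

    # Policy enforcement providers live under <gateway>.
    gateway = ET.SubElement(topology, "gateway")
    provider = ET.SubElement(gateway, "provider")
    ET.SubElement(provider, "role").text = "authentication"
    ET.SubElement(provider, "name").text = auth_provider
    ET.SubElement(provider, "enabled").text = "true"

    # Each <service> maps a role to the cluster-internal endpoint.
    for role, url in services.items():
        service = ET.SubElement(topology, "service")
        ET.SubElement(service, "role").text = role
        ET.SubElement(service, "url").text = url

    return ET.tostring(topology, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical internal endpoint for the NameNode's WebHDFS interface.
    print(build_topology({"WEBHDFS": "https://nn.example.com:9871/webhdfs"}))
```

The file name given to the descriptor in the topologies directory becomes the topology segment of the gateway URL.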

Summary

We’ve summarized the key security features of a CDP Private Cloud Base cluster. Subsequent posts will go into more detail, with reference implementation examples of all of the key features. They will:

- Integrate with a corporate directory

- Create and secure a Hive table:

  - Describe the Ranger policy evaluation flow

  - Provide an example of how to enable and protect a specific Hive object for a group or users via a role

  - Describe a tag-based policy that can be applied to a Hive table and the underlying HDFS directories

- Describe the steps to map a Ranger Audit Group and roles

To find out more about Cloudera Data Platform Security visit https://docs.cloudera.com/cdp-private-cloud-base/7.1.7/cdp-security-overview/topics/security-data-lake-security.html