Learn about the design decisions behind HBase’s new support for MOBs.

Apache HBase is a distributed, scalable, performant, consistent key-value database that can store a variety of binary data types. It excels at storing many relatively small values (<10KB) and providing low-latency reads and writes.

However, there is a growing demand for storing documents, images, and other moderately sized objects (MOBs) in HBase while maintaining low latency for reads and writes. One such use case is a bank that stores signed and scanned customer documents. As another example, transport agencies may want to store snapshots of traffic and moving cars. These MOBs are generally write-once.

Unfortunately, performance can degrade when many moderately sized values (100KB to 10MB) are stored, because of the ever-increasing I/O pressure created by compactions. Consider the case where 1TB of photos from traffic cameras, each 1MB in size, is stored in HBase daily. Parts of the store files are compacted multiple times by minor compactions, and eventually the data is rewritten by major compactions. As these MOBs accumulate, the I/O generated by compactions slows the compactions themselves, which in turn blocks memstore flushing and eventually blocks updates. A large MOB store also triggers frequent region splits, reducing the availability of the affected regions.

To address these drawbacks, Cloudera and Intel engineers have implemented MOB support in an HBase branch (HBASE-11339: HBase MOB). This branch will be merged to the master in HBase 1.1 or 1.2, and is already present and supported in CDH 5.4.x.

Operations on MOBs are usually write-intensive, with rare updates or deletes and relatively infrequent reads. MOBs are usually stored together with their metadata. Metadata relating to MOBs may include, for instance, car number, speed, and color. Metadata are very small relative to the MOBs. Metadata are usually accessed for analysis, while MOBs are usually randomly accessed only when they are explicitly requested with row keys.

Users want to read and write MOBs in HBase with low latency through the same APIs, and they want strong consistency, security, snapshots, HBase replication between clusters, and so on. To meet these goals, MOBs were moved out of the main I/O path of HBase and into a new I/O path.

In this post, you will learn about this design approach, and why it was selected.

Possible Approaches

There were a few possible approaches to this problem. The first approach we considered was to store MOBs in HBase with tuned split and compaction policies: a bigger desiredMaxFileSize decreases the frequency of region splits, and fewer or no compactions avoid the write amplification penalty. That approach would improve write latency and throughput considerably. However, as the number of store files grows, there would be too many open readers in a single store, possibly more than the OS allows. As a result, a lot of memory would be consumed and read performance would degrade.

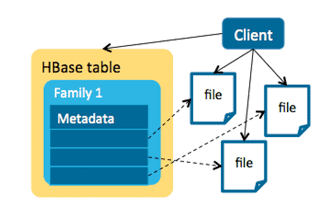

Another approach was to use an HBase + HDFS model to store the metadata and MOBs separately. In this model, each file is linked from an entry in HBase. This is a client-side solution, and the transaction is controlled by the client, so no HBase-side memory is consumed by MOBs. This approach would work for objects larger than 50MB, but for MOBs, the many small files lead to inefficient HDFS usage, since the default block size in HDFS is 128MB.

For example, let’s say a NameNode has 48GB of memory and each file is 100KB with three replicas. Each file takes more than 300 bytes in memory, so a NameNode with 48GB memory can hold about 160 million files, which would limit us to only storing 16TB MOB files in total.
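The arithmetic behind that estimate can be checked with a short sketch. It uses only the figures quoted above and assumes decimal units (1GB = 10^9 bytes), which is what the rounded numbers imply; the variable names are illustrative.

```python
# Back-of-the-envelope NameNode capacity estimate using the figures in the text.
# Decimal units (1 GB = 10**9 bytes) are an assumption that matches the
# rounded numbers quoted in the example.

KB = 10**3
GB = 10**9
TB = 10**12

namenode_memory_bytes = 48 * GB   # NameNode memory from the example
metadata_bytes_per_file = 300     # lower bound on per-file memory use
mob_size_bytes = 100 * KB         # size of each MOB stored as its own file

# How many files fit in NameNode memory, and how much MOB data that represents.
max_files = namenode_memory_bytes // metadata_bytes_per_file
max_mob_bytes = max_files * mob_size_bytes

print(f"files the NameNode can track: {max_files:,}")
print(f"total MOB data: {max_mob_bytes / TB:.0f} TB")
```

Because each file takes more than 300 bytes, both results are upper bounds: the real limits would be somewhat lower.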

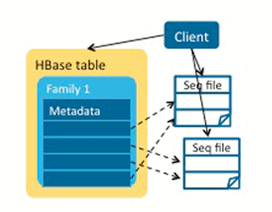

As an improvement, we could have assembled the small MOB files into bigger ones (that is, a file could have multiple MOB entries) and stored the offset and length in the HBase table for fast reading. However, maintaining data consistency and managing deleted MOBs and small MOB files in compactions are difficult.

Furthermore, if we were to use this approach, we’d have to consider new security policies, lose atomicity properties of writes, and potentially lose the backup and disaster recovery provided by replication and snapshots.

HBase MOB Design

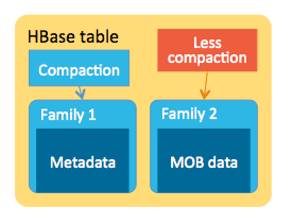

In the end, because most of the concerns around storing MOBs in HBase involve the I/O created by compactions, the key was to move MOBs out of management by normal regions to avoid region splits and compactions there.

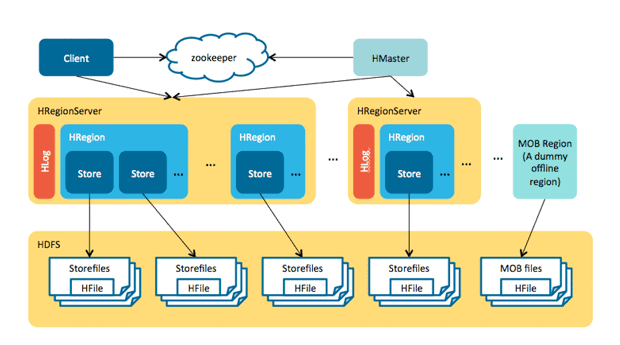

The HBase MOB design is similar to the HBase + HDFS approach because we store the metadata and MOBs separately. The difference lies in the server-side design: the memstore caches the MOBs before they are flushed to disk, the MOBs are written into an HFile called a “MOB file” on each flush, and each MOB file holds multiple entries instead of there being a single HDFS file for each MOB. The MOB file is stored in a special region. All reads and writes go through the current HBase APIs.

Write and Read

Each MOB column family has a threshold: if the value length of a cell is larger than this threshold, the cell is regarded as a MOB cell.

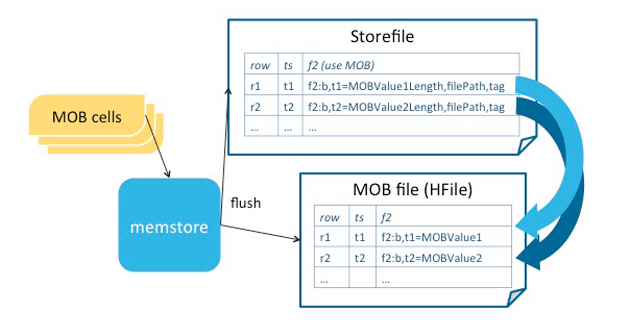

When MOB cells are updated in the regions, they are written to the WAL and memstore, just like normal cells. During a flush, the MOBs are flushed to MOB files, and the metadata and the paths of the MOB files are flushed to store files. Data consistency and HBase replication are native to this design.
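The flush step can be pictured with a small toy model. This is a sketch under stated assumptions, not HBase code: `Cell`, `flush`, and the dictionary standing in for a MOB file are hypothetical, and the reference cell here stores only a file name, which simplifies what the real reference cell carries.

```python
from dataclasses import dataclass

MOB_THRESHOLD = 100 * 1024  # bytes; the post cites 100KB as the default threshold


@dataclass
class Cell:
    row: bytes
    value: bytes
    is_reference: bool = False  # stands in for the reference tag (hypothetical field)


def flush(memstore, mob_file_name, threshold=MOB_THRESHOLD):
    """Split memstore cells into MOB file entries and store file cells."""
    mob_file = {}    # row key -> MOB value (toy stand-in for a MOB HFile)
    store_file = []  # normal cells plus reference cells
    for cell in memstore:
        if len(cell.value) > threshold:
            # MOB cell: the value goes to the MOB file ...
            mob_file[cell.row] = cell.value
            # ... and a reference cell pointing at that file goes to the store file.
            store_file.append(Cell(cell.row, mob_file_name.encode(), True))
        else:
            store_file.append(cell)
    return mob_file, store_file


memstore = [
    Cell(b"car-001", b"red,88km/h"),                  # small metadata value
    Cell(b"car-001-photo", b"\x00" * (200 * 1024)),   # 200KB value, above threshold
]
mob_file, store_file = flush(memstore, "hypothetical-mob-file")
print(sorted(mob_file))
print([(c.row, c.is_reference) for c in store_file])
```

Only the large photo value leaves the normal store file; the small metadata cell is flushed unchanged.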

MOB edits are larger than usual, so the corresponding I/O during a WAL sync is larger too, which can slow down WAL sync operations. If other regions share the same WAL, their write latency can be affected. However, if data consistency and durability are required, the WAL is a must.

Cells can move between store files and MOB files during compactions when the threshold is changed. The default threshold is 100KB.

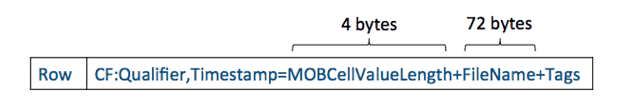

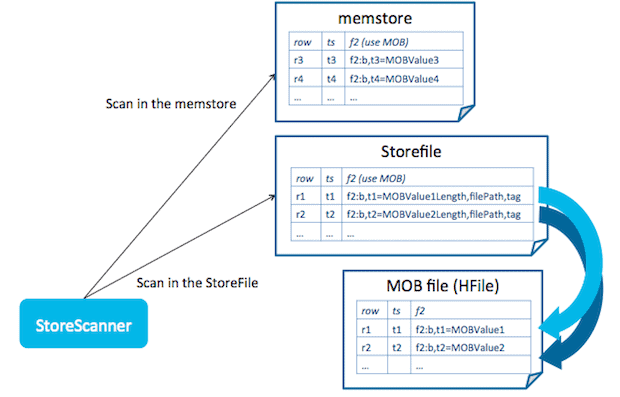

As the accompanying figures illustrate, the cells that contain the paths of MOB files are called reference cells. The tags are retained in the cells, so we can continue to rely on the HBase security mechanism.

The reference cells have reference tags that differentiate them from normal cells. A reference tag implies a MOB cell in a MOB file, so a further resolution step is needed when reading.

On a read, the store scanner opens scanners on the memstore and store files. If a reference cell is encountered, the scanner reads the file path from the cell value and seeks to the same row key in that file. The block cache can be enabled for the MOB files during scans, which can accelerate seeking.
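A minimal sketch of that resolution step, using plain dictionaries in place of store files and MOB files. The function name, the data layout, and the sample paths are all hypothetical and exist only to illustrate the lookup order.

```python
def read_row(row, store_cells, mob_files):
    """Return the value for `row`, resolving a reference cell if needed.

    store_cells: row key -> (value, is_reference)
    mob_files:   MOB file path -> {row key -> MOB value}
    """
    value, is_reference = store_cells[row]
    if not is_reference:
        return value           # normal cell: the value is stored inline
    mob_path = value.decode()  # reference cell: the value holds the file path
    # Only the one MOB file named by the reference is consulted; the read then
    # looks up the same row key inside that file.
    return mob_files[mob_path][row]


store_cells = {
    b"car-001": (b"red,88km/h", False),
    b"car-001-photo": (b"mob/file-a", True),
}
mob_files = {"mob/file-a": {b"car-001-photo": b"<jpeg bytes>"}}

print(read_row(b"car-001", store_cells, mob_files))
print(read_row(b"car-001-photo", store_cells, mob_files))
```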

It is not necessary to open readers on all the MOB files; only the file named by the reference cell is opened, and only when it is needed. This random read is therefore not affected by the number of MOB files, so we don’t need to compact the MOB files over and over again once they are large enough.

The MOB filename is readable, and comprises three parts: the MD5 of the start key, the latest date of cells in this MOB file, and a UUID. The first part is the start key of the region from where this MOB file is flushed. Usually, the MOBs have a user-defined TTL, so you can find and delete expired MOB files by comparing the second part with the TTL.
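The three-part name can be sketched as follows. The text confirms only the three components; the plain concatenation, the `yyyymmdd` date format, and the field widths used here are assumptions for illustration, and both helper functions are hypothetical.

```python
import hashlib
import uuid
from datetime import date, datetime


def mob_file_name(start_key: bytes, latest_cell_date: date) -> str:
    """Build a name from MD5(start key) + date + UUID (layout is assumed)."""
    md5_part = hashlib.md5(start_key).hexdigest()     # 32 hex characters
    date_part = latest_cell_date.strftime("%Y%m%d")   # 8 digits
    uuid_part = uuid.uuid4().hex                      # 32 hex characters
    return md5_part + date_part + uuid_part


def parse_mob_file_name(name: str):
    """Split a name produced by mob_file_name back into its three parts."""
    md5_part, date_part, uuid_part = name[:32], name[32:40], name[40:]
    return md5_part, datetime.strptime(date_part, "%Y%m%d").date(), uuid_part


name = mob_file_name(b"region-start-key", date(2015, 6, 1))
md5_part, latest, uuid_part = parse_mob_file_name(name)
print(name)
print(latest)
```

Keeping the date in the name is what makes the TTL check cheap: expiry can be decided from the file listing alone, without opening any file.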

Snapshot

To be more friendly to the snapshot, the MOB files are stored in a special dummy region, whereby the snapshot, table export/clone, and archive work as expected.

When a snapshot of a table is taken, the MOB region is created in the snapshot and the existing MOB files are added to the manifest. When the snapshot is restored, file links are created in the MOB region.

Cleaning and Compactions

There are two situations when MOB files should be deleted: when the MOB file is expired, and when the MOB file is too small and should be merged into bigger ones to improve HDFS efficiency.

HBase MOB has a chore in master: it scans the MOB files, finds the expired ones determined by the date in the filename, and deletes them. Thus disk space is reclaimed periodically by aging off expired MOB files.
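The selection the chore performs can be sketched like this. The function and its inputs are hypothetical: it assumes the date has already been extracted from each filename and that the TTL is expressed in whole days.

```python
from datetime import date, timedelta


def expired_mob_files(file_dates, ttl_days, today):
    """Return names of MOB files whose newest cell is older than the TTL.

    file_dates: MOB file name -> latest cell date taken from the file name
    """
    cutoff = today - timedelta(days=ttl_days)
    # The date in the name is the latest cell date, so if it is before the
    # cutoff, every cell in the file has expired and the file can be deleted.
    return sorted(name for name, latest in file_dates.items() if latest < cutoff)


files = {
    "mob-a": date(2015, 1, 10),
    "mob-b": date(2015, 5, 20),
    "mob-c": date(2015, 6, 1),
}
print(expired_mob_files(files, ttl_days=30, today=date(2015, 6, 15)))
```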

MOB files may be relatively small compared to an HDFS block if you write rows where only a few entries qualify as MOBs; there might also be deleted cells. You need to drop the deleted cells and merge the small files into bigger ones to improve HDFS utilization. MOB compactions compact only the small files and leave the large files untouched, which avoids repeated compaction of large files.

A MOB compaction works roughly as follows:

- Know which cells are deleted. In every HBase major compaction, the delete markers are written to a del file before they are dropped.

- In the first step of MOB compactions, these del files are merged into bigger ones.

- All the small MOB files are selected. If the number of small files is equal to the number of existing MOB files, this compaction is regarded as a major one and is called an ALL_FILES compaction.

- These selected files are partitioned by the start key and date in the filename. The small files in each partition are compacted with the del files so that deleted cells can be dropped; meanwhile, a new HFile with new reference cells is generated, the compactor commits the new MOB file, and then it bulk loads this HFile into HBase.

- After compactions in all partitions are finished, if an ALL_FILES compaction is involved, the del files are archived.
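The selection and partitioning steps in this list can be sketched as a small planning function. Everything here is a simplified model: `MobFile`, `plan_compaction`, and the `small_file_threshold` parameter are hypothetical names, and the del-file merging and bulk-load steps are left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MobFile:
    start_key_md5: str  # first part of the file name
    latest_date: date   # second part of the file name
    size: int           # bytes


def plan_compaction(files, small_file_threshold):
    """Select small files, classify the compaction, and partition the selection."""
    selected = [f for f in files if f.size < small_file_threshold]
    # If every existing MOB file was selected, this is an ALL_FILES compaction.
    all_files = bool(files) and len(selected) == len(files)
    # Group the selected files by (start key, date) taken from the file name.
    partitions = defaultdict(list)
    for f in selected:
        partitions[(f.start_key_md5, f.latest_date)].append(f)
    return all_files, dict(partitions)


MB = 1024 * 1024
files = [
    MobFile("aaa", date(2015, 6, 1), 2 * MB),
    MobFile("aaa", date(2015, 6, 1), 3 * MB),
    MobFile("bbb", date(2015, 6, 1), 5 * MB),
    MobFile("bbb", date(2015, 6, 2), 900 * MB),  # large file, left untouched
]
all_files, partitions = plan_compaction(files, small_file_threshold=128 * MB)
print(all_files)
for key, members in partitions.items():
    print(key, len(members))
```

In this example the 900MB file is not selected, so the compaction is not an ALL_FILES compaction and, per the last step above, the del files would not be archived.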

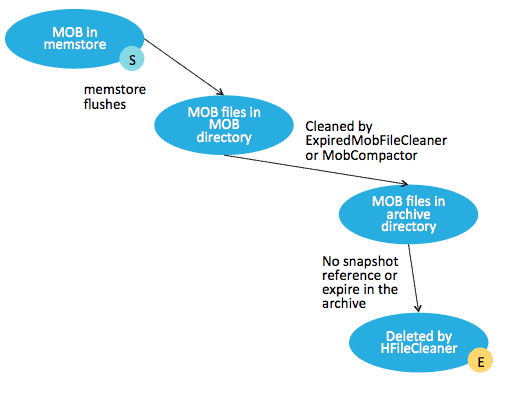

The accompanying figure illustrates the life cycle of MOB files. Basically, they are created when the memstore is flushed, and they are deleted from the filesystem by HFileCleaner once they are no longer referenced by a snapshot or have expired in the archive.

Conclusion

In summary, the new HBase MOB design moves MOBs out of the main I/O path of HBase while retaining most security, compaction, and snapshotting features. It caters to the characteristics of operations in MOB, makes the write amplification of MOBs more predictable, and keeps low latencies in both reading and writing.

Jincheng Du is a Software Engineer at Intel and an HBase contributor.

Jon Hsieh is a Software Engineer at Cloudera and an HBase committer/PMC member. He is also the founder of Apache Flume, and a committer on Apache Sqoop.