Use the scripts and screenshots below to configure a Kerberized cluster in minutes.

Kerberos is the foundation of securing your Apache Hadoop cluster. With Kerberos enabled, user authentication is required. Once users are authenticated, you can use projects like Apache Sentry (incubating) for role-based access control via GRANT/REVOKE statements.

Taming the three-headed dog that guards the gates of Hades is challenging, so Cloudera has put significant effort into making this process easier in Hadoop-based enterprise data hubs. In this post, you’ll learn how to stand up a one-node cluster with Kerberos enforcing user authentication, using the Cloudera QuickStart VM as a demo environment.

If you want to read the product documentation, it’s available here. You should consider this reference material; I’d suggest reading it later to understand more details about what the scripts do.

Requirements

You need the following downloads to follow along.

- The QuickStart VM, along with a corresponding VM runtime environment

- The Java Cryptography Extension (JCE) file from Oracle

- Various scripts and screenshots

Initial Configuration

Before you start the QuickStart VM, increase the memory allocation to 8GB RAM and increase the number of CPUs to two. You can get by with a little less RAM, but we will have everything including the Kerberos server running on one node.
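Once the VM is running, a quick check from a terminal inside it can confirm that the new allocation took effect. The following is a minimal sketch and not part of the downloadable scripts: the function name check_resources is hypothetical, and the 7 GB floor is an assumption (the kernel reports slightly less memory than the hypervisor allocates, and a little less than 8GB can still work).

```shell
# Sketch: warn if the VM has fewer resources than the post suggests.
# check_resources MEM_KB CPUS
check_resources() {
    mem_kb=$1                       # total memory in kB
    cpus=$2                         # number of CPUs
    min_kb=$((7 * 1024 * 1024))     # assumed floor: 7 GB expressed in kB
    rc=0
    if [ "$mem_kb" -lt "$min_kb" ]; then
        echo "WARN: only $((mem_kb / 1024)) MB RAM; the post suggests 8 GB"
        rc=1
    fi
    if [ "$cpus" -lt 2 ]; then
        echo "WARN: only $cpus CPU(s); the post suggests 2"
        rc=1
    fi
    if [ "$rc" -eq 0 ]; then
        echo "OK: $((mem_kb / 1024)) MB RAM, $cpus CPU(s)"
    fi
    return "$rc"
}

# On Linux, read the live values and run the check.
if [ -r /proc/meminfo ] && command -v nproc >/dev/null 2>&1; then
    check_resources "$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)" "$(nproc)" || true
fi
```

A warning here is only a hint to shut the VM down and raise the settings before continuing.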

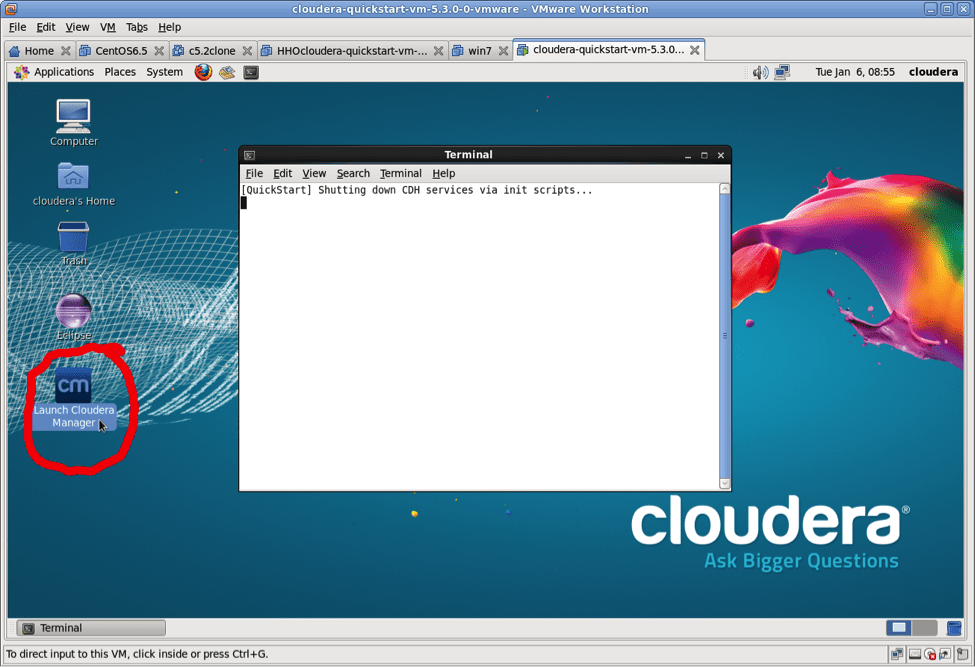

Start up the VM and activate Cloudera Manager as shown here:

Give this script some time to run; it has to restart the cluster.

KDC Install and Setup Script

The script goKerberos_beforeCM.sh does all the setup work for the Kerberos server and the appropriate configuration parameters. The comments are designed to explain what is going on inline. (Do not copy and paste this script! It contains unprintable characters that are pretending to be spaces. Rather, download it.)

    #!/bin/bash
    # (c) copyright 2014 martin lurie sample code not supported

    # reminder to activate CM in the quickstart
    echo Activate CM in the quickstart vmware image
    echo Hit enter when you are ready to proceed
    # pause until the user hits enter
    read foo
    # for debugging - set -x

    # fix the permissions in the quickstart vm
    # may not be an issue in later versions of the vm
    # this fixes the following error
    # failed to start File /etc/hadoop must not be world
    # or group writable, but is 775
    # File /etc must not be world or group writable, but is 775
    #
    # run this as root
    # to become root
    # sudo su -
    cd /root
    chmod 755 /etc
    chmod 755 /etc/hadoop

    # install the kerberos components
    yum install -y krb5-server
    yum install -y openldap-clients
    yum -y install krb5-workstation

    # update the config files for the realm name and hostname
    # in the quickstart VM
    # notice the -i.xxx for sed will create an automatic backup
    # of the file before making edits in place
    #
    # set the Realm
    # this would normally be YOURCOMPANY.COM
    # in this case the hostname is quickstart.cloudera
    # so the equivalent domain name is CLOUDERA
    sed -i.orig 's/EXAMPLE.COM/CLOUDERA/g' /etc/krb5.conf
    # set the hostname for the kerberos server
    sed -i.m1 's/kerberos.example.com/quickstart.cloudera/g' /etc/krb5.conf
    # change domain name to cloudera
    sed -i.m2 's/example.com/cloudera/g' /etc/krb5.conf

    # download UnlimitedJCEPolicyJDK7.zip from Oracle into
    # the /root directory
    # we will use this for full strength 256 bit encryption
    mkdir jce
    cd jce
    unzip ../UnlimitedJCEPolicyJDK7.zip
    # save the original jar files
    cp /usr/java/jdk1.7.0_67-cloudera/jre/lib/security/local_policy.jar local_policy.jar.orig
    cp /usr/java/jdk1.7.0_67-cloudera/jre/lib/security/US_export_policy.jar US_export_policy.jar.orig
    # copy the new jars into place
    cp /root/jce/UnlimitedJCEPolicy/local_policy.jar /usr/java/jdk1.7.0_67-cloudera/jre/lib/security/local_policy.jar
    cp /root/jce/UnlimitedJCEPolicy/US_export_policy.jar /usr/java/jdk1.7.0_67-cloudera/jre/lib/security/US_export_policy.jar

    # now create the kerberos database
    # type in cloudera at the password prompt
    echo suggested password is cloudera
    kdb5_util create -s

    # update the kdc.conf file
    sed -i.orig 's/EXAMPLE.COM/CLOUDERA/g' /var/kerberos/krb5kdc/kdc.conf
    # this will add a line to the file with ticket life
    sed -i.m1 '/dict_file/a max_life = 1d' /var/kerberos/krb5kdc/kdc.conf
    # add a max renewable life
    sed -i.m2 '/dict_file/a max_renewable_life = 7d' /var/kerberos/krb5kdc/kdc.conf
    # indent the two new lines in the file
    sed -i.m3 's/^max_/ max_/' /var/kerberos/krb5kdc/kdc.conf

    # the acl file needs to be updated so the */admin
    # is enabled with admin privileges
    sed -i 's/EXAMPLE.COM/CLOUDERA/' /var/kerberos/krb5kdc/kadm5.acl

    # The kerberos authorization tickets need to be renewable
    # if not the Hue service will show bad (red) status
    # and the Hue “Kerberos Ticket Renewer” will not start
    # the error message in the log will look like this:
    # kt_renewer ERROR Couldn't renew
    # kerberos ticket in
    # order to work around Kerberos 1.8.1 issue.
    # Please check that the ticket for 'hue/quickstart.cloudera'
    # is still renewable

    # update the kdc.conf file to allow renewable
    sed -i.m3 '/supported_enctypes/a default_principal_flags = +renewable, +forwardable' /var/kerberos/krb5kdc/kdc.conf
    # fix the indenting
    sed -i.m4 's/^default_principal_flags/ default_principal_flags/' /var/kerberos/krb5kdc/kdc.conf

    # There is an addition error message you may encounter
    # this requires an update to the krbtgt principal
    # 5:39:59 PM ERROR kt_renewer
    #
    #Couldn't renew kerberos ticket in order to work around
    # Kerberos 1.8.1 issue. Please check that the ticket
    # for 'hue/quickstart.cloudera' is still renewable:
    # $ kinit -f -c /tmp/hue_krb5_ccache
    #If the 'renew until' date is the same as the 'valid starting'
    # date, the ticket cannot be renewed. Please check your
    # KDC configuration, and the ticket renewal policy
    # (maxrenewlife) for the 'hue/quickstart.cloudera'
    # and `krbtgt' principals.
    #
    #
    # we need a running server and admin service to make this update
    service krb5kdc start
    service kadmin start
    kadmin.local <# cloudera-scm/admin@YOUR-LOCAL-REALM.COM
    # add the admin user that CM will use to provision
    # kerberos in the cluster
    kadmin.local <
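To see what the sed -i.SUFFIX edits in the script do without touching /etc, the same three substitutions can be tried on a scratch file. This is a sketch under assumptions: the sample contents below are a cut-down stand-in for a stock krb5.conf, not a copy of the QuickStart VM's file, and the temporary directory exists only for the demonstration.

```shell
# Work on a scratch copy so nothing under /etc is touched.
workdir=$(mktemp -d)
conf="$workdir/krb5.conf"

# A cut-down stand-in for the stock file (contents assumed for illustration).
cat > "$conf" <<'EOF'
[libdefaults]
 default_realm = EXAMPLE.COM

[realms]
 EXAMPLE.COM = {
  kdc = kerberos.example.com
  admin_server = kerberos.example.com
 }

[domain_realm]
 .example.com = EXAMPLE.COM
 example.com = EXAMPLE.COM
EOF

# The same three substitutions as the script. Each -i.SUFFIX leaves a
# backup of the file as it was just before that particular edit.
sed -i.orig 's/EXAMPLE.COM/CLOUDERA/g' "$conf"
sed -i.m1 's/kerberos.example.com/quickstart.cloudera/g' "$conf"
sed -i.m2 's/example.com/cloudera/g' "$conf"

# Show what changed relative to the untouched original.
# diff exits non-zero when the files differ, which is expected here.
diff "$conf.orig" "$conf" || true
```

Because each edit keeps its own backup (.orig, .m1, .m2), any single step can be inspected or rolled back by copying the matching backup over the file.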

Cloudera Manager Kerberos Wizard

After running the script, you now have a working Kerberos server and can secure the Hadoop cluster. The wizard will do most of the heavy lifting; you just have to fill in a few values.

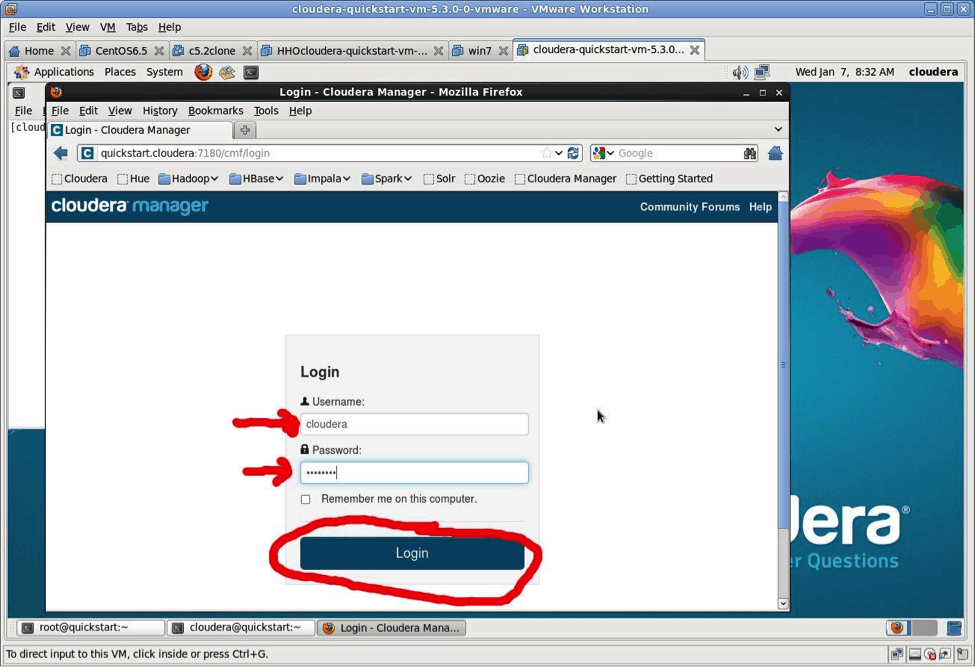

To start, log into Cloudera Manager by going to http://quickstart.cloudera:7180 in your browser. The user ID is cloudera and the password is cloudera. (It almost goes without saying, but never use “cloudera” as a password in a real-world setting.)

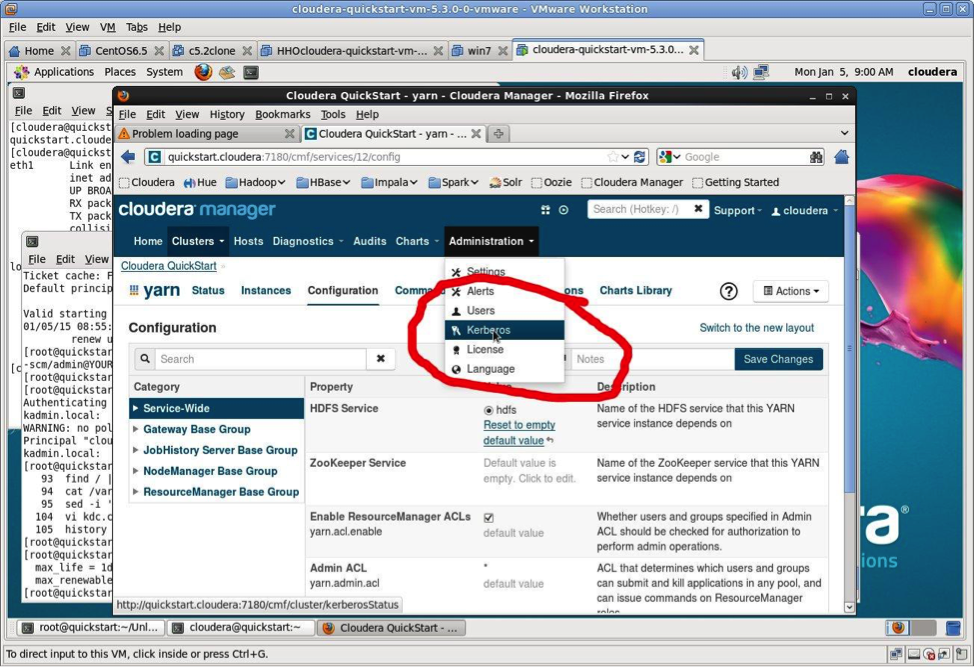

There are lots of productivity tools here for managing the cluster, but ignore them for now and head straight for the Administration > Kerberos wizard as shown in the next screenshot.

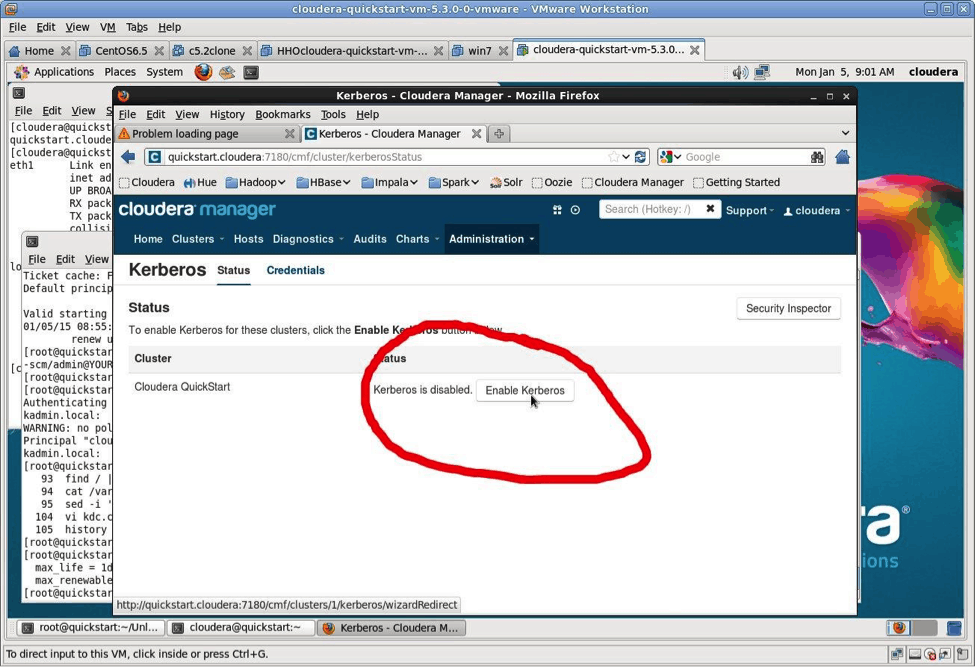

Click on the “Enable Kerberos” button.

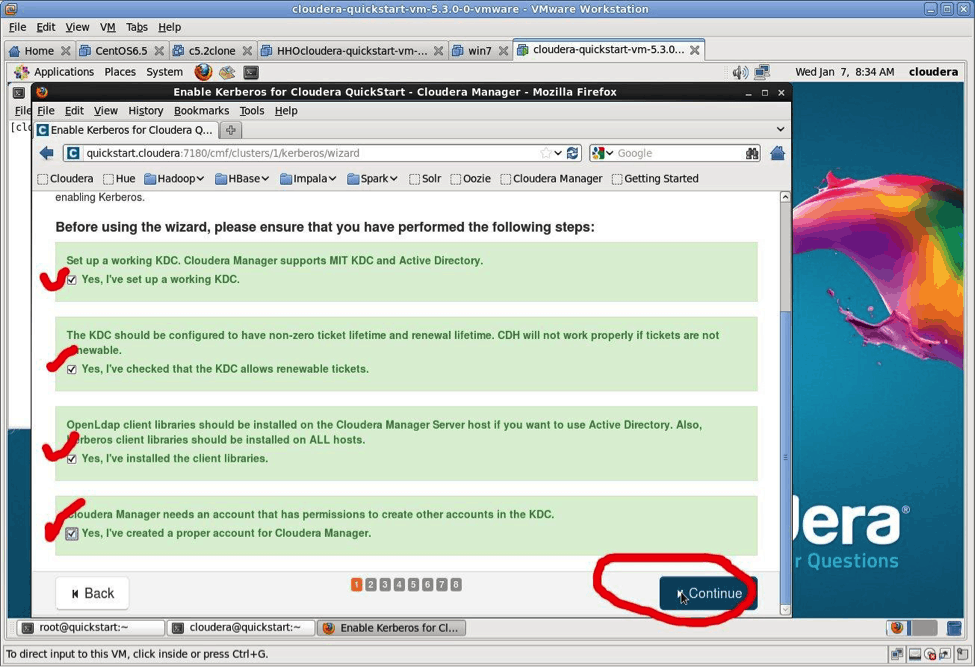

The four checklist items were all completed by the script you’ve already run. Check off each item and select “Continue.”

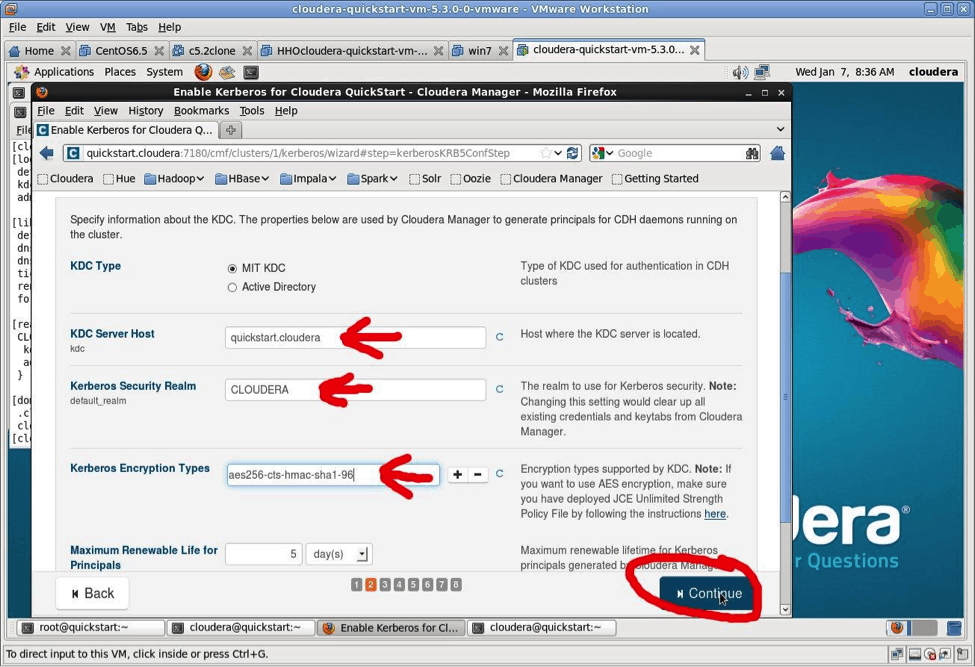

The Kerberos Wizard needs to know the details of what the script configured. Fill in the entries as follows:

- KDC Server Host: quickstart.cloudera

- Kerberos Security Realm: CLOUDERA

- Kerberos Encryption Types: aes256-cts-hmac-sha1-96

Click “Continue.”
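If you want to double-check the host and realm values against what the script wrote, they can be read back out of krb5.conf. The helper below is a hypothetical sketch (show_kdc_settings is not part of the scripts or of Cloudera Manager) and assumes the simple "key = value" layout of the stock file.

```shell
# Print the default realm and every kdc entry from a krb5.conf-style file.
show_kdc_settings() {
    awk -F'=' '
        /^[ \t]*default_realm[ \t]*=/ { gsub(/[ \t]/, "", $2); print "realm=" $2 }
        /^[ \t]*kdc[ \t]*=/           { gsub(/[ \t]/, "", $2); print "kdc=" $2 }
    ' "$1"
}

# Run against the system file when it is present and readable.
if [ -r /etc/krb5.conf ]; then
    show_kdc_settings /etc/krb5.conf
fi
```

On the QuickStart VM after the setup script has run, the realm and kdc lines should match the Kerberos Security Realm and KDC Server Host fields entered in the wizard.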

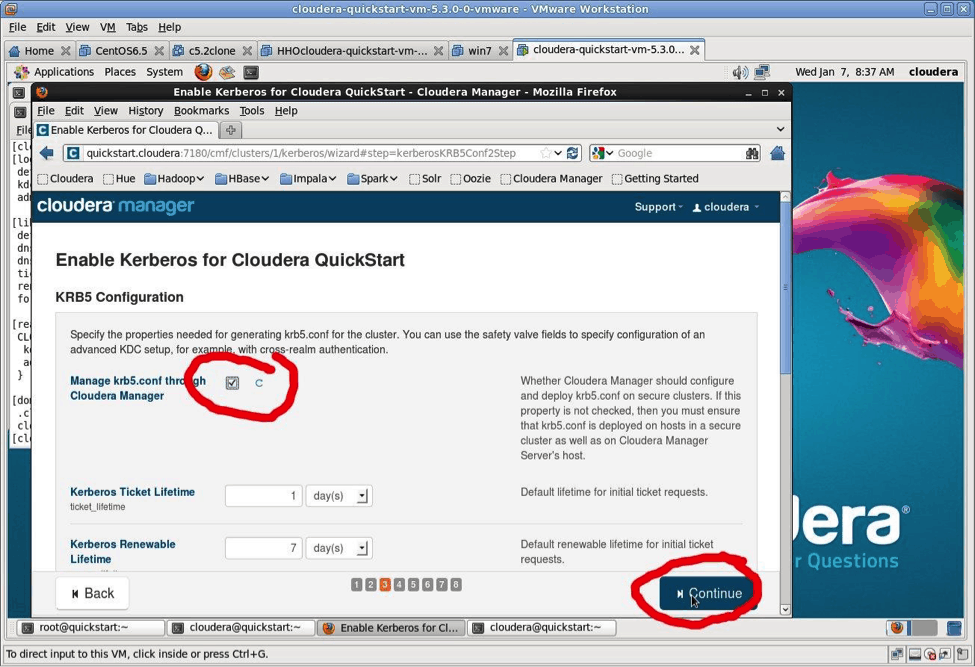

Do you want Cloudera Manager to manage the krb5.conf files in your cluster? Remember, the whole point of this blog post is to make Kerberos easier. So, please check “Yes” and then select “Continue.”

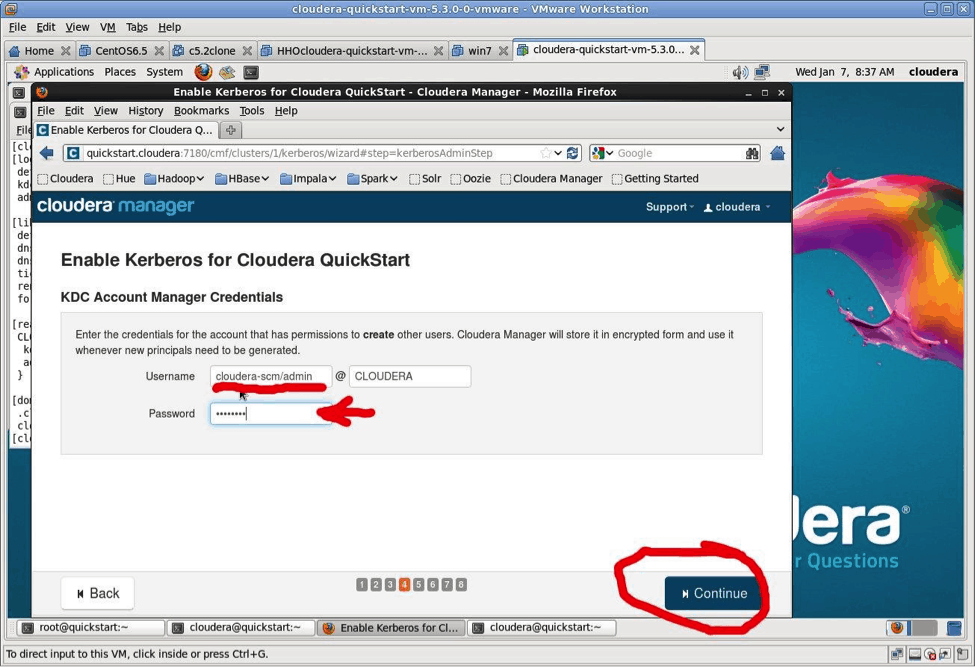

The Kerberos Wizard is going to create Kerberos principals for the different services in the cluster. To do that it needs a Kerberos Administrator ID. The ID created is: cloudera-scm/admin@CLOUDERA.

The screenshot shows how to enter this information. Recall the password is: cloudera.
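The administrator ID follows the usual Kerberos principal layout of primary/instance@REALM, and the admin instance is what the */admin rule in kadm5.acl matches. As an illustration only, the parts can be pulled apart with plain shell parameter expansion; split_principal is a hypothetical helper, not something the wizard or the scripts provide.

```shell
# Split a Kerberos principal of the form primary[/instance]@REALM.
split_principal() {
    principal=$1
    realm=${principal##*@}      # everything after the last @
    name=${principal%@*}        # everything before the last @
    primary=${name%%/*}         # part before the first /
    case $name in
        */*) instance=${name#*/} ;;   # part after the first /
        *)   instance="" ;;           # user principals have no instance
    esac
    echo "primary=$primary instance=$instance realm=$realm"
}

split_principal cloudera-scm/admin@CLOUDERA
split_principal hdfs@CLOUDERA
```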

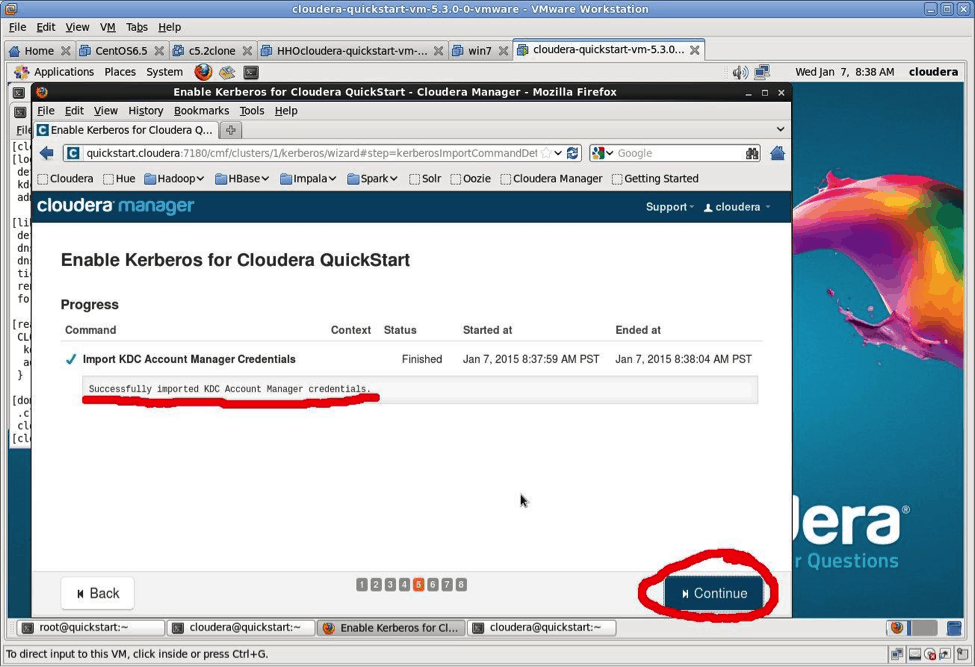

The next screen provides good news. It lets you know that the wizard was able to successfully authenticate.

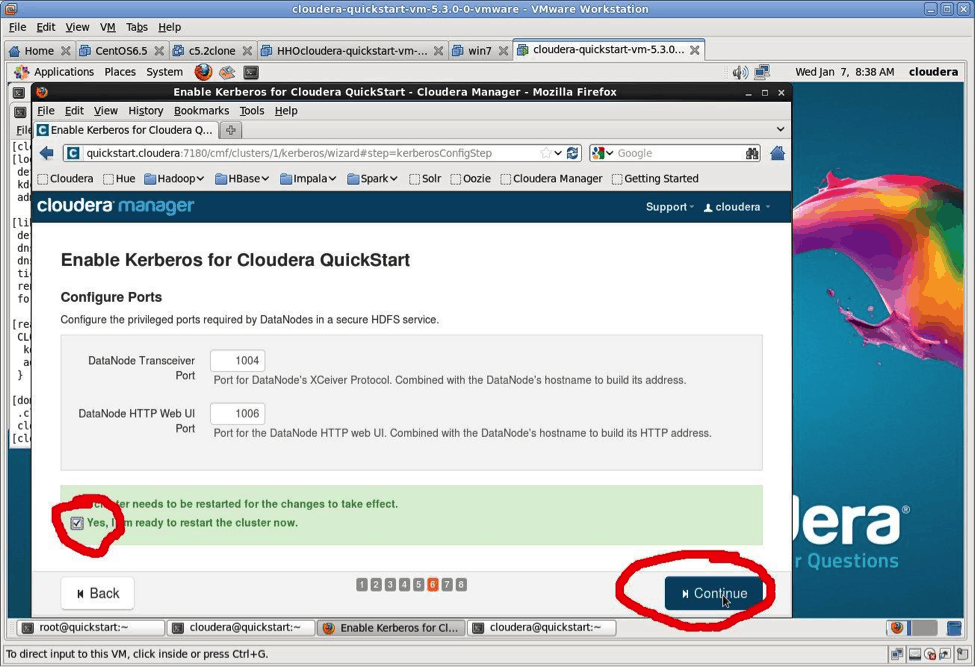

OK, you’re ready to let the Kerberos Wizard do its work. Since this is a VM, you can safely select “I’m ready to restart the cluster now” and then click “Continue.” You now have time to go get a coffee or other beverage of your choice.

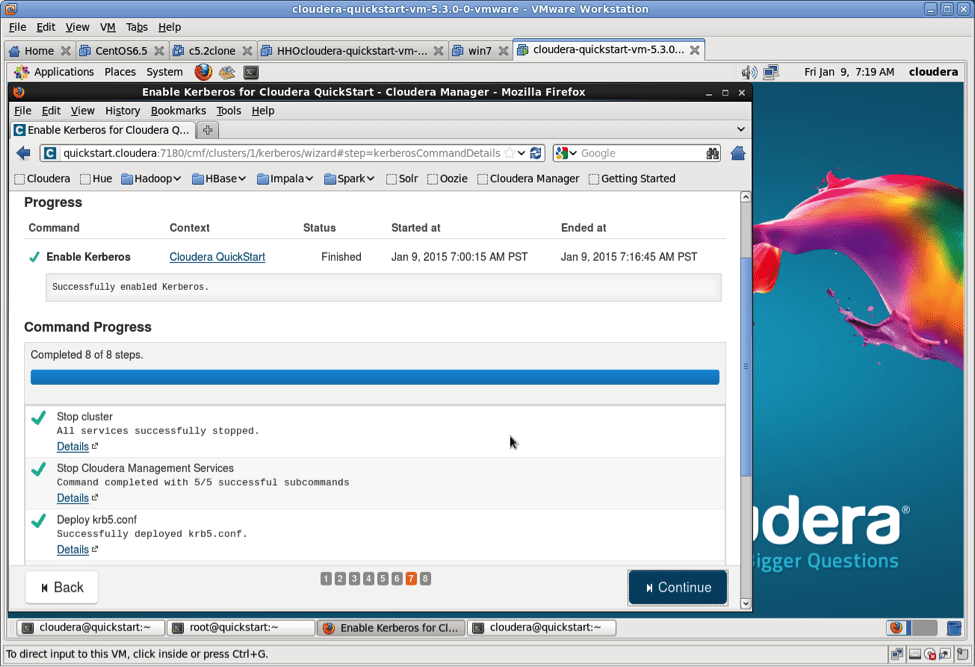

How long does that take? Just let it work.

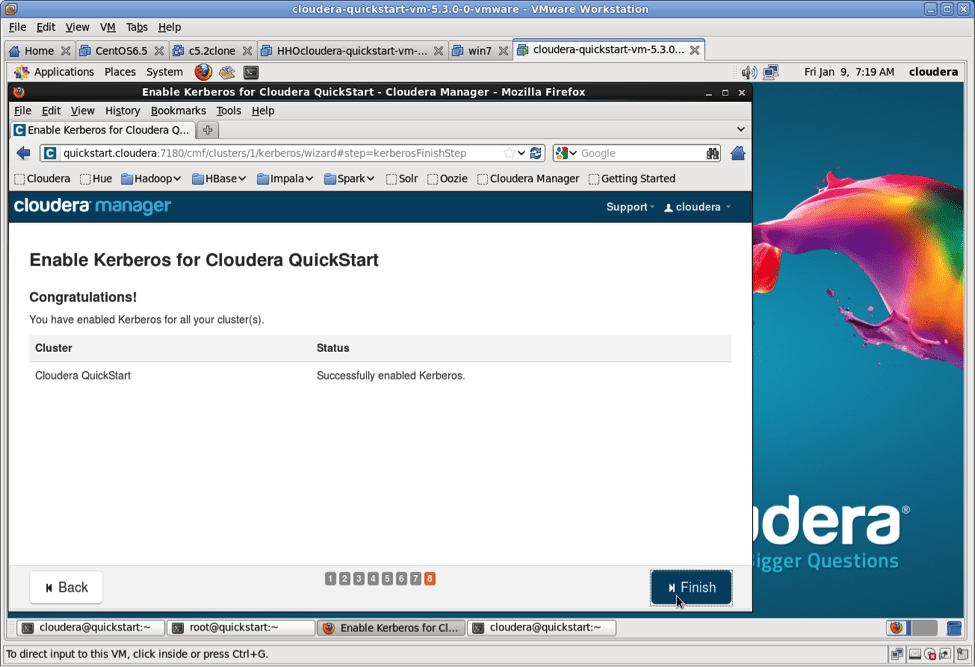

Congrats, you are now running a Hadoop cluster secured with Kerberos.

Kerberos is Enabled. Now What?

The old method of su - hdfs will no longer provide administrator access to the HDFS filesystem. Here is how you become the hdfs user with Kerberos:

kinit hdfs@CLOUDERA

Now validate you can do hdfs user things:

    hadoop fs -mkdir /eraseme
    hadoop fs -rmdir /eraseme

Next, destroy the Kerberos ticket so as not to break anything while you still hold hdfs superuser rights:

kdestroy
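The kinit, work, kdestroy sequence above is easy to forget halfway through, so it can be wrapped in a small function. This is a sketch, not part of the original scripts: run_as_principal is a hypothetical name, and the KINIT and KDESTROY variables are assumptions added so the function can be dry-run without a KDC. It uses the default credential cache, so kdestroy removes whatever ticket that cache holds.

```shell
# Run one command as a given principal, then always drop the ticket.
# run_as_principal PRINCIPAL COMMAND [ARGS...]
run_as_principal() {
    principal=$1
    shift
    # kinit prompts for the password; give up if authentication fails.
    "${KINIT:-kinit}" "$principal" || return 1
    "$@"
    rc=$?
    # Destroy the ticket whether or not the command succeeded.
    "${KDESTROY:-kdestroy}"
    return "$rc"
}

# Example (not executed here):
#   run_as_principal hdfs@CLOUDERA hadoop fs -mkdir /eraseme
```

The function returns the wrapped command's exit status, so it can be used in scripts that need to know whether the HDFS operation itself worked.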

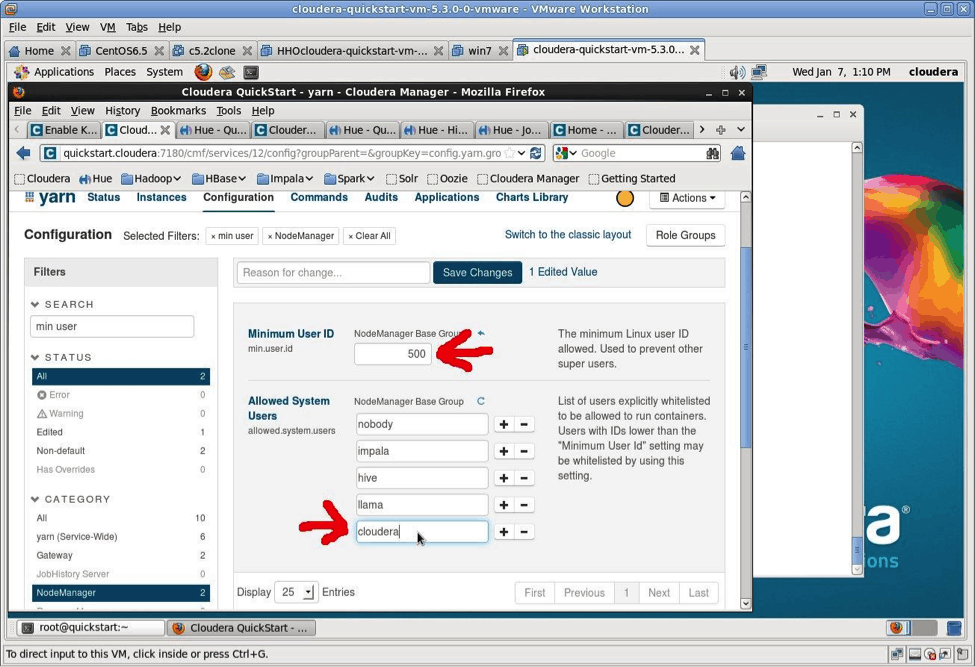

The YARN min.user.id parameter needs to be lowered, because the cloudera user's ID (501) is below the current minimum, per the message below:

    Requested user cloudera is not whitelisted and has id 501, which is below the minimum allowed 1000
    Must kinit prior to using cluster

This is the error message you get without fixing min.user.id:

Application initialization failed (exitCode=255) with output: Requested user cloudera is not whitelisted and has id 501, which is below the minimum allowed 1000
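The comparison behind this message is simple: a job is refused when the submitting user's numeric ID is below the configured minimum. The sketch below mimics only that comparison so you can reason about candidate settings; check_min_user_id is a hypothetical helper, whitelisting is not modelled, and the value 500 is an assumed example rather than a setting taken from this post.

```shell
# check_min_user_id USER UID MIN_UID
# Succeeds when UID is at or above MIN_UID, fails otherwise.
check_min_user_id() {
    user=$1
    uid=$2
    min_uid=$3
    if [ "$uid" -lt "$min_uid" ]; then
        echo "$user (id $uid) is below the minimum allowed $min_uid"
        return 1
    fi
    echo "$user (id $uid) meets the minimum allowed $min_uid"
}

# Values from the error message above.
check_min_user_id cloudera 501 1000 || true
# Hypothetical lowered setting; 500 is an assumed value for illustration.
check_min_user_id cloudera 501 500
```

On a real host, `id -u cloudera` prints the numeric ID to feed into the second argument.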

Save the changes shown above and restart the YARN service. Now validate that the cloudera user can use the cluster:

    kinit cloudera@CLOUDERA
    hadoop jar /usr/lib/hadoop-0.20-mapreduce/hadoop-examples.jar pi 10 10000

If you forget to kinit before trying to use the cluster, you’ll get the errors below. The simple fix is to use kinit with the principal you wish to use.

    # force the error to occur by eliminating the ticket with kdestroy
    [cloudera@quickstart ~]$ kdestroy
    [cloudera@quickstart ~]$ hadoop fs -ls
    15/01/12 08:21:33 WARN security.UserGroupInformation: PriviledgedActionException as:cloudera (auth:KERBEROS) cause:javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    15/01/12 08:21:33 WARN ipc.Client: Exception encountered while connecting to the server : javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    15/01/12 08:21:33 WARN security.UserGroupInformation: PriviledgedActionException as:cloudera (auth:KERBEROS) cause:java.io.IOException: javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    ls: Failed on local exception: java.io.IOException: javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]; Host Details : local host is: "quickstart.cloudera/127.0.0.1"; destination host is: "quickstart.cloudera":8020;
    [cloudera@quickstart ~]$ hadoop fs -put /etc/hosts myhosts
    15/01/12 08:21:47 WARN security.UserGroupInformation: PriviledgedActionException as:cloudera (auth:KERBEROS) cause:javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    15/01/12 08:21:47 WARN ipc.Client: Exception encountered while connecting to the server : javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    15/01/12 08:21:47 WARN security.UserGroupInformation: PriviledgedActionException as:cloudera (auth:KERBEROS) cause:java.io.IOException: javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]
    put: Failed on local exception: java.io.IOException: javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]; Host Details : local host is: "quickstart.cloudera/127.0.0.1"; destination host is: "quickstart.cloudera":8020;
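Rather than deciphering that stack of GSS errors after the fact, a script can check for a ticket up front. The guard below is a sketch: require_ticket and the KLIST override are hypothetical names added for illustration, and it relies on the silent mode of MIT Kerberos klist (klist -s), which exits non-zero when the credential cache is missing or expired.

```shell
# Succeeds when the default credential cache holds an unexpired ticket.
# KLIST can be overridden for dry runs on hosts without Kerberos tools.
have_ticket() {
    "${KLIST:-klist}" -s
}

# Print a hint and fail if no valid ticket is available.
require_ticket() {
    if have_ticket; then
        return 0
    fi
    echo "No valid Kerberos ticket; run kinit with your principal first" >&2
    return 1
}

# Example (not executed here):
#   require_ticket && hadoop fs -ls
```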

Congratulations, you have a running Kerberos cluster!

Marty Lurie is a Systems Engineer at Cloudera.
