This blog post was published on Hortonworks.com before the merger with Cloudera. Some links, resources, or references may no longer be accurate.

If data is the new bacon, data stewardship supplies its nutrition label!

This is the first part of a two-part blog series that introduces Data Steward Studio (DSS) and discusses the problems that DSS addresses in the enterprise data landscape. Part 2 will provide a detailed walkthrough of its capabilities.

Data lakes, which promise business agility coupled with rapid time to insight, have become the backbone of scalable, modern data architectures across enterprises today. With the ability to store all types of data for longer periods and immense flexibility to query and access data using multiple methods, data lakes aim to provide an elastic, converged platform with data management services focused on business use cases that can deliver financial value through relevant insights. However, enterprise adoption of data lakes is plagued by anti-patterns such as:

- “geo-sprawls” of siloed data ponds, with unnecessary duplication of unorganized data across business units, locales, and on-premises and cloud environments,

- “ungoverned swamps” whose purpose no one really knows, filled with data of questionable quality and trustworthiness, or

- “over-governed fiefdoms” that impose such stifling restrictions on who can view, access, and work with the data that no one ends up getting any value from the data lake investment.

With the convergence of cloud, IoT, and big data technologies, data lakes provide the critical fuel for enterprise-wide digital transformations. As businesses are required to store their data simultaneously in multiple data lakes or ponds spread across many geographies (for example, due to regulatory and compliance mandates that limit cross-border data transfer) and across multiple cloud platforms, effective centralized data management of such hybrid environments becomes paramount. Such data management requires a nuanced balance between governance and democratization of data. Providing adequate stewardship, with the right set of rules and policies around data security and privacy as well as rational policy enforcement across the information supply chain, is critical to adoption and value creation. Enterprises now require a “global insight fabric” that strikes a balance between adequate data governance rules and policies and a trusted environment in which users can collaborate and share data responsibly to create value from such modern data architectures.

DISCOVER with Data Steward Studio (DSS)

At Hortonworks, we strongly believe that the journey to value creation with data lakes is at an inflection point: a global insight fabric that provides a common way to manage, secure, and govern this rapidly evolving, dynamic, multi-location, virtualized, multi-cloud hybrid environment is critical for collaborative value creation in most enterprises. Our approach is to provide robust capabilities through the Hortonworks DataPlane Service (DPS), an enterprise-grade global insight fabric that helps customers capture insights sooner, distill knowledge from the underlying data faster through collaboration, and realize an adequate return on their data lake investments quickly. To comprehensively address the challenge of understanding hybrid and multi-cloud data lake environments, we unveiled Data Steward Studio (DSS) at DataWorks Summit in Berlin in April. DSS is the second service to be generally available on the DPS platform, and its launch reflects several key challenges that enterprises face today:

- the proliferation of data types and sources,

- the expensive and time-consuming process of discovery, organization, and curation of data,

- the need to gain global visibility into the business context, usage, and trustworthiness of data, and

- the need for centralized data and metadata security controls and access monitoring.

Together, these challenges create a significant chasm between initial data capture and the subsequent insights that drive value creation. DSS, as an enabler of this global insight fabric, is designed to close this gap.

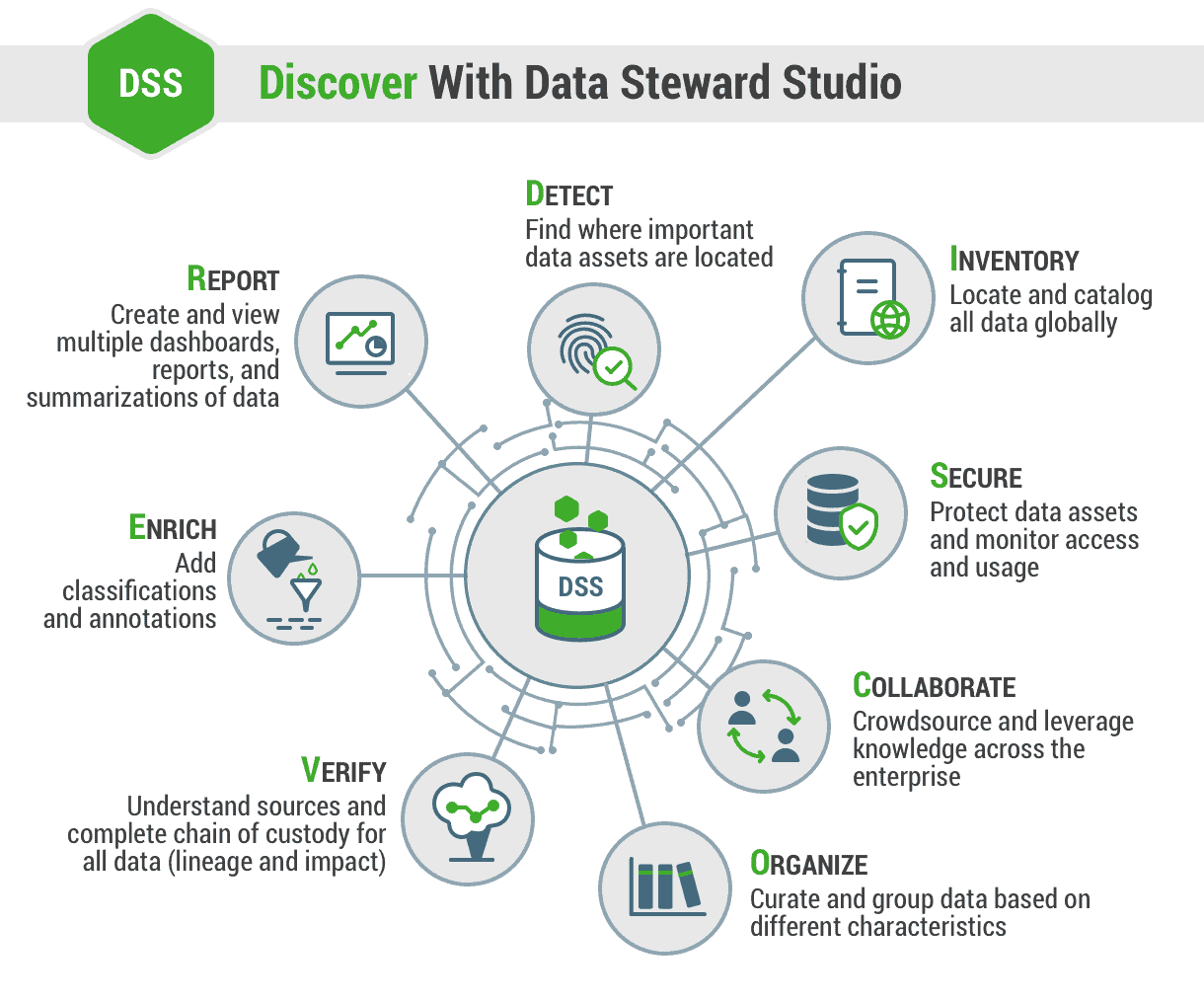

With DSS, businesses will be able to understand the data in their hybrid data lake environments from a 360-degree perspective through our ‘DISCOVER’ approach:

- Detect: Find where important data assets are located

- Inventory: Locate and catalog all data globally

- Secure: Protect data assets and monitor their access and usage

- Collaborate: Crowdsource and leverage knowledge across the enterprise

- Organize: Curate and group data based on different characteristics

- Verify: Understand the sources and complete chain of custody for all data (lineage and impact)

- Enrich: Add classifications and annotations

- Report: Create and view multiple dashboards, reports, and summarizations of data

DSS empowers enterprises to precisely identify and evaluate the trust levels of their data, to collaborate securely, and to confidently democratize data across the enterprise in order to derive value from the data in their data lakes – whether those data lakes are located in on-premises data centers, in the cloud, or across multiple cloud provider environments.

DSS provides various personas across the enterprise, such as information stewards, data scientists, business analysts, and data engineers, with robust capabilities to find, curate, secure, collaborate on, and report on data and its context through an intuitive user experience. This context can include key characteristics of the data such as its structure, metadata, classifications, lineage, sources, governance and security rules and policies, and usage audits across data lakes.

With DSS, data stewards, engineers, and analysts gain the following capabilities:

- Organize and Curate Data Globally: Data professionals are able to organize and characterize data based on a variety of criteria, including business classifications, purpose, required protections, and more. These additional levels of structure and coordination promote responsible collaboration among enterprise data workers and help ensure that the veracity of the data remains intact.

- Discover, Catalog and Search: With DSS, personal or sensitive data can be easily discovered and tagged so that it can be classified and searched by data consumers such as business analysts and data scientists. With a focus on automation, DSS includes a flexible data profiler framework that can run a variety of data profilers as a pipeline of operations on data located across multiple data lakes.

- Asset Collection: To ease management and administration of data assets, DSS enables data stewards and consumers to create Asset Collections, which represent curated lists of assets grouped by characteristics such as their origin, value, protection level, sensitivity, or functional use. With Asset Collections, data consumers can organize, search, summarize, and understand data, enabling secure collaboration, identification of anomalous access patterns, and forensic audit and compliance controls.

- Data Lineage & Impact: The ability to determine the chain of custody of data has long been a critical aspect of data governance. With the arrival of the General Data Protection Regulation (GDPR), a comprehensive view of data lineage has perhaps never been more important. DSS also empowers data professionals to:

- Track and understand how data is created and modified

- Visualize the chain of custody of data from source to final destination across data lakes, providing both upstream lineage and downstream impact for assets

- Understand how data, its structure, and its schema evolve

- View and understand the data supply chain (pipelines and evolution)

- Secure Data and Metadata: To facilitate data security and governance, DSS gives data professionals a better understanding of the enterprise-wide authorization landscape: who can access which data and metadata, and under what specific conditions, based on physical and logical metadata as well as business classifications. DSS also provides a single view of security policies, data protection status, and any anonymization or pseudonymization rules that have been defined. In addition, users will be able to:

- View who has accessed what data from a forensic audit/compliance perspective

- Visualize access patterns and identify anomalies

- View context and structure descriptions: schema, classifications (business cataloging), and encodings

- Understand statistical properties, models, and parameters

- View user annotations, wrangling scripts, view definitions, and so on
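
The upstream lineage and downstream impact views listed under Data Lineage & Impact are, at their core, traversals of a directed graph whose edges connect each data asset to the assets derived from it. The sketch below is purely illustrative and is not DSS code or a DSS API: the edge list and the asset names (`hive.raw_clicks`, `hive.sessions`, and so on) are hypothetical, and a real deployment would obtain lineage edges from its metadata catalog rather than from a hard-coded list.

```python
from collections import defaultdict, deque

# Hypothetical lineage edges (source_asset, derived_asset); names are illustrative only.
EDGES = [
    ("s3://landing/clicks.json", "hive.raw_clicks"),
    ("hive.raw_clicks", "hive.sessions"),
    ("hive.customers", "hive.sessions"),
    ("hive.sessions", "hive.daily_kpis"),
]


def build_graph(edges):
    """Index the edges in both directions: child -> parents and parent -> children."""
    upstream = defaultdict(set)    # asset -> assets it was derived from
    downstream = defaultdict(set)  # asset -> assets derived from it
    for src, dst in edges:
        downstream[src].add(dst)
        upstream[dst].add(src)
    return upstream, downstream


def reachable(graph, start):
    """Breadth-first search: all assets reachable from `start` via one or more edges."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):  # .get avoids inserting keys into the defaultdict
            if nxt not in seen:          # the seen set also guards against cycles
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    upstream, downstream = build_graph(EDGES)
    # Upstream lineage: where did the KPI table come from?
    print(sorted(reachable(upstream, "hive.daily_kpis")))
    # ['hive.customers', 'hive.raw_clicks', 'hive.sessions', 's3://landing/clicks.json']
    # Downstream impact: what is affected if the raw clicks table changes?
    print(sorted(reachable(downstream, "hive.raw_clicks")))
    # ['hive.daily_kpis', 'hive.sessions']
```

Following the `upstream` index answers "where did this asset come from?", while following the `downstream` index answers "what would be affected if this asset changed?"; the same traversal serves both questions because only the edge direction differs.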

In summary, DSS enables enterprises to contextualize knowledge about data located across hybrid data lake platforms, which in turn allows them to take meaningful action and generate actionable insights about their business operations, reducing the lag between insight discovery and value creation.

See DSS in action in the keynote demo at DataWorks Summit Berlin and in the conference breakout session on Security & Governance.