You may have heard of the recent (and ongoing) hacks targeting open source database solutions like MongoDB and Apache Hadoop. From what we know, an unknown number of hackers scanned for internet-accessible installations that had been set up using the default, non-secure configuration. Finding the exposure, these hackers then accessed the systems and in some cases deleted the files or held them for ransom.

These attacks were not technologically sophisticated, and they could have been easily avoided.

We suspect that many Hadoop sites that fell victim to this hack were set up by users who were unaware of their own exposure, and we’d like to make sure that Cloudera customers can protect themselves. This blog post aims to help you do just that.

Security is a complex topic and we cannot go into a complete treatment of that topic here. Our goal is to ensure that only people authorized to access Cloudera installations can do so. We will then recommend additional steps you can take in your journey to secure your deployment. Since the information in this blog is generic (i.e., intended for all users) and provides only basic steps that should be employed to improve your Hadoop security, we urge all Cloudera customers to contact us directly for a complete and robust security review and configuration. Hackers are always trying to stay one step ahead, and our security professionals can help you achieve your security goals.

Network Access Control

To begin with, we want to make sure that your Cloudera installation is reachable only from the locations that are meant to reach it. Clearly, for example, your researchers in the next building, or your data processing pipeline in your data center, need to reach your Cloudera cluster. However, sound security practice dictates that few databases, if any, should be directly accessible from the open internet, or even from most of your corporate network. We therefore advise you to think through your data pipeline and cluster management policies and work out which locations (i.e., which network subnets) you or your organization need to access the cluster from.

Then, apart from testing that your cluster is accessible where it is needed, we advise you to use the following steps to confirm that the cluster is NOT accessible from other places, particularly the open internet.

There are many ways to test reachability (or unreachability) of your cluster. In the following example, we will use nmap. Follow these steps:

- Find out the IP address(es) at which your cluster is reachable. When you ssh into your cluster machines, or connect to Cloudera Manager, Hue, and so on, you will be using a hostname or an IP address. If you are using a hostname, find the IP address it resolves to (several tools exist for this; dig or host will do the job nicely). If your IP address is a private network address (e.g., one that begins with 10., 192.168., or 172.16. through 172.31.), then your cluster may not be reachable from outside anyway, but you will have to talk to your network administrator to be sure. If it is not a private address, continue on to the next step.

- Armed with these IP addresses, access the internet from outside of your corporate network (say, from a library or from your home, without using a VPN). Now try to access your cluster machines, as follows (say the IP address for one of your machines is 203.0.113.11):

nmap -Pn -p 1004-60030 203.0.113.11

- You want the result to say that the hosts or the ports were not accessible. If any of the hosts or ports were accessible, then you have a problem that needs to be fixed.
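To make the private-address check in the first step concrete, here is a minimal Python sketch. It assumes you already have your cluster's IP addresses as strings; the function name `is_rfc1918` is ours for illustration and is not part of any Cloudera tool. It tests only the three private ranges named above, so a result of `False` means the address may be publicly routable and is worth scanning from outside.

```python
import ipaddress

# The three private IPv4 ranges named in the text (RFC 1918).
# We list them explicitly because the standard library's broader
# `is_private` attribute also flags other special-purpose ranges.
PRIVATE_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),   # 172.16.x.x through 172.31.x.x
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(address: str) -> bool:
    """Return True if the address falls in one of the private ranges above."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in PRIVATE_NETWORKS)


if __name__ == "__main__":
    # Example addresses only; substitute the ones your hostnames resolve to.
    for addr in ["10.1.2.3", "172.31.255.1", "172.32.0.1", "203.0.113.11"]:
        kind = "private" if is_rfc1918(addr) else "possibly public, scan it"
        print(f"{addr}: {kind}")
```

A private address is not proof of safety (port forwarding can still expose it), so treat this only as a way to decide which addresses to scan first.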

Here is what nmap output looks like when traffic from the internet to the hosts or ports in question is blocked:

    Starting Nmap 7.12 ( https://nmap.org ) at 2017-01-20 12:00 EST
    Nmap scan report for 203.0.113.11
    Host is up (0.015s latency).
    All 59027 scanned ports on 203.0.113.11 are filtered
    Nmap done: 1 IP address (1 host up) scanned in 214.18 seconds

Here is what the output will look like if you do have some ports on your host that are accessible.

    Starting Nmap 7.12 ( https://nmap.org ) at 2017-01-20 12:00 EST
    Nmap scan report for (203.0.113.11)
    Host is up (0.083s latency).
    Not shown: 58915 closed ports
    PORT     STATE SERVICE
    2049/tcp open  nfs
    2181/tcp open  unknown
    3306/tcp open  mysql
    4242/tcp open  vrml-multi-use
    ...
    Nmap done: 1 IP address (1 host up) scanned in 195.38 seconds
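If you scan many hosts, reading each report by eye gets tedious. The following Python sketch pulls the open ports out of nmap's normal text output. It assumes the default output layout, where each port line looks like `2049/tcp open nfs`; the function name `open_ports` and the sample text are ours for illustration.

```python
import re

# A port line in nmap's default output: "<port>/<proto> <state> <service>"
PORT_LINE = re.compile(r"^(\d+)/(tcp|udp)\s+(\S+)\s+(\S+)")


def open_ports(nmap_output: str) -> list[tuple[int, str, str]]:
    """Return (port, protocol, service) for every port reported as open."""
    found = []
    for line in nmap_output.splitlines():
        match = PORT_LINE.match(line.strip())
        if match and match.group(3) == "open":
            found.append((int(match.group(1)), match.group(2), match.group(4)))
    return found


# Shortened sample report, modeled on the output in this post.
SAMPLE = """\
Nmap scan report for 203.0.113.11
Host is up (0.083s latency).
Not shown: 58915 closed ports
PORT     STATE SERVICE
2049/tcp open  nfs
2181/tcp open  unknown
3306/tcp open  mysql
Nmap done: 1 IP address (1 host up) scanned in 195.38 seconds
"""

if __name__ == "__main__":
    for port, proto, service in open_ports(SAMPLE):
        print(f"exposed: {port}/{proto} ({service})")
```

An empty list is the result you are hoping for when the scan was run from outside your network.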

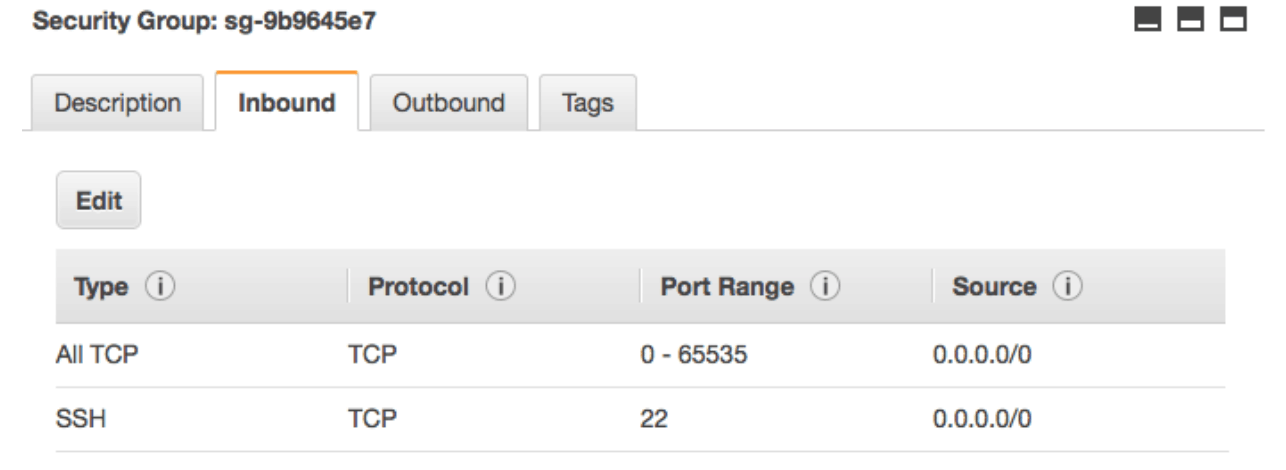

If you use a cloud provider, you might be able to figure out if your cluster is open to the internet by looking at the networking rules. For instance, Amazon Web Services uses security groups to decide who can reach your cluster and who can not. Here is an example of a group that allows anyone to connect:

The problem is that the first rule, “All TCP”, allows anyone to connect to any port on the cluster machines (that’s what the 0.0.0.0/0 means).

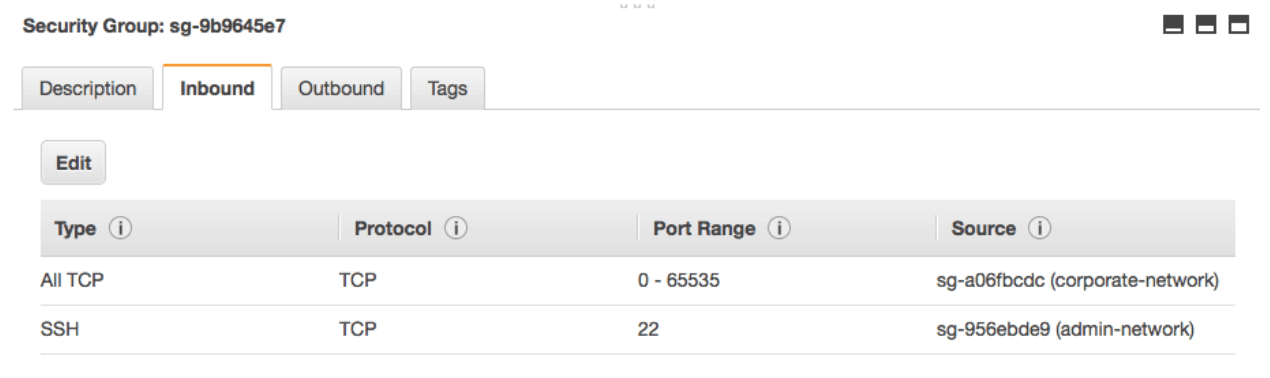

Here is an example where the above security group has been fixed:

As you can see, SSH access has been restricted to only the admin network (meaning that you have to already be on the admin network in order to be able to ssh into the cluster machines). All other ports are open to anyone in the corporate network.
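If you export your security group rules as JSON, the same check can be automated. The Python sketch below assumes the rule layout returned by the AWS `describe-security-groups` call (`IpPermissions`, `IpRanges`, `CidrIp`). The example group, its admin network 198.51.100.0/24, and the function name `world_open_rules` are made up for illustration, and the sketch looks only at IPv4 ranges.

```python
def world_open_rules(group: dict) -> list[dict]:
    """Return the inbound rules that admit traffic from any IPv4 address."""
    risky = []
    for permission in group.get("IpPermissions", []):
        for ip_range in permission.get("IpRanges", []):
            if ip_range.get("CidrIp") == "0.0.0.0/0":
                risky.append({
                    "protocol": permission.get("IpProtocol"),
                    "from_port": permission.get("FromPort"),
                    "to_port": permission.get("ToPort"),
                })
    return risky


# Hypothetical group resembling the "allows anyone" example above.
EXAMPLE_GROUP = {
    "GroupName": "cluster-nodes",
    "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 0, "ToPort": 65535,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},        # "All TCP" from anywhere
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "198.51.100.0/24"}]},  # SSH from admin network
    ],
}

if __name__ == "__main__":
    for rule in world_open_rules(EXAMPLE_GROUP):
        print("open to the internet:", rule)
```

Any rule this reports deserves a second look; in most cluster deployments the list should be empty.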

If you find that you do have ports accessible from locations that should not have access to cluster services, you will need to either shut down the services running on these ports (if these services are indeed not needed) or block access from these locations. You may need to consult a network administrator about how to shut off access correctly. Some tools that may be useful if you need to do this:

- Host firewalls like firewalld or iptables, while ordinarily a good solution for blocking unwanted traffic, do not work well with Cloudera software. Please read on for alternatives.

- Network firewalls, depending on your networking infrastructure. Your networking administrator should be able to help you with these.

- If you are using a cloud hosting provider, you will need to consult your provider’s documentation. For example, for AWS, you should look into security groups (Cloudera has some guidance if you are using Director). For Azure, look into network security groups. Google Compute Engine will also let you configure network firewalls.

I couldn’t find any ports accessible, am I safe?

If you ran the above example and did find ports accessible, clearly you have a problem that you need to fix. What if you did not find any ports accessible? Does that mean your cluster is not accessible by curious or malicious actors on the internet?

The short answer is that you can’t be totally sure that your cluster is not accessible. For instance, maybe your network has port forwarding, selective source filtering, or one of a variety of other mechanisms by which your particular nmap invocation got blocked, but an alternate invocation, or a test from a different source, could still work.

The good news is that such configurations are hard to get to by accident or negligence, which is what we think happened in the hacked installations. They will require explicit configuration of your corporate network to allow such access. Alternatively, some other assets inside your corporate network (the part of the network you may typically assume to be safe from the bad guys) might have been compromised and could be serving as launching pads for attacks on your cluster.

Good security systems follow the principle of defense in depth, which tells us that we need several “layers” of security. We cannot just rely on firewalls to provide security since they can be bypassed. Cloudera provides several other measures you should adopt to thwart the hackers who do manage to find your cluster despite your firewalls and other network security.

Further Work

In the above example, we only showed you how to check if your cluster was accessible from the internet at large. While that is a good start, in reality, most of your corporate network itself will not need to access your cluster. Following the principle of least privilege, you ought to restrict access from such areas of the corporate network as well. You can follow the same principles outlined above to achieve this.

Going a step further, not everyone who needs access to some cluster services needs access to all of them, and in particular to the management services for the cluster. For instance, the ingest tools do not need access to Cloudera Manager or Hue. Thus, you can and should further limit access by service.
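One way to reason about per-service restrictions is to write the intended policy down as data before translating it into firewall or security group rules. The Python sketch below is only a model of such a policy, not something that enforces it. The subnets are hypothetical, and the port numbers are the usual defaults for Cloudera Manager (7180), Hue (8888), and the HDFS NameNode (8020), which may differ in your deployment.

```python
import ipaddress

# Hypothetical policy: which source networks may reach which TCP ports.
POLICY = {
    "10.20.0.0/24": {22, 7180, 8888},  # admin network: SSH, Cloudera Manager, Hue
    "10.30.0.0/16": {8020},            # ingest hosts: HDFS NameNode only
}


def is_allowed(source: str, port: int) -> bool:
    """Return True if the policy lets this source address reach this port."""
    ip = ipaddress.ip_address(source)
    return any(
        ip in ipaddress.ip_network(network) and port in ports
        for network, ports in POLICY.items()
    )


if __name__ == "__main__":
    # An ingest host may write to HDFS but may not reach Cloudera Manager.
    print(is_allowed("10.30.4.5", 8020))
    print(is_allowed("10.30.4.5", 7180))
```

Anything not listed is denied, which is the least-privilege default described above.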

A detailed treatment for both of these topics is beyond the scope of this blog. Please look at the references below for further reading and for pointers.

Default Passwords

Another key factor in the hacks was that many of the instances were using their default settings. In most software the default settings are meant for ease-of-use for demo and evaluation of the software — it is assumed that folks using the software in production will modify the setup to make it secure. One area where this becomes particularly important is passwords.

It is very important not to use default passwords for anything but the initial login. When setting up a new Cloudera cluster, we recommend that you change passwords from the default to something secure as soon as possible; if possible, remove the default admin account. Instructions for Cloudera Manager are available here.

If you already have running clusters that use default passwords, change those passwords right away. For example, Cloudera Manager will allow you to change passwords from the admin console.

Are we there yet?

No. The above steps are only the most basic security measures that you should take. Once you have taken the above steps, we suggest that you look into the following:

- Wire encryption with TLS

- Authentication with Kerberos

- Authorization using Sentry and ACLs

- At-rest encryption, using HDFS transparent encryption and Cloudera Navigator Encrypt

- Cloudera Navigator auditing

Moreover, just like the drive to your grandma’s house when you were a kid, security for any real-world system is a never-ending journey. As the usage of your system evolves, and as we issue advisories for new issues we find, you will constantly find yourself having to adjust your security settings.

We recommend that you learn about general Hadoop security concepts using the resources in the following section. Follow Cloudera’s security bulletins to keep up-to-date with the latest news, join our community and keep an eye on our blogs.

For further information

Cloudera issued an advisory on the ongoing hacks.

Cloudera documentation has a section on security.

Hadoop Security is a great resource if you prefer to have a book to learn from.

Cloudera has been running (and will continue to run) security training sessions at the Strata Hadoop World conferences. Come join us on March 14 in San Jose to learn more.