[Editor’s note: Now that the recent merger is complete, the Cloudera Engineering blog will expand to cover products, such as this, originally developed for the Hortonworks platform. Please stay tuned for future product announcements regarding availability of these products on the Cloudera platform.]

Since the release of Streams Messaging Manager (SMM) at the end of last summer, our customers have started to cure the Kafka Blindness within their organizations by using SMM to monitor their Kafka clusters and streaming microservices applications. With the release of SMM 1.2, we have delivered on the top three most requested features in SMM:

- Topic Lifecycle Management

- Alerting

- Schema Registry Integration

The full list of features can be found in the SMM 1.2 docs.

Topic Lifecycle Management Integrated with Ranger

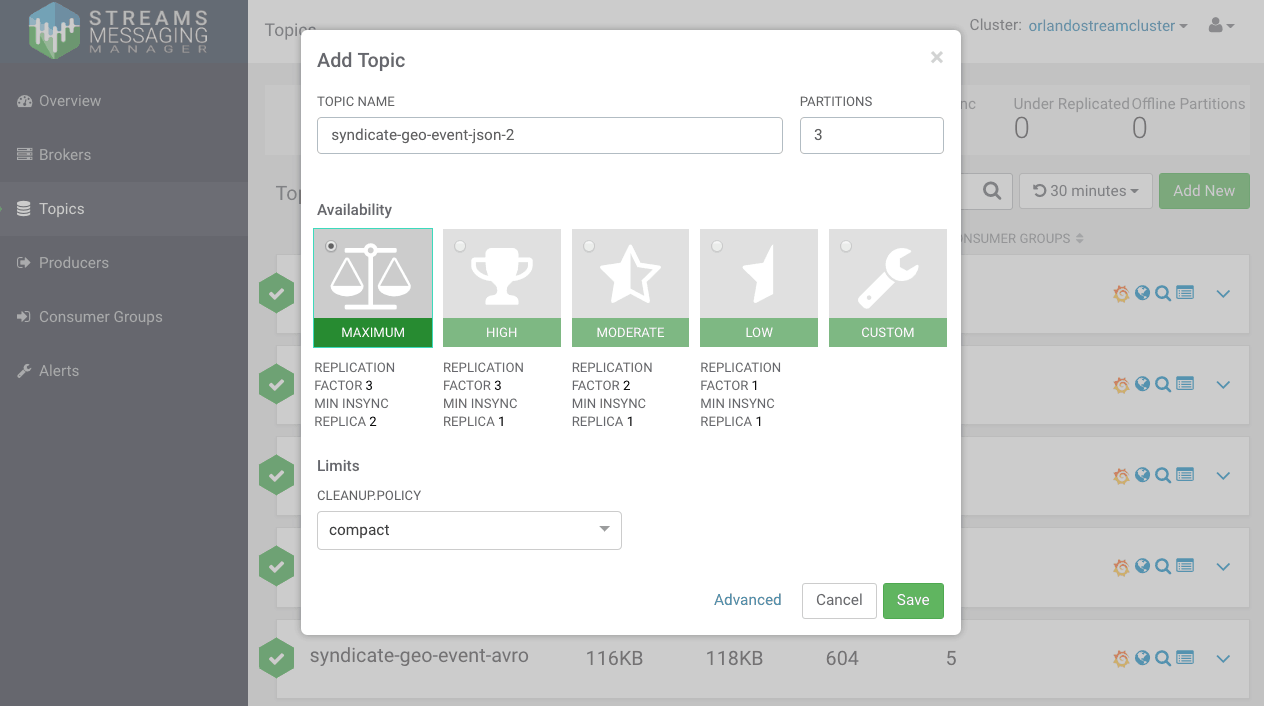

With SMM 1.2, users can now create, update and delete topics within SMM. These actions can be performed in the SMM DataPlane UI or via SMM REST services. The screenshot shows the topic creation wizard, which lets users create topics either by choosing availability characteristics (replication factor, min in-sync replicas, etc.) or with full customization.

These operations are fully integrated with Kafka Ranger policies such that only authorized users can perform these topic lifecycle management actions.
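To make the availability characteristics concrete, here is a minimal sketch of how replication factor and min in-sync replicas relate to each other. It is not SMM code and does not call the SMM REST services; `TopicSpec` and its methods are hypothetical names introduced for illustration. The only real Kafka identifier used is the topic-level config key `min.insync.replicas`, and the failure-tolerance figure assumes producers write with `acks=all`.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class TopicSpec:
    """Hypothetical container for the settings a topic wizard might collect."""
    name: str
    partitions: int
    replication_factor: int
    min_insync_replicas: int

    def validate(self) -> None:
        """Reject combinations that Kafka could never satisfy."""
        if self.partitions < 1:
            raise ValueError("partitions must be at least 1")
        if not 1 <= self.min_insync_replicas <= self.replication_factor:
            raise ValueError(
                "min.insync.replicas must be between 1 and the replication factor"
            )

    def tolerated_broker_failures(self) -> int:
        """Replicas that can be lost while acks=all producers can still write."""
        return self.replication_factor - self.min_insync_replicas

    def topic_configs(self) -> Dict[str, str]:
        """Topic-level overrides, keyed by the real Kafka config name."""
        return {"min.insync.replicas": str(self.min_insync_replicas)}


# Example: a commonly used high-availability combination.
spec = TopicSpec("orders", partitions=6, replication_factor=3, min_insync_replicas=2)
spec.validate()
print(spec.tolerated_broker_failures(), spec.topic_configs())
```

With a replication factor of 3 and min in-sync replicas of 2, one replica can be unavailable before `acks=all` writes start to fail, which is the kind of trade-off the availability options express.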

Alerting

Platform Operations and DevOps teams need the ability to create alerts to manage the SLA of their applications. SMM 1.2 now provides rich alert management features for the critical components of a Kafka cluster including brokers, topics, consumers and producers.

The key constructs for alerting in SMM are:

- Alert Notifier – An alert notifier tells SMM what to do when a configured alert is triggered. Out-of-the-box notifiers can send alerts to a configured email inbox, an HTTP endpoint or a Kafka topic, which makes it possible to integrate alerts with other systems in the enterprise (e.g., ticketing/case creation systems). Users can also configure custom alert notifiers.

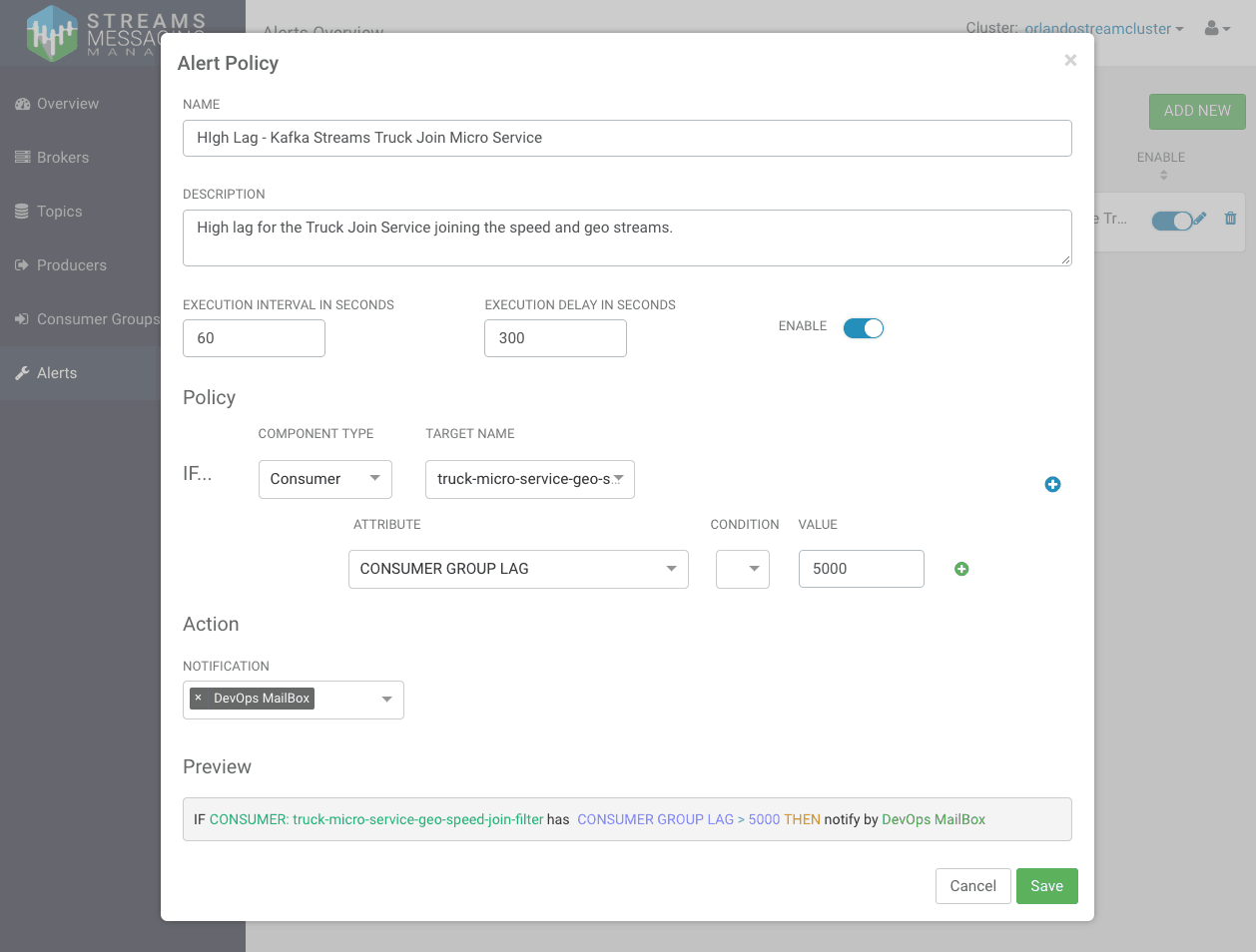

- Alert Policy – The policy is the definition of a given alert. An alert can be defined for the five key entities in Kafka: cluster, broker, topic, producer and consumer. For each of these entities, a set of metrics can be selected to define the alert. Metrics across different entities can be combined with conditional operators to create more complex alerts. The alert policy also specifies which notifier to invoke when the alert fires.

The following shows an example of an SMM alert policy that triggers an alert if a given consumer group lag exceeds a threshold.

Use Case Example: Alerting on MicroService Consumer Group With High Lag

In the previous SMM blog Monitoring Kafka Streams Microservices with Hortonworks Streams Messaging Manager (SMM), we discussed how to use SMM to monitor microservices built using Kafka Streams.

The video showcases how Streams Messaging Manager (SMM) is used to create a Kafka alert policy for a consumer group that has frequently displayed high lag behavior. With the alert in place, the lag is detected earlier and fixed before it affects downstream systems.
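The condition behind a lag alert like this one can be sketched in a few lines. This is an illustration of the general idea, not SMM's alert engine: the function names are hypothetical, and the sketch assumes that lag is the log end offset minus the committed offset, summed over partitions, and that a partition with no committed offset is treated as starting from offset 0.

```python
from typing import Dict


def consumer_group_lag(
    log_end_offsets: Dict[int, int],
    committed_offsets: Dict[int, int],
) -> int:
    """Total lag across partitions: log end offset minus committed offset."""
    total = 0
    for partition, end_offset in log_end_offsets.items():
        # Assumption: a partition without a committed offset counts from 0.
        committed = committed_offsets.get(partition, 0)
        # Clamp at zero so a stale end-offset reading cannot produce negative lag.
        total += max(end_offset - committed, 0)
    return total


def lag_alert_fires(lag: int, threshold: int) -> bool:
    """The alert fires only when lag strictly exceeds the threshold."""
    return lag > threshold


# Example: partition 0 is 20 messages behind, partition 1 is caught up.
lag = consumer_group_lag({0: 120, 1: 80}, {0: 100, 1: 80})
print(lag, lag_alert_fires(lag, threshold=10))
```

In practice the offsets would come from the cluster's metrics rather than literal dictionaries, and the notifier attached to the policy would decide where the alert is delivered.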

Integration with Schema Registry

Schema Registry (SR) allows the user to define schemas for a given Kafka topic and provides the following key benefits:

- Data Governance

  - Provide reusable schemas (centralized registry)

  - Define relationships between schemas (version management)

  - Enable generic format conversion and generic routing (schema validation)

- Operational Efficiency

  - Avoid attaching a schema to every piece of data (centralized registry)

  - Let consumers and producers evolve at different rates (version management)

  - Improve data quality (schema validation)

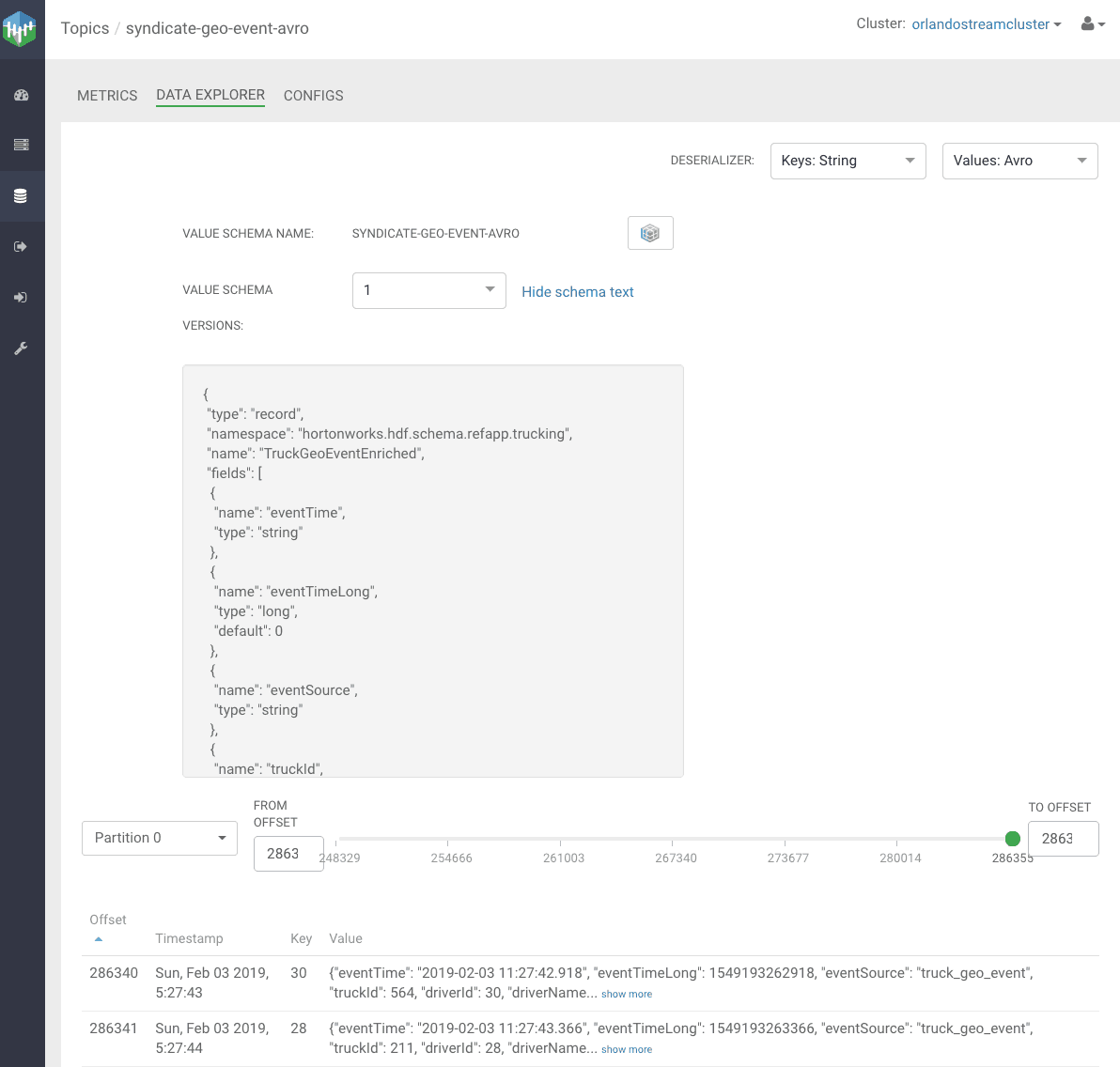

As the diagram illustrates, SR is integrated with SMM 1.2, which provides the ability to view, create and modify the schema associated with a given Kafka topic.
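The version management benefit can be illustrated with a toy example. The sketch below is an in-memory stand-in, not the Schema Registry API: `InMemorySchemaRegistry` and `read_with_schema` are hypothetical names, and a "schema" is simplified to a mapping from field name to default value. It shows how a producer can move to a newer schema version while a consumer keeps reading with an older one.

```python
from typing import Any, Dict, List

Schema = Dict[str, Any]  # simplified: field name -> default value


class InMemorySchemaRegistry:
    """Toy centralized registry that keeps every version of each named schema."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[Schema]] = {}

    def register(self, name: str, schema: Schema) -> int:
        """Store a new version and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(schema)
        return len(versions)

    def get(self, name: str, version: int) -> Schema:
        """Look up a specific version of a named schema."""
        return self._versions[name][version - 1]


def read_with_schema(record: Dict[str, Any], reader_schema: Schema) -> Dict[str, Any]:
    """Keep only the reader's fields, filling defaults for fields the record lacks."""
    return {field: record.get(field, default) for field, default in reader_schema.items()}


registry = InMemorySchemaRegistry()
v1 = registry.register("payment", {"id": None, "amount": 0.0})
v2 = registry.register("payment", {"id": None, "amount": 0.0, "currency": "USD"})

new_record = {"id": 7, "amount": 9.5, "currency": "EUR"}  # written with version 2
old_record = {"id": 3, "amount": 1.0}                     # written with version 1

# A consumer still on version 1 ignores the field it does not know about.
print(read_with_schema(new_record, registry.get("payment", v1)))
# A consumer on version 2 fills in the default for older records.
print(read_with_schema(old_record, registry.get("payment", v2)))
```

Because the schema lives in the registry, messages only need to carry a reference to a schema version rather than the schema itself.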

What’s Next?

With topic lifecycle management, alerting and integration with Schema Registry, powerful new features have been delivered in SMM 1.2 to help our customers fight the Kafka Blindness. There is a lot more innovation coming as the team is working hard on new capabilities to manage and monitor Kafka replication across multiple data centers. Stay Tuned!
