A tale of two organizations

Here at Cloudera, we’ve seen many large organizations struggle to meet ever-changing and ever-growing business demands. We see it everywhere. Traditional on-premises architectures, which provide a fixed, finite set of resources, force every business request for new insight into a crazy resource balancing act that ends in long wait times or a straight-up no, it cannot be done. The cloud has given us hope: with public clouds at our disposal, we now have virtually infinite resources. But they come at a different cost. Using the cloud means we may be creating yet another series of silos, which introduces new, hard-to-measure risks to the security and traceability of our data. What we need is an easy way to get to the cloud, use it only when we need it, and not run up the bill when we don’t.

For example, one large telco we work with consistently bemoans the fact that no matter how much they pad their annual budgets (even by 50%), there’s never enough to cover the business demands they face, and the surplus they add is consumed even before the halfway mark. Although they need tremendous compute power, they also need to control their costs, which forces resource restrictions on the various groups that use the platform. When departments are not well served by central IT, “shadow IT” emerges. These independent departmental IT projects threaten internal network security because central IT cannot control them; most of the time, central IT is not even aware of them. In addition, the costs generated by independent IT projects frequently skyrocket because few controls are in place to manage them. The central IT department at this telco is bombarded from multiple directions and can never make its IT budget stretch to meet demand. The pressure is felt not only by central IT but also by business users within the organization, who are frustrated with the lack of service provided by the CIO’s organization. After all, every new request for IT resources or infrastructure seems to take one to three months; under these circumstances, their shadow IT projects seem well justified!

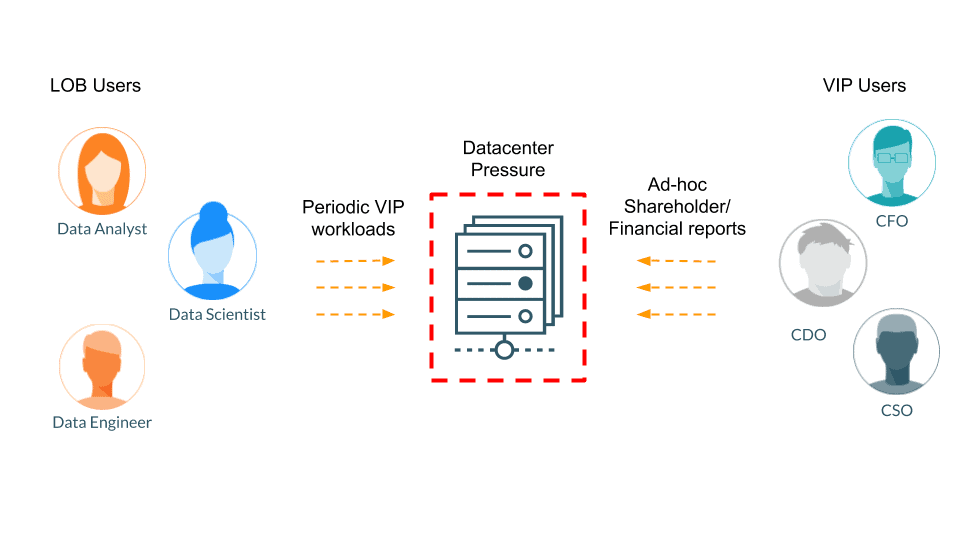

As a contrasting example, we also work with a large multinational bank whose CIO must protect certain VIP workloads. These VIP workloads must receive the highest priority while central IT continues to serve routine organizational demands, adheres to strict SLAs, and operates within its budget. There are two types of VIP workloads. Perpetual workloads run constantly and are mission-critical to the various lines of business (LoBs), especially those dealing in risk analytics, platform support, or data engineering, which hydrate critical data pipelines for the LoBs. Periodic workloads run on a recurring schedule, such as the beginning or end of each quarter, when the bank runs business growth reports, shareholder reports, and financial reports for its earnings calls, to name a few examples. These periodic workloads pose a unique challenge for the CIO, who needs the flexibility to easily turn off the VIP status of these workloads or users when they are not high priority. This flexibility is crucial to operating within budget. Overall, limited infrastructure means all LoBs compete for resources, while the VIP workloads must maintain top priority and the CIO must operate within the allocated budget.

Typical scenarios for most customer data centers

Most of our customers’ data centers struggle to keep up with dynamic, ever-increasing business demands. The two examples above offer a quick glance at the challenges customers face from peak demand and the extreme pressure it puts on their data centers. The slow, rigid nature of hardware procurement makes it impossible to meet the dynamic and intermittent demands of the business. “Over-sizing” helps during times of peak demand, but justifying the ROI of such over-provisioning is next to impossible. Moving to a cloud-only model allows for flexible provisioning, but the costs accrued by that strategy rapidly negate the advantage of flexibility.

A solution

At Cloudera, we listened to our customers’ problems and built the Burst to Cloud feature in Workload Manager (WXM), Cloudera’s intelligent workload management tool. Burst to Cloud not only relieves pressure on your data center but also protects your VIP applications and users by giving them optimal performance without breaking the bank.

Burst to Cloud enables you to move select workloads to the cloud and gives you the flexibility to make sharp pivots in how you manage and deploy your IT workloads. Your sunk costs are minimal, and if a workload or project you are supporting becomes irrelevant, you can quickly spin down your cloud data warehouses and not be “stuck” with unused infrastructure. Cloud deployments for suitable workloads give you the agility to keep pace with rapidly changing business and data needs.

The Burst to Cloud option might sound obvious or even trivial. However, when you consider the overhead of analyzing and identifying workloads for cloud-readiness and then actually moving them securely to the cloud, you quickly realize that simply “bursting to the cloud” is anything but trivial. You are probably hesitant. Today, it is nearly impossible for IT departments to know if a particular workload is optimal to move from on-premises to the cloud. In addition, estimating the capacity that needs to be provisioned for the identified workload can be equally challenging and time-consuming. Typically, when we talk about data warehousing at an enterprise level on the cloud, one of the biggest concerns is that moving workloads from on-premises to the cloud is not seamless and opens up new risks for data safety and security. Key areas of concern are:

- Compliance Risks – This ranges from the wrong people having access to the data, to not fully knowing the proper origins of the data.

- Security Risks – On the cloud, you are faced with a different security paradigm as compared to on-premises deployments. Cloud deployments add tremendous overhead because you must reimplement security measures and then manage, audit, and control them.

- Inability to maintain context – This is the worst of them all because every time a data set or workload is re-used, you must recreate its context including security, metadata, and governance.

However, Cloudera’s Burst to Cloud feature takes care of these concerns for you automatically. You need not spend weeks analyzing and identifying which workloads to move. You simply take the recommendations from WXM and securely burst your workloads to the cloud. Move your workloads as either a long-term or a temporary scale-out option to protect your VIP applications and, more importantly, relieve the pressure on your data center.

How Burst to Cloud works

Before you get started, use WXM to perform workload analysis and create workload views that help you categorize workloads around specific users and applications. After you have created these workload views, you can initiate the Burst to Cloud functionality.

The Burst to Cloud functionality comprises three key elements. First, it helps identify the cloud readiness of a workload. Next, it generates a replication plan based on deeper analysis of the identified workload, along with a tailor-made capacity plan that ensures the workload runs optimally. Last, it moves the workload to the cloud. Let’s take a deeper look at these elements.

Automatic workload identification

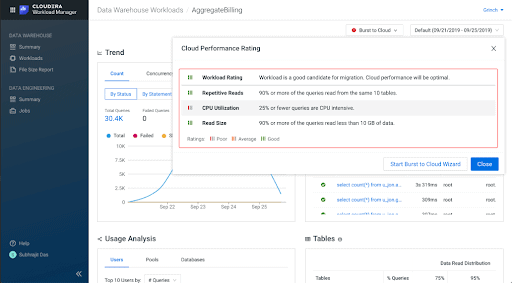

Burst to Cloud begins with automatic workload identification, which saves you weeks you might otherwise spend analyzing workloads for their cloud-readiness. This addresses both scenarios described above because both scenarios depict IT departments struggling to meet demand with available resources. The last thing you need is another 1-3 month analysis project. Burst to Cloud uses an intelligent scoring mechanism to automatically identify “cloud-ready” workloads for you. This intelligent scoring mechanism considers factors like CPU utilization, I/O or network utilization, data size, data read/data access frequency, and job or query categorization. Without Burst to Cloud’s unique functionality, the large number of factors that must be considered when identifying cloud-ready workloads makes it nearly impossible for you to know if a particular workload is a good candidate.
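To make the idea of multi-factor scoring concrete, here is a minimal Python sketch of a weighted cloud-readiness score built from the factors listed above. It is not WXM’s scoring mechanism, which this post does not describe: the `WorkloadProfile` fields, the normalization of each factor to the range 0 to 1, the weights, and the direction of each factor (for example, treating heavy I/O and large data size as less cloud-ready) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); not WXM's actual values.
WEIGHTS = {
    "cpu": 0.3,
    "io": 0.2,
    "data_size": 0.2,
    "access_frequency": 0.1,
    "category_fit": 0.2,
}


@dataclass
class WorkloadProfile:
    """Hypothetical workload metrics, each normalized to the range [0, 1]."""
    cpu_utilization: float   # share of on-premises CPU the workload consumes
    io_utilization: float    # I/O or network utilization
    data_size: float         # data volume relative to the largest candidate
    access_frequency: float  # how often the workload reads its data
    category_fit: float      # assumed fit of the job/query category for the cloud


def cloud_readiness_score(p: WorkloadProfile) -> float:
    """Return an illustrative cloud-readiness score between 0 and 100."""
    for name, value in vars(p).items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be normalized to [0, 1], got {value}")

    # Map each factor so that a higher value means "more cloud-ready".
    # The directions below are assumptions made for this sketch.
    factors = {
        "cpu": p.cpu_utilization,                # offloading heavy compute frees the most capacity
        "io": 1.0 - p.io_utilization,            # heavy I/O or network traffic is costly to move
        "data_size": 1.0 - p.data_size,          # smaller data sets are cheaper to replicate
        "access_frequency": p.access_frequency,  # frequently read data justifies a cloud copy
        "category_fit": p.category_fit,
    }
    weighted = sum(WEIGHTS[k] * v for k, v in factors.items())
    return round(100.0 * weighted, 1)


# Usage: a compute-heavy workload over a modest amount of data.
profile = WorkloadProfile(
    cpu_utilization=0.9,
    io_utilization=0.2,
    data_size=0.1,
    access_frequency=0.6,
    category_fit=0.8,
)
print(cloud_readiness_score(profile))
```

Even in this toy version, changing one weight or the direction of one factor can reorder which workloads look like good candidates, which illustrates why weighing many factors by hand is slow and error-prone.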

The image above shows how WXM rates a workload on cloud performance.

Because cloud-ready workloads can be identified automatically with the click of a button, you can quickly deliver requested infrastructure and resources to your business users. When their needs are met quickly, they have less reason to resort to shadow IT projects. If a workload has a low cloud-readiness score, this feature also provides recommendations to improve workload performance on your cluster.

Automatic creation of capacity plans and replication policies

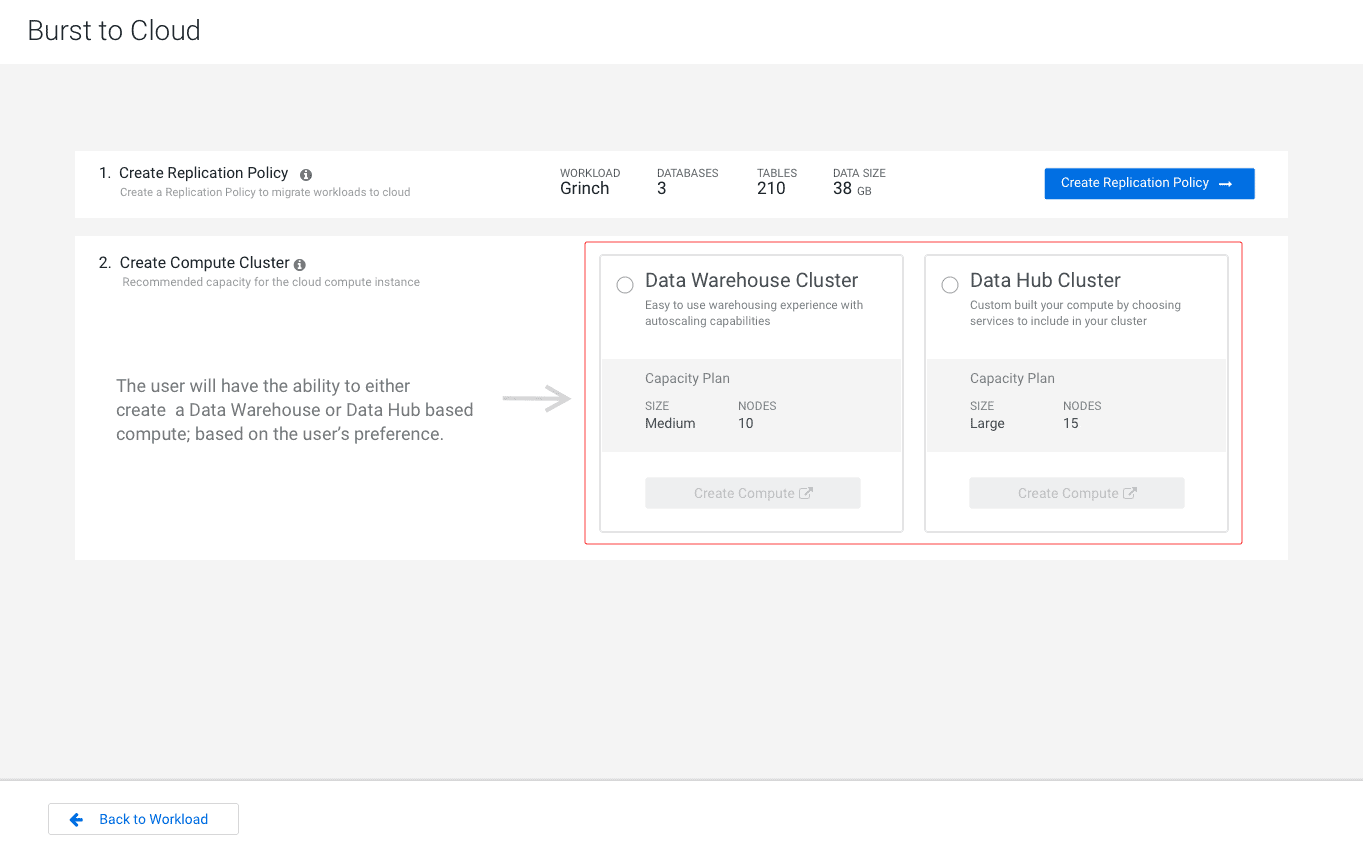

After WXM identifies cloud-ready workloads, it automatically generates a custom capacity plan and a replication policy, with your input.

Even before you create a replication policy, you get high-level insight into how many databases and tables this particular workload accesses and its total data size. This step gives the IT or platform administrator at the telco or the bank a feel for how heavy or light the replication is going to be before getting too deep into the process. The replication policy outlines how the workload data is moved from the on-premises cluster to the Database Catalog in CDP Data Warehouse.
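The high-level view of databases, tables, and total data size boils down to a de-duplicated summary of the tables a workload touches. A minimal sketch, assuming a hypothetical list of `TableAccess` records extracted from the workload’s query history; `TableAccess` and `replication_footprint` are illustrative names, not a WXM or CDP API:

```python
from typing import Dict, Iterable, NamedTuple, Tuple


class TableAccess(NamedTuple):
    """Hypothetical record: one table read by a query in the workload view."""
    database: str
    table: str
    size_bytes: int


def replication_footprint(accesses: Iterable[TableAccess]) -> Dict[str, int]:
    """Summarize how many databases and tables a workload touches, and their total size.

    A table read by many queries is counted (and sized) only once, because it
    only needs to be replicated once. If a table appears with different sizes,
    the most recently seen size is kept.
    """
    sizes: Dict[Tuple[str, str], int] = {}
    for a in accesses:
        sizes[(a.database, a.table)] = a.size_bytes
    databases = {db for db, _ in sizes}
    return {
        "databases": len(databases),
        "tables": len(sizes),
        "total_bytes": sum(sizes.values()),
    }


# Usage: "sales.orders" is read twice but counted once.
GIB = 1024**3
log = [
    TableAccess("sales", "orders", 120 * GIB),
    TableAccess("sales", "orders", 120 * GIB),
    TableAccess("sales", "customers", 8 * GIB),
    TableAccess("risk", "positions", 40 * GIB),
]
print(replication_footprint(log))
```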

The Create Compute Cluster section provides the capacity plan, which identifies the cloud resources needed to optimally run the cloud-ready workload on a Virtual Warehouse in the CDP Data Warehouse service. Depending on your preferences, you can create either Data Warehouse or Data Hub-based compute. The ability to choose which service (Data Warehouse or Data Hub) this workload is burst into is a game changer: no one else offers this flexibility.

The Create Replication Policy option takes you to the replication policy wizard, which walks you through the specifics of creating a replication policy, including the source of the identified VIP workload and the target destination in CDP cloud. This gives the administrator the flexibility to establish best practices for bursting specific types of workloads to specific destinations. These best practices can be based on the priority of the workload, the LoB that owns it, or even the type of workload itself. For example, the bank described earlier might have separate destination data lakes for its perpetual and periodic workloads so that it can manage these VIP workloads separately. The following image steps you through replication policy creation:

After you have selected the source and the destination for the workload, you can schedule the replication as either a one-time or a repeated run. Returning to the telco from earlier, they might schedule multiple bursts as one-time runs to ease the immediate pressure on their systems. This flexibility allows central IT to better support business needs while staying within budget and keeping shadow IT projects in check. At the bank, the perpetual and periodic VIP workloads can each be put on their own replication schedule, which lets the bank cater to the needs of those specific LoBs and ensure they are successful. A unique feature of replication policy creation with Burst to Cloud is that you can include your existing security permissions, and they are translated to the cloud automatically.
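The difference between a one-time burst and a repeated replication run can be sketched in a few lines. `ReplicationSchedule` and `runs_between` are hypothetical names used only for illustration; they are not part of the CDP or Replication Manager API, and the sketch only computes when runs would occur within a given time window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class ReplicationSchedule:
    """Hypothetical replication schedule: one-time if interval is None, else repeating."""
    start: datetime
    interval: Optional[timedelta] = None

    def runs_between(self, window_start: datetime, window_end: datetime) -> List[datetime]:
        """Return the planned run times that fall in [window_start, window_end)."""
        if self.interval is None:
            # One-time run: either it falls inside the window or it does not.
            return [self.start] if window_start <= self.start < window_end else []
        if self.interval <= timedelta(0):
            raise ValueError("interval must be positive")

        t = self.start
        if t < window_start:
            # Jump to the first run at or after window_start (ceiling division).
            steps = -(-(window_start - t) // self.interval)
            t += steps * self.interval

        runs = []
        while t < window_end:
            runs.append(t)
            t += self.interval
        return runs


# Usage: a telco-style one-time burst versus a daily repeating replication.
one_time = ReplicationSchedule(start=datetime(2024, 3, 28, 22, 0))
daily = ReplicationSchedule(start=datetime(2024, 3, 1, 2, 0), interval=timedelta(days=1))

week = (datetime(2024, 3, 25), datetime(2024, 4, 1))
print(one_time.runs_between(*week))
print(len(daily.runs_between(*week)))
```

Modeling a one-time run as a schedule with no interval keeps both cases behind one method, so the telco’s ad hoc bursts and the bank’s recurring replications could be planned with the same code.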

Once replication is complete, you can log into your chosen service in CDP to access your data and run your workloads. Alternatively, you can also spin up a different compute cluster and access the data by using CDP’s Shared Data Experience. This feature ensures workloads remain in context with all common data, including metadata management, data governance, and security policies.

How Burst to Cloud can solve your data center pressure

So is bursting to cloud with CDP the right option for you? More than likely it is. Let’s take a look at another customer scenario to provide some additional perspective.

Another tense scenario at an international pharma

An international pharma manages an infrastructure that supports more than 1,500 users across 1,400 hosts, with more than 40 petabytes of data handled across their clusters. One cluster alone contains about 800 nodes. For their central IT organization, simply monitoring their systems, let alone effectively managing them, seems like an impossible task.

This pharma has multiple critical LoB users who need to analyze terabytes of data to predict trends and perform the complex pattern matching that makes medicinal drug breakthroughs possible. For example, they actively work on discovering better drug options and reducing side effects for patients who use their drugs. These are VIP users who perpetually need data center resources so they can perform mission-critical work for the organization on a routine basis. Another set of VIP users belongs to the CFO group. These users periodically run business growth reports, revenue forecasts, and financial reports for quarterly earnings calls. The two groups are at odds with each other: when the earnings-call users run their reports, they consume resources that the LoB users need for their predictive analyses. Although the earnings call reports run only quarterly, while they are running they can stop everyone else from using the data warehouse, which is an impossible situation.

With such a large and complex landscape, there is always the risk that someone somewhere in the organization runs an ad hoc workload that affects the performance of these VIP workloads. There is even a credible risk that the VIP workloads might fail due to resource contention or, worse, because the workflow or data pipeline breaks down. Because of the sensitivity of the analytics and reports being generated, when they run is as crucial to meeting their SLAs as how long they take to run. Today, this organization suffers from irregular execution times, report failures due to out-of-memory (OOM) errors, and long query response times. With their current toolset, they cannot preemptively stop these issues from happening to ensure their VIP users and workloads are protected. Their data center is continuously under extreme pressure.

The Burst to Cloud feature in CDP gives this pharma the ability to protect their VIP workloads without stopping everyone else from using the data center. Their business needs are supported, and their most mission-critical SLAs can be met with confidence. First, they use WXM to create a custom workload view for each critical LoB user who can be tagged as a perpetual VIP (VIP workloads that run routinely). Then they create workload views for the earnings report power users, who can be tagged as periodic VIPs (VIP workloads that run only at certain times, such as the end or beginning of the quarter). After these workload views are created, WXM automatically analyzes the workloads and assigns each workload view a “cloud friendliness” score, which tells the pharma which workloads can be optimally moved to the cloud. The workloads of the critical LoB perpetual users can be burst to the cloud and stay there, protected and isolated from all the activity on the on-premises data warehouse, until their VIP status expires. The earnings report power users, the periodic VIPs, can continue to work in the on-premises data warehouse; at the beginning or end of every quarter, their workloads are burst to a cloud environment sized to ensure their mission-critical workloads never miss their SLAs. The best part is that bursting these periodic VIP workloads can be put on a custom-defined schedule that automatically protects these users every time the schedule is triggered.
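A custom-defined schedule for periodic VIPs can be as simple as a set of date windows around each quarter end. The sketch below assumes calendar (not fiscal) quarters and a hypothetical window of a few days on either side of each quarter end; `periodic_vip_windows` and `is_vip_active` are illustrative names, not CDP or WXM functions.

```python
from datetime import date, timedelta
from typing import List, Tuple

Window = Tuple[date, date]


def quarter_end_dates(year: int) -> List[date]:
    """Last calendar day of each quarter (assumes calendar, not fiscal, quarters)."""
    ends = []
    for month in (3, 6, 9, 12):
        if month == 12:
            ends.append(date(year, 12, 31))
        else:
            # The day before the first day of the next quarter.
            ends.append(date(year, month + 1, 1) - timedelta(days=1))
    return ends


def periodic_vip_windows(year: int, days_before: int = 5, days_after: int = 5) -> List[Window]:
    """Hypothetical burst windows (inclusive) straddling each quarter end."""
    return [
        (end - timedelta(days=days_before), end + timedelta(days=days_after))
        for end in quarter_end_dates(year)
    ]


def is_vip_active(day: date, windows: List[Window]) -> bool:
    """True if the periodic VIP workloads should be burst to the cloud on this day."""
    return any(start <= day <= stop for start, stop in windows)


# Usage: check two dates against the 2024 windows.
windows = periodic_vip_windows(2024)
print(windows[0])
print(is_vip_active(date(2024, 4, 3), windows))
print(is_vip_active(date(2024, 5, 15), windows))
```

Because the Q4 window extends into early January of the following year, a scheduler built this way would need to combine the windows for the current and previous years; for fiscal quarters, only `quarter_end_dates` would need to change.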

CDP’s Burst to Cloud feature sets this pharma up for success by ensuring the smooth running of all their mission-critical workloads without affecting the rest of the business.

Conclusion

There are many scenarios where your business can benefit greatly from bursting workloads to the cloud with CDP. You might have a highly seasonal business, like tax advisors. You might have heavy seasonal spikes in your business, like retailers that need to offset the extra burden caused by peak demand. Alternatively, your organization might be distributed across geographies, as is typical in manufacturing, where peak demand per region might be cyclic. Or your data center may be under continuous pressure that is systemic to the nature of your business, as with data aggregators or healthcare organizations.

These underlying needs are common across all these scenarios:

These are all driving factors that make the ability to Burst to Cloud with CDP essential to your business’s success!