Having a good grasp of HDFS recovery processes is important when running or moving toward production-ready Apache Hadoop.

An important design requirement of HDFS is to ensure continuous and correct operations to support production deployments. One particularly complex area is ensuring correctness of writes to HDFS in the presence of network and node failures, where the lease recovery, block recovery, and pipeline recovery processes come into play. Understanding when and why these recovery processes are called, along with what they do, can help users as well as developers understand the machinations of their HDFS cluster.

In this blog post, you will get an in-depth examination of these recovery processes. We’ll start with a quick introduction to the HDFS write pipeline and these recovery processes, explain the important concepts of block/replica states and generation stamps, then step through each recovery process. Finally, we’ll conclude by listing several relevant issues, both resolved and open.

This post is divided into two parts: Part 1 examines the details of lease recovery and block recovery, and Part 2 examines the details of pipeline recovery. Readers interested in implementation details should refer to the design specification: Append, Hflush, and Read.

Background

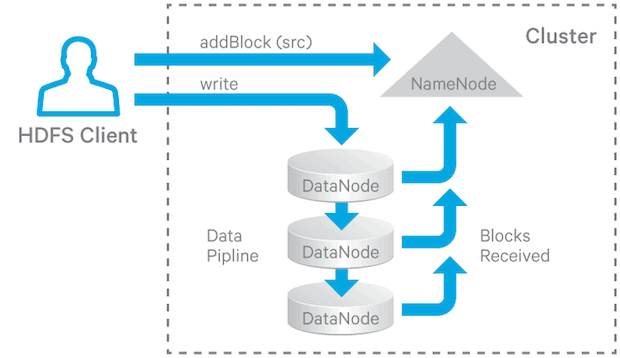

In HDFS, files are divided into blocks, and file access follows multi-reader, single-writer semantics. To meet the fault-tolerance requirement, multiple replicas of a block are stored on different DataNodes. The number of replicas is called the replication factor. When a new file block is created, or an existing file is opened for append, the HDFS write operation creates a pipeline of DataNodes to receive and store the replicas. (The replication factor generally determines the number of DataNodes in the pipeline.) Subsequent writes to that block go through the pipeline (Figure 1).

Figure 1. HDFS Write Pipeline

For read operations the client chooses one of the DataNodes holding copies of the block and requests a data transfer from it.

Below are two application scenarios highlighting the need for the fault-tolerance design requirement:

- HBase’s Region Server (RS) writes to its WAL (Write Ahead Log), which is an HDFS file that helps to prevent data loss. If an RS goes down, a new one will be started and it will reconstruct the state of the predecessor RS by reading the WAL file. If the write pipeline was not finished when the RS died, then different DataNodes in the pipeline may not be in sync. HDFS must ensure that all of the necessary data is read from the WAL file to reconstruct the correct RS state.

- When a Flume client is streaming data to an HDFS file, it must be able to write continuously, even if some DataNodes in the pipeline fail or stop responding.

Lease recovery, block recovery, and pipeline recovery come into play in this type of situation:

- Before a client can write an HDFS file, it must obtain a lease, which is essentially a lock. This ensures the single-writer semantics. The lease must be renewed within a predefined period of time if the client wishes to keep writing. If a lease is not explicitly renewed or the client holding it dies, then it will expire. When this happens, HDFS will close the file and release the lease on behalf of the client so that other clients can write to the file. This process is called lease recovery.

- If the last block of the file being written is not propagated to all DataNodes in the pipeline, then the amount of data written to different nodes may be different when lease recovery happens. Before lease recovery causes the file to be closed, it’s necessary to ensure that all replicas of the last block have the same length; this process is known as block recovery. Block recovery is only triggered during the lease recovery process, and lease recovery only triggers block recovery on the last block of a file if that block is not in COMPLETE state (defined in a later section).

- During write pipeline operations, some DataNodes in the pipeline may fail. When this happens, the underlying write operations can’t just fail. Instead, HDFS will try to recover from the error to allow the pipeline to keep going and the client to continue to write to the file. The mechanism to recover from the pipeline error is called pipeline recovery.

The following sections explain these processes in more detail.

Blocks, Replicas, and Their States

To differentiate between blocks in the context of the NameNode and blocks in the context of the DataNode, we will refer to the former as blocks, and the latter as replicas.

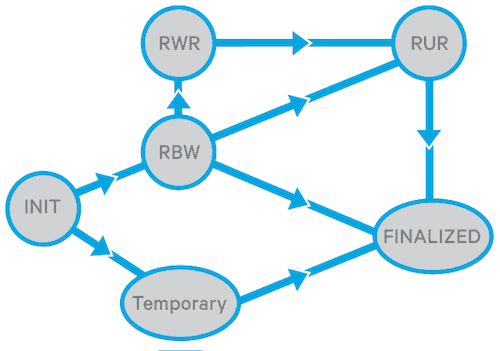

A replica in the DataNode context can be in one of the following states (see enum ReplicaState in org.apache.hadoop.hdfs.server.common.HdfsServerConstants.java):

- FINALIZED: when a replica is in this state, writing to the replica is finished and the data in the replica is “frozen” (the length is finalized), unless the replica is re-opened for append. All finalized replicas of a block with the same generation stamp (referred to as the GS and defined below) should have the same data. The GS of a finalized replica may be incremented as a result of recovery.

- RBW (Replica Being Written): this is the state of any replica that is being written, whether the file was created for write or re-opened for append. An RBW replica is always the last block of an open file. The data is still being written to the replica and it is not yet finalized. The data (not necessarily all of it) of an RBW replica is visible to reader clients. If any failures occur, an attempt will be made to preserve the data in an RBW replica.

- RWR (Replica Waiting to be Recovered): If a DataNode dies and restarts, all its RBW replicas will be changed to the RWR state. An RWR replica will either become outdated and therefore discarded, or will participate in lease recovery.

- RUR (Replica Under Recovery): A non-TEMPORARY replica will be changed to the RUR state when it is participating in lease recovery.

- TEMPORARY: a temporary replica is created for the purpose of block replication (either by replication monitor or cluster balancer). It’s similar to an RBW replica, except that its data is invisible to all reader clients. If the block replication fails, a TEMPORARY replica will be deleted.
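
The transitions described in this list can be summarized as a small lookup table. The Python sketch below is a toy model, not the DataNode implementation: the event names (`finalize`, `datanode_restart`, `start_recovery`, and so on) are hypothetical labels for the situations described above, and only transitions mentioned in the text are encoded.

```python
from enum import Enum


class ReplicaState(Enum):
    FINALIZED = "FINALIZED"
    RBW = "RBW"
    RWR = "RWR"
    RUR = "RUR"
    TEMPORARY = "TEMPORARY"


# Hypothetical event names; each entry mirrors one statement in the list above.
TRANSITIONS = {
    (ReplicaState.RBW, "finalize"): ReplicaState.FINALIZED,
    (ReplicaState.FINALIZED, "reopen_for_append"): ReplicaState.RBW,
    (ReplicaState.RBW, "datanode_restart"): ReplicaState.RWR,
    # Any non-TEMPORARY replica becomes RUR when it joins lease recovery.
    (ReplicaState.FINALIZED, "start_recovery"): ReplicaState.RUR,
    (ReplicaState.RBW, "start_recovery"): ReplicaState.RUR,
    (ReplicaState.RWR, "start_recovery"): ReplicaState.RUR,
}


def next_state(state, event):
    """Return the replica state after `event`, or None if the replica is deleted."""
    if state is ReplicaState.TEMPORARY and event == "replication_failed":
        return None  # a TEMPORARY replica is deleted when replication fails
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"no transition modelled for {state.name} on {event!r}")
```

For example, `next_state(ReplicaState.RBW, "datanode_restart")` yields `ReplicaState.RWR`, while asking a TEMPORARY replica to `start_recovery` raises `ValueError`, because TEMPORARY replicas do not take part in recovery.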

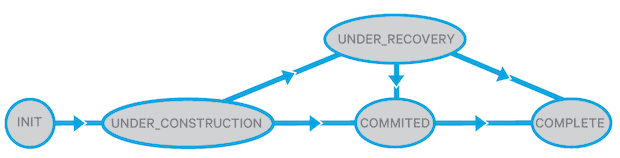

A block in the NameNode context may be in one of the following states (see enum BlockUCState in org.apache.hadoop.hdfs.server.common.HdfsServerConstants.java):

- UNDER_CONSTRUCTION: this is the state of a block that is being written to. An UNDER_CONSTRUCTION block is the last block of an open file; its length and generation stamp are still mutable, and its data (not necessarily all of it) is visible to readers. An UNDER_CONSTRUCTION block in the NameNode keeps track of the write pipeline (the locations of valid RBW replicas), and the locations of its RWR replicas.

- UNDER_RECOVERY: If the last block of a file is in UNDER_CONSTRUCTION state when the corresponding client’s lease expires, then it will be changed to UNDER_RECOVERY state when block recovery starts.

- COMMITTED: COMMITTED means that a block’s data and generation stamp are no longer mutable (unless it is reopened for append), and there are fewer than the minimal-replication number of DataNodes that have reported FINALIZED replicas of the same GS/length. In order to service read requests, a COMMITTED block must keep track of the locations of RBW replicas, and of the GS and the length of its FINALIZED replicas. An UNDER_CONSTRUCTION block is changed to COMMITTED when the NameNode is asked by the client to add a new block to the file, or to close the file. If the last or the second-to-last block is in COMMITTED state, the file cannot be closed and the client has to retry.

- COMPLETE: A COMMITTED block changes to COMPLETE when the NameNode has seen the minimum replication number of FINALIZED replicas of matching GS/length. A file can be closed only when all its blocks become COMPLETE. A block may be forced to the COMPLETE state even if it doesn’t have the minimal replication number of replicas, for example, when a client asks for a new block, and the previous block is not yet COMPLETE.
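
The COMMITTED-to-COMPLETE rule can be illustrated with a short Python sketch. It is a simplified model built only from the descriptions above: the function names and the `min_replication` parameter are hypothetical, and the forced-COMPLETE case is left out.

```python
from enum import Enum


class BlockUCState(Enum):
    UNDER_CONSTRUCTION = 1
    UNDER_RECOVERY = 2
    COMMITTED = 3
    COMPLETE = 4


def commit_block(state):
    """The client asks the NameNode for a new block or asks to close the file."""
    if state is BlockUCState.UNDER_CONSTRUCTION:
        return BlockUCState.COMMITTED
    return state


def on_replica_report(state, block_gs, block_len, finalized_replicas,
                      min_replication=1):
    """Promote COMMITTED to COMPLETE once enough matching replicas are reported.

    finalized_replicas is a list of (gs, length) tuples, one per DataNode
    that has reported a FINALIZED replica of this block.
    """
    if state is not BlockUCState.COMMITTED:
        return state
    matching = sum(1 for gs, length in finalized_replicas
                   if gs == block_gs and length == block_len)
    return BlockUCState.COMPLETE if matching >= min_replication else state


def can_close(block_states):
    """A file can be closed only when all of its blocks are COMPLETE."""
    return all(s is BlockUCState.COMPLETE for s in block_states)
```

In this model a replica with an older GS or a different length never counts toward the minimum, which is why a file with a COMMITTED last block cannot yet be closed.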

DataNodes persist a replica’s state to disk, but the NameNode doesn’t persist the block state to disk. When the NameNode restarts, it changes the state of the last block of any previously open file to the UNDER_CONSTRUCTION state, and the state of all the other blocks to COMPLETE.

Simplified state transition diagrams of replica and block are shown in Figures 2 and 3. See the design document for more detailed ones.

Figure 2. Simplified Replica State Transition

Figure 3. Simplified Block State Transition

Generation Stamp

A GS is a monotonically increasing 8-byte number for each block that is maintained persistently by the NameNode. The GS for a block and replica (Design Specification: HDFS Append and Truncates) is introduced for the following purposes:

- Detecting stale replica(s) of a block: that is, when the replica GS is older than the block GS, which might happen when, for example, an append operation is somehow skipped at the replica.

- Detecting outdated replica(s) on DataNodes that have been dead for a long time and then rejoin the cluster.

A new GS is needed when any of the following occur:

- A new file is created

- A client opens an existing file for append or truncate

- A client encounters an error while writing data to the DataNode(s) and requests a new GS

- The NameNode initiates lease recovery for a file
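
As an illustration of how a GS exposes a stale replica, consider the following Python sketch. It is a toy model: the DataNode names and GS values are made up, and the GS is treated as a plain integer that is bumped by one, which glosses over how the NameNode actually allocates stamps.

```python
def stale_replicas(block_gs, replica_gs_by_node):
    """Return the DataNodes whose replica GS is older than the block's GS."""
    return sorted(node for node, gs in replica_gs_by_node.items()
                  if gs < block_gs)


def simulate_pipeline_error():
    """A client hits an error on dn3 and requests a new GS for the block."""
    block_gs = 1001
    replicas = {"dn1": 1001, "dn2": 1001, "dn3": 1001}

    # The new GS is applied only to the replicas that remain in the pipeline.
    block_gs += 1
    for node in ("dn1", "dn2"):
        replicas[node] = block_gs

    # When dn3 rejoins, its replica still carries the old GS.
    return stale_replicas(block_gs, replicas)
```

Here `simulate_pipeline_error()` returns `['dn3']`: the replica that missed the GS update is the one identified as stale.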

Lease Recovery and Block Recovery

Lease Manager

The leases are managed by the lease manager at the NameNode. The NameNode tracks the files each client has open for write. It is not necessary for a client to enumerate each file it has opened for write when renewing leases. Instead, it periodically sends a single request to the NameNode to renew all of them at once. (The request is an org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos.RenewLeaseRequestProto message, which is part of the RPC protocol between the HDFS client and the NameNode.)

Each NameNode manages a single HDFS namespace, each of which has a single lease manager to manage all the client leases associated with that namespace. Federated HDFS clusters may have multiple namespaces, each with its own lease manager.

The lease manager maintains a soft limit (1 minute) and hard limit (1 hour) for the expiration time (these limits are currently non-configurable), and all leases maintained by the lease manager abide by the same soft and hard limits. Before the soft limit expires, the client holding the lease of a file has exclusive write access to the file. If the soft limit expires and the client has not renewed the lease or closed the file (the lease of a file is released when the file is closed), another client can forcibly take over the lease. If the hard limit expires and the client has not renewed the lease, HDFS assumes that the client has quit and will automatically close the file on behalf of the client, thereby recovering the lease.
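
The two limits can be expressed as a simple classification of a lease by its age. The Python sketch below only restates the rule from the paragraph above; the function name and the status strings are hypothetical.

```python
SOFT_LIMIT_S = 60        # soft limit: 1 minute
HARD_LIMIT_S = 60 * 60   # hard limit: 1 hour


def lease_status(last_renewal_s, now_s):
    """Classify a lease by the time elapsed since its last renewal (seconds)."""
    age = now_s - last_renewal_s
    if age > HARD_LIMIT_S:
        # HDFS assumes the client has quit and closes the file on its behalf.
        return "hard-expired"
    if age > SOFT_LIMIT_S:
        # Another client can forcibly take over the lease.
        return "soft-expired"
    # The holder still has exclusive write access.
    return "active"
```

For instance, a lease last renewed 61 seconds ago is `"soft-expired"`, and one last renewed more than an hour ago is `"hard-expired"`.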

The fact that the lease of a file is held by one client does not prevent other clients from reading the file, and a file may have many concurrent readers, even while a client is writing to it.

Operations that the lease manager supports include:

- Adding a lease for a client and path (if the client already has a lease, it adds the path to the lease, otherwise, it creates a new lease and adds the path to the lease)

- Removing a lease for a client and path (if it’s the last path in the lease, it removes the lease)

- Checking whether the soft and/or hard limits have expired, and

- Renewing the lease for a given client.

The lease manager has a monitor thread that periodically (currently every 2 seconds) checks whether any lease has an expired hard limit, and if so, it will trigger the lease recovery process for the files in these leases.
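
A minimal in-memory model of these operations might look like the Python sketch below. It is an illustration under simplifying assumptions, not the NameNode's lease manager: the class layout and method names are hypothetical, time is passed in explicitly instead of being read from a clock, and the monitor is reduced to a single `check_leases` pass.

```python
class LeaseManager:
    """Toy lease manager: one lease per client, covering all paths it has open."""

    def __init__(self, hard_limit_s=3600):
        self.hard_limit_s = hard_limit_s
        self.leases = {}  # holder -> {"paths": set of str, "last_renewal": number}

    def add_lease(self, holder, path, now):
        # Reuse the client's existing lease if there is one, else create it.
        lease = self.leases.setdefault(
            holder, {"paths": set(), "last_renewal": now})
        lease["paths"].add(path)
        lease["last_renewal"] = now  # assumption: adding a path also renews

    def remove_lease(self, holder, path):
        lease = self.leases.get(holder)
        if lease is None:
            return
        lease["paths"].discard(path)
        if not lease["paths"]:
            del self.leases[holder]  # last path gone, so drop the lease

    def renew(self, holder, now):
        # One call renews the lease for every path the client holds.
        if holder in self.leases:
            self.leases[holder]["last_renewal"] = now

    def check_leases(self, now):
        """One monitor pass: return the paths whose lease hit the hard limit."""
        expired = []
        for lease in self.leases.values():
            if now - lease["last_renewal"] > self.hard_limit_s:
                expired.extend(sorted(lease["paths"]))
        return expired
```

In this model, a client that stops renewing has all of its open paths returned by `check_leases` once the hard limit passes, which is the point at which lease recovery would be triggered for each of those files.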

An HDFS client renews its leases via the org.apache.hadoop.hdfs.LeaseRenewer.LeaseRenewer class which maintains a list of users and runs one thread per user per NameNode. This thread periodically checks in with the NameNode and renews all of the client’s leases when the lease period is half over.

(Note: An HDFS client is associated with only one NameNode; see the constructors of org.apache.hadoop.hdfs.DFSClient. If the same application wants to access different files managed by different NameNodes in a federated cluster, then one client needs to be created for each NameNode.)

Lease Recovery Process

The lease recovery process is triggered on the NameNode to recover leases for a given client, either by the monitor thread upon hard limit expiry, or when a client tries to take over lease from another client when the soft limit expires. It checks each file open for write by the same client, performs block recovery for the file if the last block of the file is not in COMPLETE state, and closes the file. Block recovery of a file is only triggered when recovering the lease of a file.

Below is the lease recovery algorithm for given file f. When a client dies, the same algorithm is applied to each file the client opened for write.

1. Get the DataNodes that contain the last block of f.

2. Assign one of the DataNodes as the primary DataNode p.

3. p obtains a new generation stamp from the NameNode.

4. p gets the block info from each DataNode.

5. p computes the minimum block length.

6. p updates the DataNodes that have a valid generation stamp with the new generation stamp and the minimum block length.

7. p reports the update results to the NameNode.

8. The NameNode updates the BlockInfo.

9. The NameNode removes f’s lease (another writer can now obtain the lease for writing to f).

10. The NameNode commits the changes to the edit log.

Steps 3 through 7 are the block recovery parts of the algorithm. If a file needs block recovery, the NameNode picks a primary DataNode that has a replica of the last block of the file, and tells this DataNode to coordinate the block recovery work with other DataNodes. That DataNode reports back to the NameNode when it is done. The NameNode then updates its internal state of this block, removes the lease, and commits the change to edit log.
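
The block recovery part of the algorithm can be sketched in a few lines of Python. This is a simplified illustration of the steps as listed, not the DataNode code: the `recover_block` function and the replica dictionaries are hypothetical, and a replica is assumed to have a "valid" generation stamp when its GS matches the block's current GS.

```python
def recover_block(replicas, block_gs, new_gs):
    """Synchronize the replicas of a file's last block.

    replicas maps a DataNode name to {"gs": int, "length": int}.
    Returns (recovered_length, updated_nodes); the result is what the
    primary DataNode would report back to the NameNode.
    """
    # Assumption: only replicas carrying the block's current GS take part.
    valid = {node: r for node, r in replicas.items() if r["gs"] == block_gs}
    if not valid:
        raise RuntimeError("no replica with a valid generation stamp")

    # Compute the minimum length across the participating replicas.
    min_len = min(r["length"] for r in valid.values())

    # Stamp each participating replica with the new GS and the agreed length.
    for r in valid.values():
        r["gs"] = new_gs
        r["length"] = min_len

    return min_len, sorted(valid)
```

With replicas of length 120 and 100 at the current GS and a third replica at an older GS, the two current replicas end up at length 100 with the new GS, and the older replica is left untouched so that it can later be detected as stale.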

Sometimes an administrator needs to recover the lease of a file forcibly before the hard limit expires. A CLI debug command is available (starting from Hadoop release 2.7 and CDH 5.3) for this purpose:

hdfs debug recoverLease [-path <path>] [-retries <num-retries>]

Conclusion

Lease recovery, block recovery, and pipeline recovery are essential to HDFS fault-tolerance. Together, they ensure that writes are durable and consistent in HDFS, even in the presence of network and node failures.

You should now have a better understanding of when and why lease recovery and block recovery are invoked, and what they do. In Part 2, we’ll explore pipeline recovery.

Yongjun Zhang is a Software Engineer at Cloudera.
