In October 2020, Cloudera acquired a company called Eventador, primarily to augment our streaming capabilities within Cloudera DataFlow. Eventador was adept at simplifying the process of building streaming applications. Its flagship product, SQL Stream Builder, made real-time data streams accessible with just SQL (Structured Query Language). Cloudera’s customers were struggling with the same challenge: querying high volumes of real-time data streams with something as simple as SQL.

Today, within five months of acquiring Eventador, we are very happy to announce that SQL Stream Builder is being relaunched as Cloudera SQL Stream Builder, now fully integrated with Cloudera Data Platform’s (CDP) Shared Data Experience (SDX). This means that SQL Stream Builder can take advantage of the same unified security and governance as the rest of the platform.

What is SQL Stream Builder?

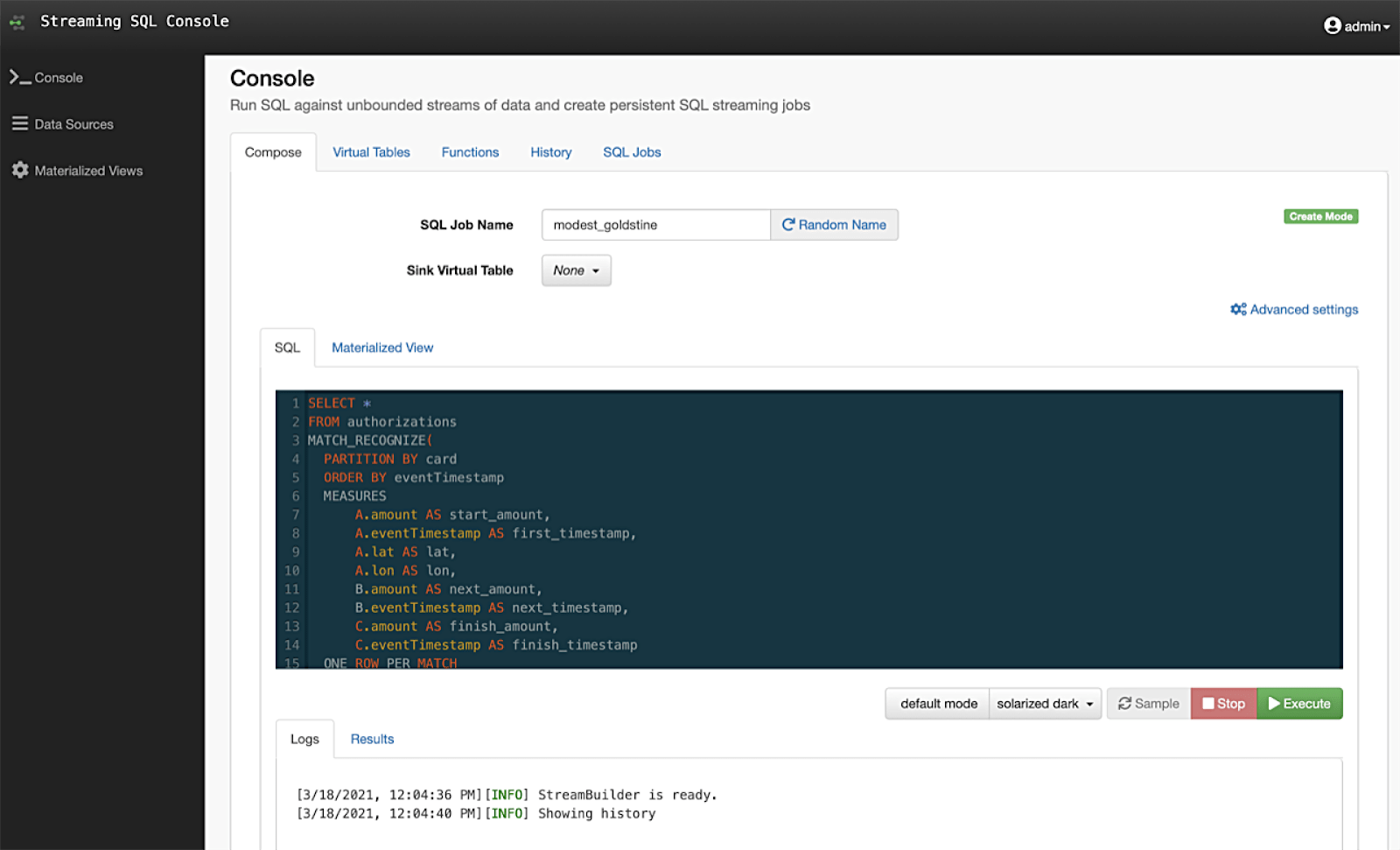

Cloudera SQL Stream Builder now augments the powerful stream processing capabilities of the Cloudera DataFlow (CDF) streaming platform. It offers a slick user interface for writing SQL queries that run against real-time data streams in Apache Kafka or Apache Flink. This enables developers, data analysts and data scientists to write streaming applications using just SQL. They no longer have to depend on skilled Java or Scala developers to write special programs just to gain access to such data streams.

SQL Stream Builder continuously runs SQL via Flink. Its simple, intuitive user interface offers syntax checking, error reporting, schema detection, query creation, result sampling and output creation. It also provides an advanced materialized view engine that makes live aggregated datasets accessible to other applications via a simple REST API.

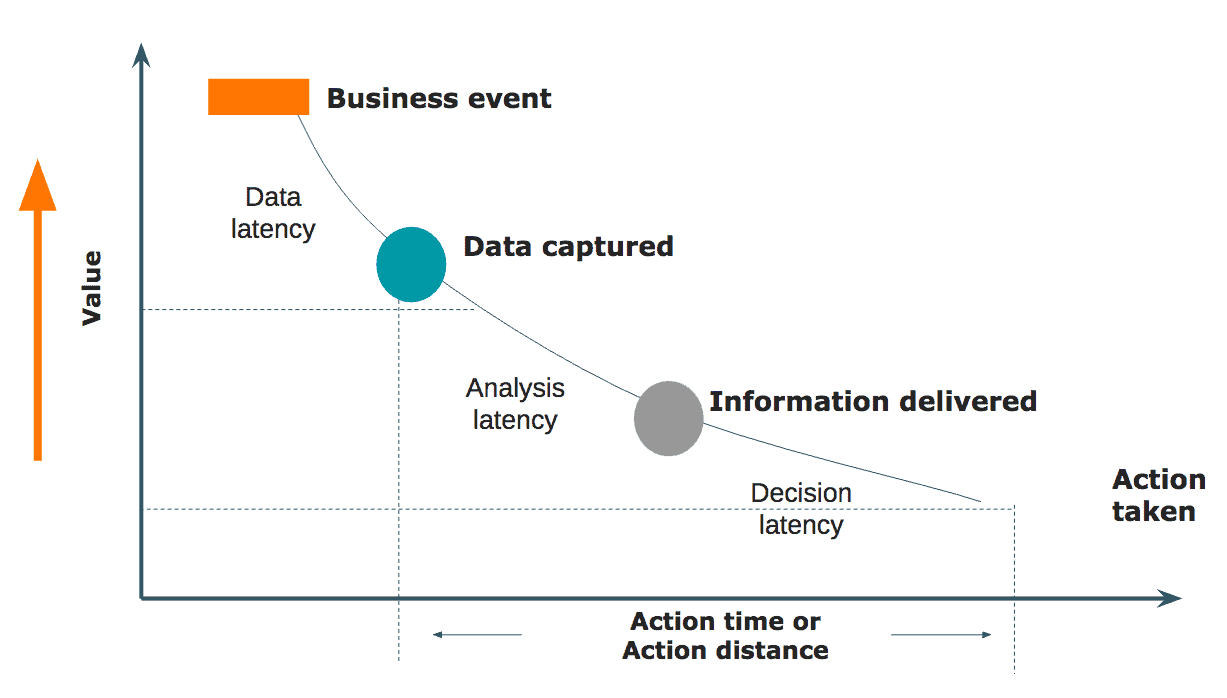

Data decays

Yes, data has a shelf life. In today’s business environments, the data you receive must be processed immediately so that you can understand its business impact and act upon it. A streaming analytics solution is no good if it can ingest all the data in real time but cannot help you harness what that data means to you. Imagine a manufacturer receiving streams of data with millions of messages a day from a dozen or more manufacturing plants. If they need to understand where a specific surge is coming from, or if they need to detect a specific anomaly in the streams, they should be able to query the streams in real time. They cannot afford to send everything into a data store and then analyze it the next day to find such actionable insights. The data is of no value the next day for this purpose. Yet the ability to execute such real-time queries typically rests with a select few in the organization who have specialized skills like Scala or Java and can write code to get such insights. This is not a scalable model.

SQL is a universal language

For more than three decades, SQL has been an accepted way to query a range of database systems. SQL is also one of the most popular skillsets among the key enterprise data personas. With data analysts and data scientists struggling to gain access to real-time data streams, SQL becomes an easy choice for the task. However, there is a key challenge. Unlike database tables, which have a fixed number of rows at any given point in time, streams are unbounded: they are continuous by nature and have no end. Their messages also don’t necessarily arrive in sequence. Some can come in late or out of order. This makes it challenging to adopt SQL as-is to query data streams.

Streaming SQL

Data streams have to be processed in small slices of time called “windows”, e.g. the last 5 seconds. Each message on the stream also has a timestamp that can be used to determine the order in which it should be processed. So, using SQL as the base construct, a few additional keywords were added to handle data streams in the context of time windows. And thus was born Streaming SQL, or Continuous SQL. It looks and functions like regular SQL, but it adds constructs that allow you to group the streams over a specific time window. It also supports a range of aggregation functions, so you can compute averages, sums, counts and so on over the streams. This immediately allows the data analysts and data scientists in the organization to query data streams using SQL! This is what we call the democratization of real-time data within the organization.
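To make the idea of windows concrete, here is a minimal Python sketch of what a windowed aggregation does conceptually. It is not how SQL Stream Builder or Flink is implemented. The function name, the 5-second window size and the sensor readings are all hypothetical, chosen only for illustration. Each message is assigned to a window by its own timestamp rather than by arrival order, which is why a late message still lands in the right window.

```python
from collections import defaultdict

WINDOW_SECONDS = 5  # hypothetical window size, echoing the "last 5 seconds" example


def tumbling_window_averages(messages, window_seconds=WINDOW_SECONDS):
    """Group (timestamp, value) messages into fixed windows and average each one."""
    buckets = defaultdict(list)
    for timestamp, value in messages:
        # Use the message's own timestamp, so arrival order does not matter.
        window_start = (timestamp // window_seconds) * window_seconds
        buckets[window_start].append(value)
    return {start: sum(vals) / len(vals) for start, vals in sorted(buckets.items())}


# Hypothetical readings: (event time in seconds, temperature).
# The reading stamped t=3 arrives late, after the one stamped t=6.
readings = [(1, 20.0), (6, 30.0), (3, 22.0), (8, 34.0), (11, 40.0)]
averages = tumbling_window_averages(readings)
print(averages)  # {0: 21.0, 5: 32.0, 10: 40.0}
```

In Streaming SQL, the same intent is expressed declaratively as an aggregation grouped by a time window, and the engine handles the bucketing and late arrivals for you. Unlike this sketch, which works on a finished list, a real engine emits results continuously as the unbounded stream flows in.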

Figure: SQL Stream Builder brings the simplicity of SQL to harness the value of real-time streaming data

Why should you be excited about SQL Stream Builder?

- Liberates access to real-time data for all user personas – Data analysts and data scientists can use SQL Stream Builder themselves to run ad hoc queries using SQL

- Simplifies building streaming applications – SQL Stream Builder offers an interactive user interface that supports Streaming SQL. This allows users to run continuous queries on data streams over specific time windows. You can also join multiple data streams and perform aggregations.

- Exposes aggregated data streams to other applications – SQL Stream Builder allows you to create materialized views that can be easily exposed to other applications via REST APIs. This again liberates the value locked up in real-time data streams to more applications across the enterprise.

- Accelerates queries with minimal impact on core systems – The true power of SQL Stream Builder is in its underlying engine, which executes these queries extremely fast without burdening the core systems that hold such data streams, e.g. Kafka brokers that hold streams of data within topics.

If you are curious to learn more about continuous SQL, download our new white paper. Or if you want to learn more about SQL Stream Builder, download our Tech Brief or the datasheet.

Even better, if you want to watch a live demo of this product, attend our webinar on March 30th, 2021 – Liberating real-time data access with the new SQL Stream Builder. Register NOW!